How to Iterate Winning Creatives: A Modular Variant System That Beats Fatigue

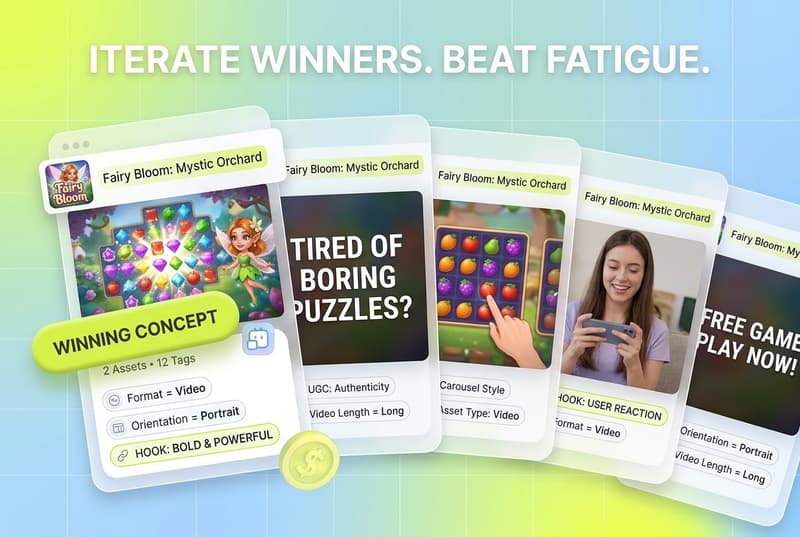

Iterating on a winning creative means producing structured variants of a proven concept rather than chasing new ideas every week, because winners die from fatigue, not from being beaten. For UA managers and creative strategists, that means a modular variant system where hooks, bodies, CTAs, formats, and voiceovers get recombined and tested faster than the audience pattern-matches the ad. Segwise's Creative Generation Agent makes this loop sustainable: it produces data-backed iterations from your winning tags and exports them in every aspect ratio per network, while Segwise's native fatigue detection alerts you before the winner crashes.


Introduction

Most performance teams treat a winning creative like a finish line. They find an ad that pulls, scale spend behind it, and hold their breath. Two weeks later, CTR collapses, CPMs spike, and someone in the Monday meeting mutters about "the algorithm." The ad did not get beaten. It got tired.

This is the most expensive mistake in creative testing. Digital advertising is a mean-reverting system where new ads tend to outperform old ads simply because they are new. Once a creative reaches its half-life, performance does not gracefully decline. It collapses.

The fix is not more ideation. The fix is more iteration. The winning ad is a signal, not an endpoint. Treat it as a launchpad, produce structured variants of what works, and rotate them in before the original fatigues.

This post is a reference for how the iteration tree looks in practice: which components to vary, how aggressively, how many iterations is enough, how to detect fatigue early, and how to kill iterations that do not work. Most teams under-do this step.

Key Takeaways

Creative quality drives roughly 70% of paid media outcomes in 2026, and ad performance drops 15 to 20% within the first two weeks of a creative's lifespan.

Iteration outperforms new ideation because new concepts have a low hit rate (often around 20%). Iterations of a proven winner stack on validated signal instead of starting cold.

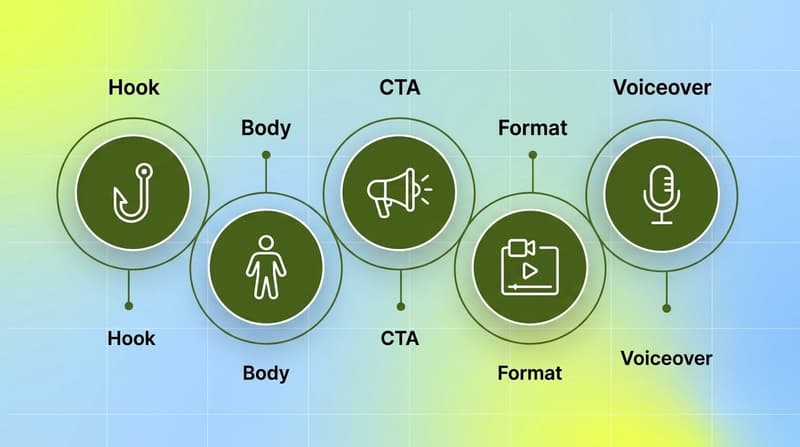

The iteration tree has five branches: hook, body, CTA, format, and voiceover. Vary one variable per test to isolate what is driving performance.

A healthy testing velocity is 3 to 5 new variants per week, with 5,000 to 10,000 impressions per variant for statistical significance.

Fatigue signals to monitor daily: CTR decline of 15% or more over 7 to 10 days, frequency above 2.5 to 3.5 on prospecting, CPA inflation that does not respond to bid changes, and thumb-stop rate dropping below historical average.

Kill iterations that fall below your control's performance after a fair test. Do not nurse them. Your testing pipeline replaces them automatically if the cadence is healthy.

Why iteration outperforms new ideation for ROAS

The intuitive move when a winning ad starts to slip is to brief a brand-new concept. New angle, new hook, new everything. This feels productive. It is also expensive and slow.

The math is unforgiving. If your creative hit rate is 20% (one in five variants performs well enough to deploy) and you retire five ads per week, you need to produce 25 ad variants weekly just to maintain. That is five concepts a week if each concept gets five variants. Most in-house teams cannot sustain that volume from scratch.

Iteration changes the math because the hit rate on iterations of a proven winner is much higher than the hit rate on new concepts. You are not gambling on whether the audience will stop scrolling. You already know they do. You are gambling on whether a different hook or CTA will pull harder than the original. Smaller bet, better odds.

In practice, that means building a creative library of modular derivatives from what is already working and testing it in parallel with your scaling efforts.

There is a second reason iteration beats fresh ideation. The platforms have automated targeting away from advertisers. Meta and Google now construct many more targeted user segments than most advertisers had previously advertised to, and advertisers are blind to how those segments are defined. The only way to pair creative with these algorithmic segments is to feed the system many variants of the same proven concept. New concepts are how you discover new surface area. Iteration is what carries the weekly load.

The iteration tree: hook, body, CTA, format, voiceover

The iteration tree is the structured set of variables you vary off a winning creative. Each branch is a different lever. Each lever, tested in isolation, produces compounding intelligence about why your winner won.

A modular system separates the concept from the execution. Think of it like a kitchen, not a restaurant. A restaurant produces complete dishes from a fixed menu. A kitchen stocks ingredients and combines them in different ways. Six hooks multiplied by four bodies multiplied by three CTAs equals 72 unique combinations from 13 components.
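The kitchen arithmetic above can be checked with a short sketch. The module labels are hypothetical placeholders; only the counts (six hooks, four bodies, three CTAs) come from the text.

```python
from itertools import product

# Hypothetical module labels; only the counts (6, 4, 3) come from the text.
hooks = [f"hook_{i}" for i in range(1, 7)]
bodies = [f"body_{i}" for i in range(1, 5)]
ctas = [f"cta_{i}" for i in range(1, 4)]

# Every (hook, body, CTA) triple is one assembled variant.
variants = list(product(hooks, bodies, ctas))

# Components are what production has to make; variants are what can be assembled.
component_count = len(hooks) + len(bodies) + len(ctas)
print(len(variants), "variants from", component_count, "components")
```

Production cost scales with the sum of the modules, while the number of testable variants scales with their product.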

Here is what each branch of the iteration tree looks like in practice.

Hook variants

The hook is the first one to three seconds. It answers a single question: why should I stop scrolling? Hook performance drives 60 to 70% of whether someone engages with your ad. Test it first.

Modular hook types include a bold text statement, a product unboxing moment, a customer reaction, a pattern-interrupt visual, an emotional resonance line, a founder POV, a problem-agitation question, and a social proof opener. A single winning concept should have five to eight hook modules in rotation. Brightland's Everyday Set campaign tested over a dozen headline variants on the same product staging and layout, isolating which message pulled hardest across origin story, emotional resonance, functional benefit, founder POV, product quality, and consumer insight angles.

Body variants

The body carries the core message. Same concept, different lens: product demonstration, customer testimonial, before-and-after, ingredient deep dive, problem-solution narrative, day-in-the-life, side-by-side comparison. Three to four body modules per concept gives the algorithm a meaningful signal about which structure converts best for which segment.

Body iterations matter because they isolate the message from the hook. If your hook is winning but your body is fatiguing, swapping in a new body extends the creative's lifespan without losing the proven attention-grab.

CTA variants

The CTA closes the loop. Three to four CTA variants per body multiplies your total variant count without proportional production cost. Variants include direct urgency ("Shop Now"), benefit restatement ("See Results"), social proof closing ("Join 120K Customers"), limited availability framing ("Last Chance Today"), risk reversal ("Try It Free for 30 Days"), and category-specific phrasing for app installs versus purchase versus signup.

CTAs are the cheapest module to produce. They can be end cards, voiceover lines, or text overlays swapped in seconds. Test them last in your testing hierarchy, after hooks and bodies, but test them aggressively.

Format variants

Format is the most underused branch of the iteration tree. Most brands run two formats, usually static and short video, and miss the lift available from format diversity. Audiences do not fatigue on your brand; they fatigue on your format. A consumer's thumb pattern-matches your ad layout in 0.3 seconds. Format is the first filter.

The minimum viable format mix for genuine diversity is four: static single image, carousel, short-form video under 15 seconds, and UGC or creator content. Brands with larger budgets should add mid-form video, dynamic product creative, and interactive formats. Adding format diversity typically extends creative lifespan by 30 to 50% before fatigue sets in.

Format iteration also feeds the algorithm. Meta's Advantage+ campaigns perform better when the system has structurally different creative formats to test across placements. A static that wins on Instagram feed will not necessarily win on Reels.

Voiceover variants

For video creatives, voiceover is a separate iteration branch. The same visual with a different voice can change the entire perception of the ad. Test variations like male versus female voiceover, accent or regional voice, energetic versus calm tone, scripted versus conversational pacing, founder voice versus actor voice, and silent versus voiceover (some platforms autoplay muted, so a strong text-on-screen variant can outperform a voiced version).

Voiceover changes are cheap relative to reshooting visuals. They are also the iteration branch most teams skip entirely, which means they leave performance on the table.

How many iterations is enough

The honest answer is more than you are doing. The structured answer is built backward from your retirement rate.

Start from the number of ads you retire each week. Divide by your iteration hit rate. That gives you the number of iterations you need to produce each week to maintain performance.
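As a minimal sketch of that backward calculation: if the hit rate is read as the fraction of produced variants that turn out to be deployable winners, then the weekly output needed to replace retirements is the retirement count divided by the hit rate, rounded up. The function name and the example inputs are illustrative assumptions, not figures from a real account.

```python
import math

def variants_needed(retired_per_week: int, hit_rate: float) -> int:
    """Variants to produce weekly so that expected winners replace retirements."""
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be in (0, 1]")
    # Round first to absorb floating-point noise, then round up to whole variants.
    return math.ceil(round(retired_per_week / hit_rate, 9))

# Hypothetical inputs: 4 retirements at a 40% hit rate, 6 at a 30% hit rate.
print(variants_needed(4, 0.40))  # 10
print(variants_needed(6, 0.30))  # 20
```

A higher hit rate shrinks the required output quickly, which is why iterating on proven winners eases the production load.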

For most performance accounts, the practical target is 3 to 5 new modular variants per week. Not 3 to 5 brand-new concepts. 3 to 5 modular recombinations that isolate one variable each. Week one, test four hook variants against your strongest body and CTA. Week two, test three body variants against the winning hook. Week three, test CTA variants against the winning combination. Week four, test format variants. Repeat with the next concept.

Each variant needs enough impressions to be statistically meaningful. The rule of thumb is 5,000 to 10,000 impressions per variant. At a $10 CPM, that is $50 to $100 per variant. A weekly cycle of four variants costs $200 to $400 in test budget. For brands spending $50K monthly on paid, that works out to roughly 2 to 3.5% of spend allocated to learning (less at higher budgets), and the ROI compounds across every subsequent campaign.
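The test-budget arithmetic can be reproduced in a few lines. This sketch uses the $10 CPM and four-variant weekly cycle from the text; the function name is a hypothetical helper, and you would swap in your own CPM.

```python
def variant_test_cost(impressions: int, cpm_usd: float) -> float:
    """Media cost in USD to buy `impressions` at a given CPM (cost per 1,000)."""
    return impressions / 1000 * cpm_usd

CPM_USD = 10.0          # example CPM from the text
VARIANTS_PER_WEEK = 4   # one weekly cycle of four variants

low = variant_test_cost(5_000, CPM_USD)
high = variant_test_cost(10_000, CPM_USD)
weekly_low = low * VARIANTS_PER_WEEK
weekly_high = high * VARIANTS_PER_WEEK

print(f"per variant: ${low:.0f} to ${high:.0f}")
print(f"per week:    ${weekly_low:.0f} to ${weekly_high:.0f}")
```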

Low-budget accounts can use a tighter version. Evaluate creative performance after about 2,000 users have been reached, watching CTR, outbound clicks, and landing page views as early signal. For higher-spend accounts, winners can usually be identified within 2 to 5 days because volume accelerates learning.

The danger is testing too many variables at once. Most teams change everything between ad versions and learn nothing. They swap the image, the copy, the CTA, and the format simultaneously. When one version outperforms, they have no idea which change drove the result. Variable isolation feels slower per cycle. By cycle three, it is dramatically faster in total learning velocity.
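One way to enforce variable isolation before launch is to compare each variant to the control branch by branch and reject any test that changes more than one. This is a sketch under assumed data shapes: the dictionary keys mirror the five branches of the iteration tree, and the module values are hypothetical.

```python
BRANCHES = ("hook", "body", "cta", "format", "voiceover")

def changed_branches(control: dict, variant: dict) -> list[str]:
    """Return the iteration-tree branches on which the variant differs from the control."""
    return [b for b in BRANCHES if control.get(b) != variant.get(b)]

def is_isolated_test(control: dict, variant: dict) -> bool:
    """A test is isolated when exactly one branch changed."""
    return len(changed_branches(control, variant)) == 1

# Hypothetical control and two candidate variants.
control = {"hook": "unboxing", "body": "demo", "cta": "Shop Now",
           "format": "short_video", "voiceover": "founder"}
hook_swap = {**control, "hook": "customer_reaction"}
everything = {**control, "hook": "bold_text", "cta": "See Results", "format": "static"}

print(changed_branches(control, hook_swap))   # ['hook']
print(is_isolated_test(control, everything))  # False
```

When the hook swap wins or loses, the result is attributable to the hook. The second variant changes three branches at once, so its result would teach nothing about any one of them.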

There is also a ceiling. The creative flood is the state where generative AI tools push static variant production to a volume at which ad accounts saturate themselves and structured testing breaks down. The fix is not infinite variants. The fix is structured iteration with disciplined variable isolation, where every test makes the next test smarter.

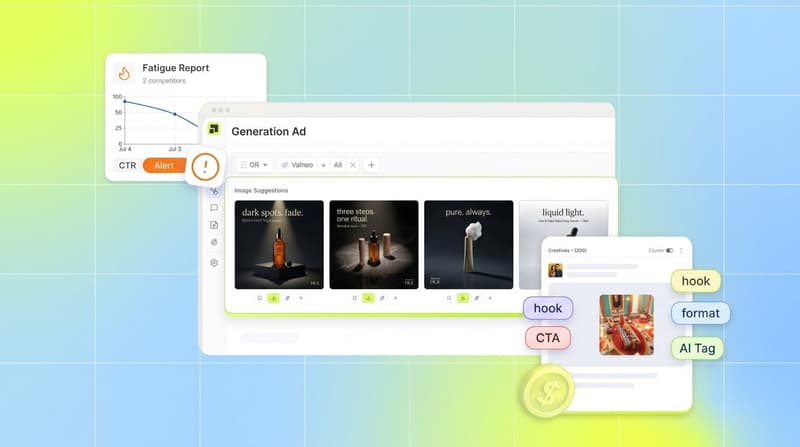

Detecting fatigue early

Creative fatigue does not announce itself. It creeps in. Performance degrades gradually enough that teams attribute the decline to seasonality, competition, or a vague algorithmic change. By the time someone identifies the creative as the problem, weeks of budget have been wasted on exhausted assets.

Build a fatigue monitoring dashboard around the signals below, tracked daily with automated alerts. Thresholds align with established creative iteration playbooks:

CTR decline while impressions stay stable is the cleanest early signal. The platform is still serving your ad. People are still seeing it. They just are not clicking. The audience has pattern-matched the ad and is scrolling past. This typically happens 7 to 10 days into a creative's lifespan for cold audiences, faster for retargeting.

Rising frequency without proportional conversion is the second canary. If frequency climbs and CVR stays flat, you are paying to annoy people who have already decided not to buy. Above 3.0 is a fire alarm.

CPA inflation that does not respond to bid changes points to the creative, not the bid. When your buyer raises or lowers bids and CPA holds, the issue is upstream of bidding. No bid strategy fixes an ad people do not want to engage with.

Thumb-stop rate is the most actionable signal for video. If the percentage of viewers who continue past three seconds drops 15% below historical average, the hook has fatigued. You do not need a new ad. You need a new hook module swapped in.

The point of monitoring daily is to act proactively. Do not wait for the weekly performance review to discover an ad has been bleeding budget for five days.
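A daily check for three of these signals might look like the sketch below. The thresholds (15% CTR decline, frequency above 3.0, thumb-stop rate 15% below baseline) are the ones quoted in this section. The `CreativeSnapshot` fields and signal names are hypothetical and would need mapping to whatever your reporting export provides. CPA response to bid changes is left out because it needs bid history, which this toy structure does not model.

```python
from dataclasses import dataclass

@dataclass
class CreativeSnapshot:
    ctr_start: float            # CTR at the start of the 7 to 10 day window
    ctr_now: float              # CTR at the end of the window
    frequency: float            # average impressions per person
    thumb_stop_now: float       # share of viewers continuing past 3 seconds
    thumb_stop_baseline: float  # historical average thumb-stop rate

def fatigue_signals(s: CreativeSnapshot, ctr_drop: float = 0.15,
                    max_frequency: float = 3.0,
                    thumb_stop_drop: float = 0.15) -> list[str]:
    """Return the names of the fatigue signals that fired for this creative."""
    signals = []
    # Relative CTR decline across the window.
    if s.ctr_start > 0 and (s.ctr_start - s.ctr_now) / s.ctr_start >= ctr_drop:
        signals.append("ctr_decline")
    # Frequency above the alarm level.
    if s.frequency > max_frequency:
        signals.append("high_frequency")
    # Thumb-stop rate well below the historical average points at the hook.
    if (s.thumb_stop_baseline > 0
            and s.thumb_stop_now <= s.thumb_stop_baseline * (1 - thumb_stop_drop)):
        signals.append("hook_fatigue")
    return signals

# Hypothetical snapshots.
tired = CreativeSnapshot(0.020, 0.016, 3.2, 0.30, 0.31)
fresh = CreativeSnapshot(0.020, 0.019, 1.8, 0.31, 0.31)
tired_signals = fatigue_signals(tired)
fresh_signals = fatigue_signals(fresh)
print(tired_signals)  # ['ctr_decline', 'high_frequency']
print(fresh_signals)  # []
```

Returning named signals rather than a single boolean keeps the remedy specific: a lone `hook_fatigue` result calls for a new hook module, not a new ad.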

Killing iterations that do not work

Iteration discipline cuts both ways. As aggressively as you produce variants, you have to retire the losers.

Set kill rules before the test starts. Predefined thresholds remove subjective debate. Pause an iteration when CTR drops below your category benchmark for three consecutive days, when CPA rises 20% above your trailing 14-day average, or when frequency exceeds 2.5 on the core audience. These are rules, not judgment calls. Automate them where possible.
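Those three rules are simple enough to encode directly. The following is a sketch, not a platform integration: the function name and inputs are assumptions, "CPA rises 20% above your trailing 14-day average" is read as the current CPA exceeding 1.2 times that average, and the example numbers are made up.

```python
def pause_reasons(daily_ctr: list[float], ctr_benchmark: float,
                  cpa_today: float, trailing_cpa_14d: float,
                  frequency: float) -> list[str]:
    """Return which predefined kill rules fired; an empty list means keep running."""
    reasons = []
    # Rule 1: CTR below the category benchmark on each of the last three days.
    last_three = daily_ctr[-3:]
    if len(last_three) == 3 and all(c < ctr_benchmark for c in last_three):
        reasons.append("ctr_below_benchmark_3d")
    # Rule 2: CPA more than 20% above the trailing 14-day average.
    if cpa_today > 1.20 * trailing_cpa_14d:
        reasons.append("cpa_20pct_above_trailing")
    # Rule 3: frequency above 2.5 on the core audience.
    if frequency > 2.5:
        reasons.append("frequency_above_2_5")
    return reasons

# Hypothetical iteration: CTR under a 1.0% benchmark for three days, CPA up 30%.
losing = pause_reasons([0.012, 0.009, 0.008, 0.007], 0.010, 13.0, 10.0, 2.1)
healthy = pause_reasons([0.012, 0.011, 0.009], 0.010, 10.5, 10.0, 1.9)
print(losing)   # ['ctr_below_benchmark_3d', 'cpa_20pct_above_trailing']
print(healthy)  # []
```

Because the thresholds are arguments fixed before the test starts, the pause decision stays mechanical, which is the point of writing the rules down first.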

Apply the same discipline to net-new iterations after their fair-test window. If a variant gets 5,000 to 10,000 impressions and underperforms the control, kill it. Do not nurse it. Do not give it "one more day." The opportunity cost is the next variant in your pipeline that could be in rotation instead.

Document why the variant lost. This is where most teams fail. The data says hook variant C dropped CTR by 30%. What does that mean for the next brief? It means the emotional register, the pacing, the visual treatment, or the specific claim did not resonate. Someone needs to identify why and translate that insight into a creative constraint for the next round. The translation layer between data and the next brief is the difference between a testing program and an expensive content factory.

A useful frame is the 70/20/10 rule: 70% of your creative output iterates on proven winners, 20% pushes new concepts within familiar formats, and 10% is genuinely experimental. Kill rules apply hardest in the 70% bucket because that is where you have the most spend allocated.

Keep the original winner running while you test iterations against it. It is a proven asset, and it doubles as the control. The replacement is the iteration that meaningfully beats the original, or the iteration that holds while the original starts to fatigue.

Building a modular iteration system in your stack

A modular iteration system needs four components: a tagged library of winning elements, a production engine for variants, a testing engine for variable isolation, and a feedback loop that translates results into the next brief. Most teams under-build this. It is what separates a creative function from a creative system.

The tagged library is the foundation. Every creative element in every winning ad gets categorized: hook type, body structure, CTA framing, visual style, character, voiceover tone, format, aspect ratio, audio layer. Tags map to performance metrics. Without this layer, you cannot iterate intelligently because you do not know what to iterate on.
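To make "tags map to performance metrics" concrete, here is a toy tagged library with a roll-up of cost per install by tag. It is an illustration under assumed data shapes, not any vendor's schema: the record fields, tag values, and numbers are all hypothetical.

```python
from collections import defaultdict

# Hypothetical records: tags per creative plus its installs and spend (USD).
creatives = [
    {"id": "ad_1", "tags": {"hook": "unboxing", "cta": "urgency"},
     "installs": 120, "spend": 600.0},
    {"id": "ad_2", "tags": {"hook": "unboxing", "cta": "risk_reversal"},
     "installs": 90, "spend": 540.0},
    {"id": "ad_3", "tags": {"hook": "founder_pov", "cta": "urgency"},
     "installs": 40, "spend": 400.0},
]

def cost_per_install_by_tag(records: list[dict], branch: str) -> dict[str, float]:
    """Aggregate spend and installs per tag value on one branch, then divide."""
    totals = defaultdict(lambda: {"installs": 0, "spend": 0.0})
    for r in records:
        tag = r["tags"].get(branch)
        if tag is None:
            continue  # creative was never tagged on this branch
        totals[tag]["installs"] += r["installs"]
        totals[tag]["spend"] += r["spend"]
    return {tag: t["spend"] / t["installs"]
            for tag, t in totals.items() if t["installs"] > 0}

hook_cpi = cost_per_install_by_tag(creatives, "hook")
cta_cpi = cost_per_install_by_tag(creatives, "cta")
print(hook_cpi)
print(cta_cpi)
```

Aggregating by tag rather than by ad is what lets the next brief say "more unboxing hooks" instead of "more ads like ad_1".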

Segwise's Creative Tagging Agent does this foundational work. Its multimodal AI automatically tags every video, audio, image, and text element in a creative, and it is the only platform that tags playable (interactive) ads, which matters for mobile gaming advertisers. Every tag maps automatically to the performance metric it influenced. When you ask "which hook style drove the most installs last month?" or "what's different about my top 5 creatives versus my bottom 5?", the Creative Strategy Agent answers in plain language with full account context across Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, IronSource, and your MMP data from AppsFlyer, Adjust, Branch, or Singular.

Production is where most teams hit the bottleneck. In-house teams below the $200K monthly spend threshold often cannot sustain modular component production internally. Segwise's Creative Generation Agent addresses this directly. It identifies your top-performing tags and generates new creatives built around those winning elements, producing data-backed iterations that you can edit by prompting and export in multiple aspect ratios (1:1, 4:5, 9:16, 16:9) ready to upload to each ad network. The pipeline that took designers days to brief, produce, and revise compresses into hours.

The testing engine sits with your media team. Variable isolation, statistical thresholds, kill rules. Asset Clustering reinforces this. Segwise automatically groups creatives that share underlying assets (same footage, same images, same audio) into clusters. Compare creatives within a cluster and you isolate exactly which treatment change (hook swap, CTA swap, music change, text overlay) drove the performance difference between two similar variants. That is variable isolation enforced by the data, not by the brief.

The feedback loop closes when fatigue detection fires before the winner crashes. Segwise's native fatigue tracking monitors all creatives across platforms for continuous performance decline and spend share drop, with custom thresholds you configure based on your business logic, and alerts via email or Slack when fatigue is detected. The loop becomes: winner identified, tagged, iterated by the Generation Agent in every network's aspect ratio, tested with variable isolation, fatigue detected before crash, next iteration in pipeline rotates in.

Conclusion

The teams that win on paid media in 2026 are not the ones with the most creative ideas. They are the ones with the most disciplined iteration system. Winners die from fatigue, not from being beaten. The cost of underinvesting in iteration is higher than the cost of overinvesting in new ideation. Stop making more ads and start building a system.

The system is the iteration tree. Hook variants first because they drive 60 to 70% of engagement. Body variants next because they extend lifespan when the hook still works. CTA variants third because they are the cheapest module. Format and voiceover variants run in parallel because most teams skip them entirely. Test 3 to 5 modular variants per week with strict variable isolation. Detect fatigue daily. Kill underperformers on rule, not feel. Translate every test result into the next brief.

Treat winning creatives as launchpads, not finish lines. Build the library, build the production engine, and close the feedback loop. Compounded over six months, this can deliver a 25 to 40% improvement in blended ROAS without any change to media buying. For teams running Segwise, that loop is automated end-to-end.

Frequently Asked Questions

What does it mean to iterate winning creatives?

Iterating a winning creative means producing structured variants of a proven concept (different hooks, bodies, CTAs, formats, voiceovers) and testing them with variable isolation. The goal is to extend the original winner's lifespan and discover which elements drive performance. Iteration starts from validated signal; new ideation starts from a hypothesis. Most teams under-do iteration and over-do new ideation, which is why their ROAS is volatile. Segwise's Creative Generation Agent automates the iteration step by generating data-backed variants from your winning tags. Platforms like AdCreative.ai or MagicBrief focus more on tracking which elements work.

How many creative iterations should I run per week?

The practical target for most performance accounts is 3 to 5 new modular variants per week, each isolating a single variable. Each variant needs 5,000 to 10,000 impressions for statistical significance. For brands spending $50K monthly on paid, a weekly testing budget of $200 to $400 works out to roughly 2 to 3.5% of total spend. Lower-spend accounts can use a tighter signal threshold of about 2,000 users reached to read early CTR and outbound click data. Segwise users typically run higher iteration velocity because the Creative Generation Agent removes the production bottleneck.

What is the difference between creative fatigue and creative failure?

Creative fatigue is when an ad that was performing well starts to decline after 7 to 10 days because the audience has seen it too many times or pattern-matched the format. Creative failure is when a new ad does not work from launch because the concept, hook, or targeting is wrong. Fatigue requires rotating in a fresh variant. Failure requires going back to the brief. The signal that distinguishes them is whether the ad ever had a strong CTR window. If yes and it is now declining, that is fatigue. If it never had a strong window, that is failure. Segwise's fatigue tracking, MagicBrief, and Meta's Creative Center all surface fatigue patterns. Only Segwise also generates the replacement variant from your winning tags directly in the platform.

How do I detect creative fatigue early?

Track four daily signals with automated alerts: CTR decline of 15% or more over 7 to 10 days while impressions stay stable, frequency above 2.5 on prospecting audiences, CPM spike of 20% or more without audience changes, and thumb-stop rate dropping 15% below historical average for video. Build a dashboard that surfaces these in near real-time so you act before the winner crashes. Segwise's Creative Strategy Agent handles this monitoring across all integrated networks (Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, IronSource) and MMPs (AppsFlyer, Adjust, Branch, Singular) with configurable fatigue thresholds and Slack or email alerts. Native tools like Meta Ads Manager surface frequency and CPM but require manual cross-platform stitching.

When should I kill an iteration that is not working?

Set kill rules before the test starts. Pause when CTR drops below your category benchmark for three consecutive days, when CPA rises 20% above your trailing 14-day average, or when frequency exceeds 2.5 on the core audience. For net-new iterations, kill any variant that underperforms the control after 5,000 to 10,000 impressions. Do not nurse losers. The opportunity cost is the next variant in your pipeline. Document why the variant lost so the next brief encodes the constraint. Most teams run kill rules manually inside Meta or Google Ads Manager. Teams using Segwise can automate alerts on the same thresholds and surface the next iteration from the Creative Generation Agent automatically.

Why iterate instead of just creating new concepts?

Iteration outperforms new ideation per dollar because the hit rate on iterations of a proven winner is much higher than the hit rate on net-new concepts, which is often only around 20%. Iteration starts from validated signal and stacks on it. New ideation gambles on whether the hypothesis will pull. You still need both. The rough split is 70% iteration on winners, 20% new concepts in familiar formats, and 10% genuinely experimental. Iteration carries the weekly load. Segwise's Creative Generation Agent is built for the 70% bucket. Tools like AdCreative.ai sit closer to the 10% bucket.

Can AI tools replace a modular iteration system?

AI tools accelerate production within a system but do not replace the system itself. Generative AI can produce image variants, write copy alternatives, and assemble video edits. Without a testing framework, kill rules, and a feedback loop, AI just produces more content faster. More content is not the solution. Better-tested, strategically diverse content is. AI is the accelerant. The system is the engine. The creative flood reinforces this: pushing infinite static variants without structured testing creates saturation, not signal. Segwise's Creative Generation Agent only produces iterations grounded in your tag-to-metric mapping, so volume scales with signal, not against it.

What metrics should I track to measure iteration effectiveness?

Track five metrics: average creative lifespan before the fatigue threshold fires, win rate of tested variants (what percentage beat the control), time from insight to next variant in rotation, format diversity ratio (how many distinct formats are active), and blended ROAS trend over rolling 30-day periods. These tell you whether the system is learning and improving. Segwise surfaces creative lifespan, fatigue patterns, and tag-level performance natively. For blended ROAS trend, pair it with your MMP (AppsFlyer, Adjust, Branch, or Singular) and your BI tool. Standalone tools like MagicBrief or Meta Creative Center surface some of these metrics but require manual cross-platform stitching.
