Is Your Media Buyer Actually Good? Here's How to Find Out

Most brands evaluate their media buyers the wrong way. They look at ROAS. They look at CPA. They track whether the numbers went up or down over the quarter. And when things look roughly okay, they assume the buyer is doing their job.

That's the equivalent of managing a salesperson by looking only at monthly revenue and never at their call notes, pipeline hygiene, or follow-up patterns. Average outcomes can hide bad process. A brand might be performing at a 3x ROAS despite their buyer, not because of them.

Curtis Howland, founder of MisfitMarketing.co and the person behind $2.2M+ in managed monthly ad spend, made exactly this point in a LinkedIn post that sparked hundreds of comments: most brands hire media buyers and track average outcomes instead of operational quality. His solution is elegant and visual: a single scatter plot that tells you whether your buyer is actually doing the work.

This post breaks down that framework, goes beyond the single chart, and gives you a full evaluation system for assessing your media buyer's operational competence, not just their results.

Key Takeaways

Tracking only aggregate ROAS or CPA masks the quality of your media buyer's operational decisions

A CPA vs. spend scatter plot on a log scale reveals whether a buyer is cutting losers fast and scaling winners correctly

Curtis Howland's four-chart system also examines when changes are made, how large those changes are, and whether changes correlate with improved CPA

A well-optimized account shows no high-spend/high-CPA ads (red zone), few low-spend/low-CPA ads (green zone), and a cluster of properly scaled winners

Red flags in media buying are behavioral, not just metric-based: messy account structure, audience obsession over creative, and "copy" ads everywhere all signal poor craft

Process metrics are a better leading indicator than performance metrics, which lag by days or weeks


Why Average Performance Metrics Mislead You

Performance metrics tell you what happened. They rarely tell you why, or whether your buyer deserves credit for it.

Consider: a brand might hit its CPA target on a given month because the creative team shipped three strong hooks. The buyer's job was to scale them. If they didn't scale the right ones, or scaled too slowly, or kept spend on losers while the winners sat at low budgets, the brand still hit target, but left significant returns on the table.

Research from RedTrack in 2025 makes this point directly: tracking KPIs in isolation is never enough. The real power is integrating them into a view of performance where attribution is accurate and decisions are clear. A buyer who hits 3x ROAS by accident is a very different hire than one who engineered it.

The other issue: aggregate metrics have a natural lag. By the time a bad month shows up in your ROAS, the buyer has been making suboptimal decisions for 30-60 days. Process metrics catch problems earlier. They're behavioral. They're visible in the account today.

The Scatter Plot That Reveals Everything

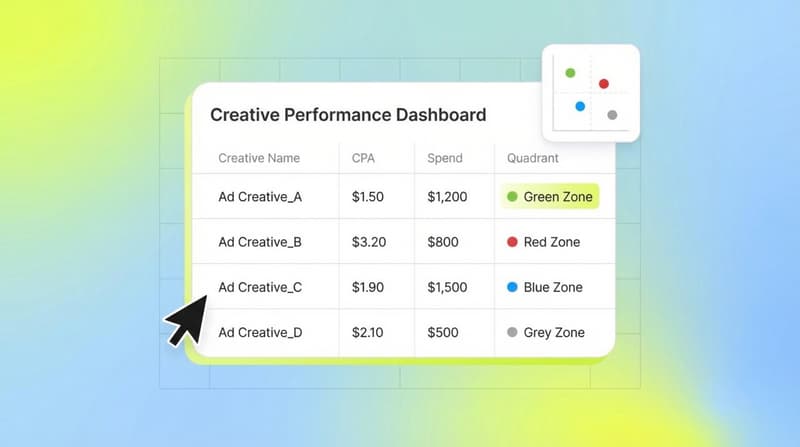

This is the chart Howland popularized. It requires no special tool, just Meta Ads Manager data and a spreadsheet.

How to build it:

Pull all ads from the last 30-90 days (30 days for a large account, 60-90 for a smaller one)

Filter for prospecting only. Remove BOFU, remarketing, awareness, and any non-prospecting campaigns

If you track multiple products, filter for one product at a time

Set Y axis: CPA (cost per acquisition)

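Set X axis: total spend per ad, on a log scale. The log scale is critical, not optional: spend per ad spans orders of magnitude, and a linear axis crushes the low-spend ads against the left edge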

Each dot is one individual ad

Draw a horizontal line at your CPA target

Draw a vertical line at a minimum spend threshold (what you consider "enough spend to have data")

This creates four quadrants:

Top right (red zone): High CPA, high spend. These are losers that kept getting budget. The buyer saw the data and let them run anyway. This is the clearest sign of poor optimization discipline.

Bottom left (green zone, or grey): Low CPA, low spend. These are often your next winners. They've proven efficiency at low volume but haven't been scaled. A small number of these is normal. A large cluster means the buyer is missing winners sitting right in front of them.

Bottom right (purple zone): Low CPA, high spend. These are your scaled winners. A well-optimized account should have a handful here, often just 1-5% of all ads, but driving 50%+ of revenue.

Top left: High CPA, low spend. These are in the testing phase. Most ads live here and that's normal. The buyer's job is to move them right if they improve, or kill them if they don't.
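The quadrant logic above can be sketched in a few lines of Python. This is a minimal illustration, not Howland's own tooling: the `Ad` record, the `classify` and `quadrant_shares` helpers, the short zone labels, and the sample thresholds (a $50 CPA target and a $150 minimum spend) are all assumptions made for the example. Swap in your own export and targets.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Ad:
    name: str
    spend: float       # total spend over the review window
    conversions: int   # the event your CPA is based on


def classify(ad, cpa_target, min_spend):
    """Return the zone an ad falls into on the CPA vs. spend chart."""
    # An ad with zero conversions has no finite CPA; treat it as above target.
    cpa = ad.spend / ad.conversions if ad.conversions else float("inf")
    high_spend = ad.spend >= min_spend
    high_cpa = cpa > cpa_target
    if high_cpa and high_spend:
        return "red"       # top right: loser that kept getting budget
    if high_cpa:
        return "testing"   # top left: still in the testing phase
    if high_spend:
        return "purple"    # bottom right: scaled winner
    return "green"         # bottom left: efficient but not yet scaled


def quadrant_shares(ads, cpa_target, min_spend):
    """Fraction of ads in each zone."""
    counts = Counter(classify(ad, cpa_target, min_spend) for ad in ads)
    return {zone: counts[zone] / len(ads)
            for zone in ("testing", "green", "purple", "red")}


# Hypothetical sample data, for illustration only.
ads = [
    Ad("hook_a", spend=40.0, conversions=0),      # no conversions yet
    Ad("hook_b", spend=60.0, conversions=2),      # CPA 30, low spend
    Ad("demo_1", spend=2000.0, conversions=50),   # CPA 40, high spend
    Ad("ugc_3", spend=900.0, conversions=6),      # CPA 150, high spend
]
shares = quadrant_shares(ads, cpa_target=50.0, min_spend=150.0)
```

If you plot the same data in code rather than a spreadsheet, remember to log-scale the spend axis (in matplotlib, for example, `plt.xscale("log")`).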

A well-run account looks like a diagonal band from top-left to bottom-right with nothing in the top-right. If you see a scattered mess with dots everywhere, Howland is direct about the interpretation: the problem is media buying, not creative.

In a documented case study, Howland's team took over an account that showed exactly this pattern: a scattered mess of dots. With no new creatives, they more than doubled spend from $446K to $981K and cut CPA by 48% over 90 days, purely through better allocation.

Four Charts to Fully Evaluate Your Buyer

The scatter plot is the first chart. Howland's fuller system uses four, and each diagnoses a different failure mode.

Chart 1: The CPA vs. Spend Scatter Plot

Covered above. Diagnoses: allocation discipline and winner identification.

Chart 2: When Is Media Buying Happening?

This chart requires the Meta Ads Manager change log, which you open from the clock icon in the top right corner.

Pull the last 90 days. Copy to a spreadsheet. Create a bar chart: changes made by day of the week and time of day.

What you don't want to see: 90% of budget changes happening on Monday morning. Or entire days where no one touched the account. Or a single massive weekly review replacing daily hands-on management.

What good looks like: consistent, small optimizations distributed through the week, no single day accounting for the majority of account touches. A buyer who logs in once a week is not doing the work.
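As a rough sketch of the day-of-week tally, the snippet below counts changes per weekday and reports the busiest day's share. It assumes you have already copied the change-log timestamps into ISO 8601 strings; the real export format may differ, and `weekday_shares` and the sample log are hypothetical names and data used only for illustration.

```python
from collections import Counter
from datetime import datetime

DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]


def weekday_shares(timestamps):
    """Share of account changes made on each day of the week."""
    days = [DAY_NAMES[datetime.fromisoformat(ts).weekday()]
            for ts in timestamps]
    counts = Counter(days)
    return {day: n / len(days) for day, n in counts.items()}


# Hypothetical change-log timestamps (assumed ISO 8601 after cleanup).
change_log = [
    "2025-03-03T09:05:00",  # Monday
    "2025-03-03T09:20:00",  # Monday
    "2025-03-03T09:45:00",  # Monday
    "2025-03-05T14:10:00",  # Wednesday
]
shares = weekday_shares(change_log)
busiest_day, busiest_share = max(shares.items(), key=lambda kv: kv[1])
```

In this made-up log, three of the four changes land on Monday, which is the batching pattern described above. The same tally keyed on the hour of each timestamp gives the time-of-day view.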

Chart 3: Are the Changes Making Things Better?

Take the change log data from Chart 2. Plot the size of budget changes (as a percentage) against next-day CPA.

The question: when they make bigger changes, does CPA go down?

If the trend line slopes downward to the right, bigger changes correlate with improvement. That's good. If the line is flat, that's neutral. If the line slopes upward to the right, larger changes are consistently followed by worse CPA. That's a direct sign you're paying someone to destabilize your algorithm.

Good media buyers make small, measured changes. Budget adjustments of 10-20% are appropriate. Dramatic 50%+ swings can send ad sets back into the learning phase and reset the algorithm's optimization, which pushes CPA up in the short term without long-run benefit.
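One way to read the trend line without eyeballing it is to compute its slope. The sketch below fits an ordinary least-squares line to pairs of budget-change size and next-day CPA. The `trend_slope` helper and the sample numbers are hypothetical, and a slope from a handful of noisy days shows correlation only, so treat it as a prompt for a conversation rather than a verdict.

```python
def trend_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Hypothetical pairs: size of each budget change (%) and next-day CPA ($).
change_pct = [10, 15, 20, 50, 80]
next_day_cpa = [42.0, 41.0, 43.0, 55.0, 63.0]

slope = trend_slope(change_pct, next_day_cpa)
# Positive slope: larger changes are followed by higher CPA.
# Negative slope: larger changes are followed by lower CPA.
# Near zero: no visible relationship.
```

With this invented data the slope is positive: the 50% and 80% swings are followed by noticeably higher CPA than the 10-20% adjustments.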

Chart 4: Who Is Making Changes?

The last chart examines ownership. Pull the change log and categorize by user.

Optimal: one person is responsible for 85-90% of changes. That person has deep context on the account's history, knows which ads ran last month, and understands how the algorithm has been responding. Multiple buyers sharing an account typically leads to contradictory decisions, no one accountable, and the algorithm seeing inconsistent signals.

If your account has five people making significant changes, no one truly owns it.
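The ownership check is a simple tally. A minimal sketch, assuming you have one user name per change-log row; `primary_owner` and the names in the sample are hypothetical.

```python
from collections import Counter


def primary_owner(change_users):
    """Return the most active user and their share of all changes."""
    counts = Counter(change_users)
    user, n = counts.most_common(1)[0]
    return user, n / len(change_users)


# Hypothetical change log: one entry per change, keyed by who made it.
users = ["dana"] * 18 + ["lee"] * 2
owner, share = primary_owner(users)
```

Here one person made 18 of 20 changes, a 90% share, which sits inside the 85-90% range described above.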

Behavioral Red Flags That Compound the Charts

Metrics catch what happened. Behavior patterns show you what will keep happening.

Batuhan Ulker's breakdown of red flags and Ciaran Finn's 10-sign list each describe the same underlying pattern: buyers who substitute busyness for strategic thinking.

The specific behaviors to look for:

Audience obsession over creative focus. In 2024-2025, audiences on major platforms have broadened dramatically through machine learning. Meta's Advantage+ and TikTok's Smart+ audiences reach well beyond manually defined interest stacks. A buyer who still spends most of their time on audience segmentation is working the wrong lever. Point2Web's 2025 analysis of common media buying mistakes lists this directly: ignoring creative performance data in favor of targeting manipulation is a persistent and costly pattern.

No UTM tracking. Without proper UTM parameters, you can't attribute traffic accurately. This is a basic hygiene requirement, not an advanced practice.

Messy account structure. "Ad copy 3 - copy - final - final2" in campaign names isn't just aesthetically bad. It means the buyer lacks a systematic testing process. Organization and performance correlate: buyers who can't organize their naming conventions usually can't organize their testing logic either.

Over-allocation to remarketing. Remarketing audiences have natural size limits and are typically already warm. Spending more than 20-25% of budget here starves prospecting campaigns that drive new customer growth. The bias toward remarketing often comes from buyers chasing the easiest ROAS wins, which come from audiences who were going to convert anyway.

Killing ads too early. Learning phases on Meta and TikTok require sufficient spend to stabilize, typically at least 50 conversion events per ad set. Buyers who cut underperformers within 48 hours, before learning completes, waste the test spend and never get real data.

Blaming audiences for poor performance. Buyers who explain underperformance with "the audience is saturated" or "the targeting needs work" without examining creative performance first are showing you where their analytical framework stops.

What Good Looks Like, Operationally

Good media buyers aren't just optimizers. They're diagnosticians. RocketShip HQ, drawing on $100M+ in managed mobile ad spend, describes the orientation this way: start with a clear metric hierarchy before touching data. Primary KPI first (usually ROAS or CPI), then supporting metrics in order of proximity to revenue, with spend volume as a second lens to validate significance.

A strong buyer looks at a struggling creative and doesn't just turn the spend down. They examine where in the funnel it's breaking. Is the hook rate strong but CVR weak? The problem is post-click. Is the hook rate low? The problem is the first three seconds. Is engagement high but ROAS poor? The problem might be the landing page or offer, not the creative at all.

They also know the difference between an ad that failed and an ad that didn't get enough data to tell. Setting a minimum spend threshold before drawing conclusions (at least 3x your target CPA, or $100, whichever is higher) is the kind of discipline that separates buyers who run on intuition from buyers who run on evidence.
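The threshold rule is easy to encode so it gets applied the same way every time. This is a sketch under the rule stated above (3x target CPA or $100, whichever is higher); the function names and the three verdict labels are assumptions for illustration.

```python
def min_spend_threshold(target_cpa, multiple=3.0, floor=100.0):
    """Minimum spend before judging an ad: the higher of 3x CPA or $100."""
    return max(multiple * target_cpa, floor)


def verdict(spend, conversions, target_cpa):
    """Separate 'failed' from 'not enough data to tell'."""
    if spend < min_spend_threshold(target_cpa):
        return "not enough data"
    # Past the threshold with zero conversions counts as above target.
    cpa = spend / conversions if conversions else float("inf")
    return "on target" if cpa <= target_cpa else "failed"


# With a $50 target CPA the threshold is $150; with a $20 target it is $100.
examples = [
    verdict(spend=80.0, conversions=0, target_cpa=50.0),    # below threshold
    verdict(spend=300.0, conversions=2, target_cpa=50.0),   # CPA 150
    verdict(spend=300.0, conversions=10, target_cpa=50.0),  # CPA 30
]
```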

And they document. If a winning ad gets paused for a reason that isn't clear, the next buyer who opens the account won't know whether to retest it. Howland's team specifically found, when taking over a new account, that retesting paused winners with new copy was one of the highest-return actions available. Context that lives only in the buyer's head is context that's gone when they are.

A Framework for Your Monthly Buyer Review

If you want to make this systematic, build a review using the four charts above plus this checklist:

Monthly (first week of month):

- Pull the scatter plot for the last 30 days. Is the red zone empty? Are green-zone ads getting scaled?

- Review the change log for timing. Are changes distributed through the week, or batched on one day?

- Check change-to-CPA correlation. Are larger changes helping or hurting?

- Verify account ownership. Is one primary buyer making the majority of changes?

Ongoing (weekly check-in):

- What was paused this week and why?

- What's being scaled and what's the current CPA trajectory?

- What new ads are in testing and what's the plan if they don't hit threshold?

- Are creative briefs informed by what's working in the account, or is the buyer a passive recipient of whatever the creative team ships?

The last question matters more than most brands realize. The best buyers have opinions on creative. They analyze why winning ads win (hook style, format, offer clarity, emotional tone) and feed that back into the brief. A buyer who can't articulate what made last month's top ad work isn't fully doing the job.

The Link Between Media Buying Quality and Creative Performance

There's a dynamic that often gets missed: poor media buying distorts your creative data.

If a buyer consistently lets losing ads overspend before cutting them, the performance data becomes noisy. You can't tell if a creative concept failed because the concept was weak or because it got oversaturated before it had a chance to find an audience. If they don't scale winning ads aggressively enough, you'll never know which concepts could sustain real volume.

This is why the scatter plot is so revealing. The distribution of dots tells you about allocation discipline, but it also tells you whether you can trust the creative performance signals coming out of your account.

This is also where Segwise becomes useful. Segwise connects creative and performance data from 15+ ad networks, including Meta, Google, TikTok, AppLovin, and Unity Ads, plus MMPs including AppsFlyer, Adjust, Branch, and Singular. It applies multimodal AI to tag every creative element (hook style, visual format, audio, CTA) and maps those tags to performance metrics. When you have that systematic tagging across your entire asset library, you can audit whether your buyer's scaling decisions match what the creative data shows was actually working. If they were scaling the flashy creative while a quieter product-demo ad sat at low budget with better ROAS, that shows up clearly. Do your creative performance signals align with your buyer's allocation decisions? That's the diagnostic Segwise makes straightforward.

Conclusion

The question of whether your media buyer is good is really a question about whether you're measuring the right things.

Aggregate ROAS and CPA give you a lagging, averaged view that hides operational quality. The scatter plot gives you a real-time view into allocation discipline. The change log charts give you visibility into when work happens, how large the decisions are, and whether those decisions are improving the account. The behavioral red flags tell you about the buyer's underlying model of how good performance actually gets built.

Most brands won't run these analyses. That's the gap. Buyers who operate in environments where only aggregate outcomes are tracked have no accountability for process, and process is where both value and waste are created.

Run the scatter plot. Pull the change log. Talk to your buyer about why specific ads were cut and what the plan is for the ones sitting in the bottom-left quadrant. You'll learn more about their quality in that conversation than in six months of monthly reports.

If you want a cleaner way to see whether your buyer's allocation decisions match what your creative data actually shows, Segwise is worth looking at. It unifies creative performance data from 15+ ad networks and MMPs (AppsFlyer, Adjust, Branch, Singular) and uses multimodal AI to tag every creative element, so you can see exactly which hooks, formats, and CTAs drove your results, and whether your buyer was actually scaling the right ones.

Frequently Asked Questions

How often should I review my media buyer's performance?

A brief weekly check-in is ideal for in-flight decisions. The more detailed four-chart analysis works well monthly. Quarterly reviews tend to be too infrequent to catch and correct operational problems before they compound into performance problems.

What's a realistic CPA vs. spend distribution for a healthy account?

In a well-optimized account, expect roughly 60-70% of ads in the top-left quadrant (high CPA, low spend, in testing), 5-15% in the bottom-left showing efficiency at low spend but awaiting scaling, and 1-5% in the bottom-right as scaled winners. Whatever remains sits in the top-right red zone; it should be as close to empty as possible, and any ads there should be actively getting cut. If top-right exceeds 15-20%, you have an allocation problem.

Is it possible to over-optimize a media buying account?

Yes. Making too many changes too frequently disrupts the learning phase on Meta and TikTok, which requires a stable signal to optimize delivery. Good buyers make calibrated, small adjustments rather than frequent resets. The "change size vs. next-day CPA" chart from Howland's framework will often reveal over-optimization directly.

What should I do if my buyer blames algorithm updates for poor performance?

Ask them to pull the scatter plot. If the distribution looks healthy, algorithm conditions might genuinely be a factor. If you see a lot of red-zone ads or an absence of scaled winners, the account management is the primary issue, not external conditions. Algorithm changes hit every advertiser on the platform; allocation quality is buyer-specific.

How do I evaluate a media buyer before hiring one?

Ask them to audit a sample account or show you their process documentation. Questions that reveal quality: How do you decide when to cut an underperforming ad? What does your change log look like on a normal week? How do you identify which ads to scale? How do you close the loop with the creative team? Buyers who can answer these concretely with examples have a systematic approach. Buyers who answer in generalities don't.

What's the biggest mistake brands make when managing media buyers?

Tracking average outcomes only. A buyer who hits monthly targets while leaving 30% of potential revenue on the table through poor allocation is harder to identify, but just as costly, as a buyer who misses targets outright. Process metrics are the only way to see this clearly.

Does the scatter plot approach work for non-Meta platforms?

The principle applies across platforms, but the ease of implementation varies. Meta Ads Manager makes pulling creative-level CPA and spend data relatively straightforward. Google and TikTok require more data work to replicate the view. The underlying logic (eliminate high-spend losers, identify and scale low-spend winners) is universal to paid media.

At what spend level does this analysis become useful?

Howland suggests a minimum 30-day window on accounts spending enough to generate meaningful data at the ad level, roughly $50-100 per ad. At very low spend levels, statistical noise makes the scatter plot harder to interpret. For smaller accounts, a 60-90 day window helps gather enough data points to see the pattern clearly.
