How Many Ads Should You Produce Each Month? A Budget-Based Framework

Most creative teams treat ad production volume as a gut-feel decision. Someone in a Slack channel says "we need more ads," the team scrambles to produce 20 new concepts, most of them get about $30 in spend (too little for the data to mean anything), and nothing conclusive comes out the other end.

In 2025 and into 2026, this is getting more expensive to get wrong. Ad platforms are burning through creative faster. Creative fatigue is measurable now, tracked by platform algorithms through entity IDs unique to each image or video file. Meta's algorithm generally needs around 50 conversions per week per ad set to exit the Learning Phase, so flooding an account with underfunded creative doesn't just waste budget -- it actively slows learning.

At the same time, brands testing fewer than five new concepts per month show nearly half the median ROAS of those testing 15 or more. The math runs in both directions: too many underfunded tests, and too few tests altogether, both hurt performance.

This post breaks down a practical, budget-anchored framework for figuring out exactly how many ads to produce monthly, what format split makes sense, and how to structure the mix between new concepts and iterations of proven winners.

Key Takeaways

Target roughly 1 new creative concept per $2,000-3,000 in monthly Meta spend as a starting benchmark, according to MHI Media's analysis of 80 DTC accounts

Brands testing 15+ concepts per month had 1.8x higher median ROAS than brands testing fewer than five

Only 2% of creatives tested ever become scalable winners -- which means low-volume testing isn't a strategy, it's wishful thinking

For each video concept, start with 2-3 hook variations. For each static concept, aim for 5-8 variations

Allocate roughly 70-80% of budget to scaling proven winners; dedicate 10-20% to testing new creative

Using 5-7 creatives refreshed weekly delivers a 1.5x ROAS improvement for performance campaigns

Track creative production separately from campaign spend -- volume is a production systems problem, not just a budget problem


Step 1: Start with your monthly spend budget

Before thinking about creative styles, formats, or concepts, you need to anchor your production target to actual spend.

A practitioner who has tested nearly 10,000 Facebook ads published a framework that holds up well in practice:

For brands spending under $50,000/month: 1 new concept per $5,000 in spend

For brands spending over $50,000/month: 1 new concept per $10,000 in spend

So a brand spending $100,000/month should target around 10-15 new concepts. A brand at $500,000/month should be producing roughly 50.
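The two-tier rule above is simple enough to sketch in a few lines. This is only an illustration: the function name is made up for this example, and because the rule only says "under" and "over" $50,000, treating exactly $50,000 as the higher tier and rounding down are both assumptions.

```python
def monthly_concept_target(monthly_spend: float) -> int:
    """Rough number of new concepts per month under the two-tier rule."""
    # Assumption: exactly $50,000 falls into the higher tier; the rule
    # itself only specifies "under" and "over" $50,000.
    dollars_per_concept = 5_000 if monthly_spend < 50_000 else 10_000
    # Round down (an assumption), but never go below one concept.
    return max(1, int(monthly_spend // dollars_per_concept))


for spend in (20_000, 100_000, 500_000):
    print(f"${spend:,}/month -> {monthly_concept_target(spend)} new concepts")
```

Applied literally, the rule gives 10 concepts at $100,000/month, the low end of the 10-15 range quoted above, and 50 at $500,000/month. It also drops sharply at the tier boundary ($45,000 gives 9, $50,000 gives 5), so treat the output as a starting point rather than a precise target.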

MHI Media's analysis of 80 DTC accounts breaks this down further by tier:

The logic behind these numbers: at lower spend levels, you have less statistical power per concept, so you need to test more angles to find winners before running out of budget runway. At higher spend levels, the algorithm has more data to work with and can learn faster from fewer concepts if they're structured correctly.

Step 2: Decide your video vs. static split

Not all concepts take the same production effort or budget, so splitting by format matters both for planning and spend allocation.

For most DTC and mobile app brands, a target of 70-80% video spend makes sense. That said, the right split depends on the product:

Products with complex mechanisms or strong transformations: lean toward more video

Products where visual aesthetics are the main appeal: static ads can hold a larger share

Using the $500k/month example from above, 50 concepts would break into roughly 35 video and 15 static.

Each of those concepts isn't a single ad, though. From each concept, you produce variations:

Video concepts: 2-3 hook variations each (start with just one hook when testing an unproven concept, to keep test spend low)

Static concepts: 5-8 variations each

That math produces roughly 70-105 video ads and 75-120 static ads per month for a $500k brand. Production systems matter a lot here -- without batch filming and templated static production workflows, these numbers are impossible to hit sustainably.
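To make that arithmetic reusable for other spend levels, here is a minimal sketch. The function name and the 70% default video share are assumptions chosen to match the $500k example; the 2-3 and 5-8 variation ranges come from the guidance above.

```python
def monthly_ad_volume(concepts: int, video_share: float = 0.7) -> dict:
    """Split concepts by format and return (low, high) ad counts per format."""
    video_concepts = round(concepts * video_share)
    static_concepts = concepts - video_concepts
    return {
        "video_concepts": video_concepts,
        "static_concepts": static_concepts,
        # 2-3 hook variations per video concept
        "video_ads": (video_concepts * 2, video_concepts * 3),
        # 5-8 variations per static concept
        "static_ads": (static_concepts * 5, static_concepts * 8),
    }


plan = monthly_ad_volume(50)
print(plan)
```

With 50 concepts this returns 35 video and 15 static concepts, or 70-105 video ads and 75-120 static ads, matching the figures above.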

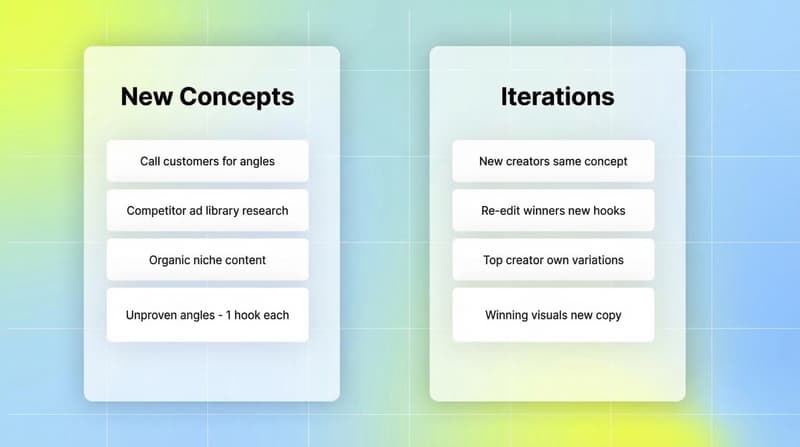

Step 3: Split between new concepts and iterations

Volume alone won't save you if everything is a first-test concept. For established brands with enough performance data, a roughly 50/50 split between new concepts and iterations of proven winners tends to work well.

Iterations aren't just reskins. The most effective approaches include:

Finding new creators for the same concept that already worked

Re-editing existing winners with new hooks, music, or captions

Asking high-performing creators to produce their own variations

Taking winning visuals and testing different copy angles over them

For new concepts: call customers, review competitor ads in the Meta Ad Library, analyze organic content in your niche, and build from what's already resonating with your audience.

New concepts carry more risk and more upside. Iterations carry less of both. A healthy pipeline needs both running in parallel.

Why the math matters more than you think

Only 2% of creatives tested ever become scalable winners, according to data from hundreds of Meta ad accounts analyzed by brkfst.io. If you're testing 10 ads per month, you might not find a single winner in a given month. Test 50 and the numbers start working in your favor.
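A quick way to see why volume matters at a 2% hit rate is to compute the chance of finding at least one winner in a month. The sketch below assumes every ad has the same independent 2% chance of winning, which is a simplification (real ads are not independent draws), and the function name is hypothetical.

```python
def chance_of_at_least_one_winner(ads_tested: int, win_rate: float = 0.02) -> float:
    """P(at least one winner) = 1 - P(no winners), assuming independent ads."""
    return 1 - (1 - win_rate) ** ads_tested


for n in (10, 50):
    p = chance_of_at_least_one_winner(n)
    print(f"{n} ads: {p:.0%} chance of at least one winner, "
          f"{n * 0.02:.1f} expected winners")
```

Under those assumptions, 10 ads give roughly an 18% chance of at least one winner in the month, while 50 ads give roughly 64%.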

But this isn't just a volume argument. Advertisers running 5 or more creative variants per ad set see 20-30% lower CPA than those running 1-2 variants, according to Meta Ads testing data. Structure still matters. Quality still matters. Volume without either just burns budget faster.

The budget question also connects directly to creative fatigue. Lo-fi UGC content, for example, typically burns out in just 7-10 days on performance campaigns. A winning ad that delivers strong results in week one can be fatiguing audiences in week two. Creative fatigue is the fastest way to kill Meta ad performance, and brands that aren't producing new creative fast enough are constantly scrambling to replace burned-out assets instead of scaling winners.

The formula: 70-80% of budget scales proven winners while 10-20% funds ongoing testing. That ratio keeps the pipeline moving without killing current performance.

The budget-per-creative constraint

Volume targets only work if each ad gets enough spend to generate meaningful data. Each creative needs a minimum of $100-150 in spend to produce statistically reliable results, according to AdManage's 2025 creative testing framework.

That creates a real ceiling. If you have $500 to allocate toward testing in a given week, you can meaningfully test five creatives, not fifty. Spreading that budget across too many ads at once means none of them exits the Learning Phase with enough data to trust.
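The ceiling is just a division, sketched below. The function name is hypothetical, and the $100 default is the low end of the $100-150 range cited above; pass 150 for a more conservative count.

```python
def max_testable_creatives(test_budget: float,
                           min_spend_per_creative: float = 100.0) -> int:
    """How many creatives can each receive the minimum meaningful spend."""
    return int(test_budget // min_spend_per_creative)


print(max_testable_creatives(500))        # low end of the range: $100 each
print(max_testable_creatives(500, 150))   # high end of the range: $150 each
print(500 / 50)                           # spend per ad if spread across 50 ads
```

At $500 per week that works out to five creatives at $100 each, or three at $150 each. Spread across fifty ads, each one would get only $10.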

Meta recommends at least 50 conversions per week per ad set for the algorithm to optimize efficiently. If your brand has a longer sales cycle or higher CAC, you may need to consolidate ads and prioritize fewer, better-funded tests over any given period.

Budget allocation for testing vs. scaling

The numbers only work if you're protecting some spend for testing. The general guideline from several practitioners and agency frameworks lands in the same place: 10-20% of monthly media spend goes toward testing new creative.

That 10-20% is investment spending, not performance spending. You shouldn't expect it to hit the same ROAS as your proven winners. You're buying learnings, not conversions. The payoff comes when one of those tests breaks through and becomes the next winner you scale.
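As a rough planning aid, the guideline percentages can be turned into dollar ranges. This is a sketch only: the function name is made up, and the 70-80% scaling range is taken from the earlier guideline. How any remainder between the two ranges is used is not specified in the guideline, so the sketch leaves it out.

```python
def budget_ranges(monthly_spend: int) -> dict:
    """Return (low, high) dollar ranges for testing and scaling budgets."""
    def pct(p: int) -> float:
        # Integer percentages keep the arithmetic exact for whole-dollar spend.
        return monthly_spend * p / 100

    return {
        "testing": (pct(10), pct(20)),   # 10-20% funds new creative tests
        "scaling": (pct(70), pct(80)),   # 70-80% scales proven winners
    }


print(budget_ranges(10_000))
```

For a $10,000/month account this gives $1,000-2,000 for testing and $7,000-8,000 for scaling.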

Some categories of spend where test budget tends to generate outsized returns:

Founder-filmed video (near zero production cost, often high authenticity)

UGC creators ($150-200 per piece for initial tests)

Hook variations on existing winning concepts (low production cost, high test value)

New format experiments (playable, carousel, short vs. long video)

What this looks like at different scales

For small-budget brands (under $5,000/month), the constraint isn't spend -- it's production resources. Focus entirely on low-cost formats: founder-filmed video, phone photography. Commit to one filming session per week, producing 2-3 hook variations per concept. That's 4-8 test-ready concepts per month with near-zero production cost.

For mid-budget brands ($5,000-$20,000/month), invest in systematic production. Batch filming once a month can produce 8-12 concepts in a single session. Add two to three UGC creators and you're consistently hitting 10-15 new concepts per month.

For high-budget brands ($100,000+/month), creative is the primary growth lever, and production needs to be treated as infrastructure. At this scale, agencies typically produce 20-50 unique assets per month for a single client. Mobile gaming studios at peak scale go much further -- Kingshot deployed 3,000+ creatives per day at peak growth, according to the Global Mobile Gaming UA Trends report Q1-Q3 2025.

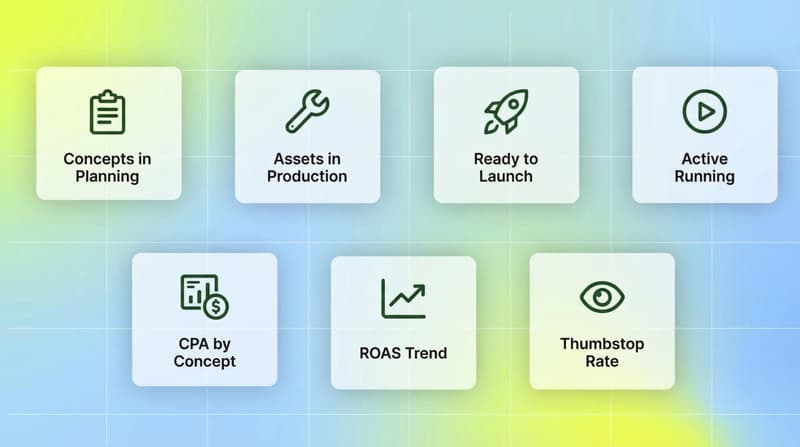

Tracking production as a performance metric

Most teams track ad performance obsessively. Fewer track production performance. If you want to close the gap between your volume target and your actual output, you need visibility into both.

Track:

Concepts in ideation/planning

Assets in active production

Assets ready to launch

Active running concepts

Performance by concept (CPA, ROAS, thumbstop rate by format)
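One lightweight way to get that visibility is a per-stage count of concepts. The sketch below is illustrative only: the stage names, the `Concept` fields, and the sample data are all hypothetical, and a real tracker would pull from whatever project tool or spreadsheet the team already uses.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical stage names mirroring the tracking list above.
STAGES = ("ideation", "in_production", "ready_to_launch", "active")


@dataclass
class Concept:
    name: str
    stage: str   # one of STAGES
    fmt: str     # "video" or "static"


def pipeline_snapshot(concepts: list) -> dict:
    """Count concepts in each stage, including stages with zero concepts."""
    counts = Counter(c.stage for c in concepts)
    return {stage: counts.get(stage, 0) for stage in STAGES}


sample = [
    Concept("founder story", "active", "video"),
    Concept("before/after", "in_production", "static"),
    Concept("unboxing", "in_production", "video"),
    Concept("price comparison", "ideation", "static"),
]
print(pipeline_snapshot(sample))
```

An empty "ready_to_launch" bucket, as in this sample, is the kind of gap that is easy to miss until active ads start to fatigue.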

MHI Media's analysis notes that volume is a production systems problem, not a budget problem. The constraint for most brands is the production pipeline, not the money available to test. Batching, templating, and working with a stable roster of UGC creators are what unlock consistent volume at any spend level.

If you want to stay ahead of creative fatigue and keep winners scaling, you need to know at a glance: how many new concepts launched this week, how many are in testing, and how many proven concepts are currently scaling before they burn out.

That kind of visibility doesn't come from manually reviewing Ads Manager. It comes from having creative performance data and production tracking in one place.

Where Segwise fits into this

The reason volume compounds so well is that data from each test informs the next one. But that compounding only happens if you can actually see which elements, hooks, formats, and creative variables drove performance -- across every platform you're running on.

Segwise connects to 15+ ad networks and MMPs (ad networks including Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, and IronSource; MMPs including AppsFlyer, Adjust, Branch, and Singular) and automatically tags every creative element using multimodal AI -- video, audio, image, and text together. When a hook variation outperforms the others, Segwise shows you exactly which element made the difference, so your next 50 concepts start from an informed position instead of a blank slate.

Teams using Segwise save up to 20 hours per week previously spent on manual tagging and data consolidation, and report up to a 50% improvement in ROAS from identifying winning creative patterns early. At $500k/month in spend, that kind of intelligence doesn't just improve performance -- it changes how fast you can learn, iterate, and scale.

Explore how Segwise's creative intelligence platform can help your team build a smarter, faster creative production system.

Conclusion

The right monthly ad production number isn't arbitrary. It comes directly from your spend level, your format mix, and how much of your pipeline is new concepts versus proven iterations.

At any budget level, the floor is consistent testing: new concepts going live every week, hook variations covering each concept, and a clear split between test budget and scaling budget. The specifics change at different spend tiers, but the discipline doesn't.

Most brands producing fewer than 10 concepts a month aren't under-resourced -- they're under-systematized. The framework above doesn't require a massive production team. It requires a filming cadence, a UGC roster, and a clear number to hit each week.

Build the system first. The volume follows.

Frequently Asked Questions

How many ads should a brand spending $10,000/month on Meta be testing?

At $10,000/month, target 4-8 new creative concepts per month. At this budget level, give each concept roughly $100-150 in spend before evaluating. That limits you to 2-4 meaningful concept tests per week. Focus on the widest possible range of angles and hooks before going deep on iterations of any single concept.

What's the difference between a creative "concept" and an "ad"?

A concept is the core creative idea: a specific angle, hook type, or narrative. An ad is a single execution of that concept. One video concept should generate 2-3 hook variations, each as a separate ad. One static concept typically generates 5-8 individual ad variations. When counting your monthly production targets, count concepts, not individual ads.

How do you split budget between testing new creatives and scaling winners?

The standard approach is 70-80% of budget scaling proven winners and 10-20% funding ongoing creative tests. The testing allocation is investment spending, not performance spending -- you shouldn't expect it to match the ROAS of proven ads. Its job is to find the next winner.

How often should you refresh creatives to avoid fatigue?

Fatigue timelines vary by format and audience size. Lo-fi UGC typically burns out in 7-10 days on performance channels. Polished video concepts tend to last longer. The practical rule: track frequency and watch for CTR decline paired with CPM increases. Set automated rules to shift budget away from ads showing those signals and toward fresh assets.
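The "CTR down while CPM up" signal can be expressed as a simple check between two periods. This is a sketch under stated assumptions: the function name is made up, and the 15% CTR drop and 10% CPM rise thresholds are placeholders for illustration, not benchmarks from any platform. Tune them to your own account history.

```python
def shows_fatigue(ctr_prev: float, ctr_now: float,
                  cpm_prev: float, cpm_now: float,
                  ctr_drop: float = 0.15, cpm_rise: float = 0.10) -> bool:
    """Flag an ad when CTR falls and CPM rises past the given thresholds."""
    ctr_change = (ctr_now - ctr_prev) / ctr_prev   # negative means CTR fell
    cpm_change = (cpm_now - cpm_prev) / cpm_prev   # positive means CPM rose
    return ctr_change <= -ctr_drop and cpm_change >= cpm_rise


# CTR fell from 2.0% to 1.5% while CPM rose from $10 to $12: flagged.
print(shows_fatigue(0.020, 0.015, 10.0, 12.0))
# CTR barely moved, so a CPM increase alone does not trigger the flag.
print(shows_fatigue(0.020, 0.019, 10.0, 12.0))
```

Requiring both signals together avoids flagging ads whose CPM rose for auction-wide reasons while engagement held steady.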

How many hook variations should you test per video concept?

Start with 2-3 hook variations per concept. When testing an unproven concept for the first time, one hook is enough -- keep test spend low until the core concept proves out. Once a concept shows promise, add hook variations to find the strongest opening before putting major spend behind it. Most performance gains from video testing come from hooks, not from changing other elements.

How does this framework differ for mobile gaming vs. DTC brands?

The framework is similar. Mobile gaming UA teams running at scale often need substantially higher volume given faster creative fatigue on gaming networks. The Kingshot case study from Q1-Q3 2025 shows 3,000+ creatives per day at peak -- though that's an extreme outlier. Mid-stage gaming studios should use the same budget-anchored framework, adjusting upward for the shorter fatigue cycles typical of gaming networks.

When should you stop testing a concept?

Once a concept has received at least $100-150 in spend per variation without hitting your target CPI or ROAS, retire it. Don't pour more budget hoping the algorithm figures it out. Move to the next concept. The 2% winner rate means most concepts won't make it -- the goal is to test enough that some do.
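That stopping rule can be written as a small decision function. The sketch below uses ROAS as the target metric; the function name and the verdict labels are hypothetical, and the $100 default is the low end of the $100-150 range.

```python
def concept_verdict(spend: float, roas: float, target_roas: float,
                    min_spend: float = 100.0) -> str:
    """Apply the stop rule to one variation's spend and ROAS."""
    if spend < min_spend:
        return "keep testing"   # not enough spend to judge yet
    if roas < target_roas:
        return "retire"         # had its spend and missed the target
    return "hit target"         # candidate for iteration and scaling


print(concept_verdict(spend=60, roas=0.5, target_roas=2.0))
print(concept_verdict(spend=140, roas=1.1, target_roas=2.0))
print(concept_verdict(spend=140, roas=2.6, target_roas=2.0))
```

For app campaigns measured on CPI, the comparison flips (lower is better), so the second check would compare CPI against a maximum rather than ROAS against a minimum.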
