AI UGC vs Human UGC for Meta Ads: Which One Drives ROAS?

AI UGC and human UGC each win on a different ROAS lever in 2026. Humans still convert better in raw head-to-head tests on emotionally driven verticals like skincare, while AI UGC wins on cost-per-test and variants-per-dollar by a 5x to 10x margin. The teams pulling ahead on Meta this year are not picking one or the other. They are running a hybrid stack where AI handles testing volume and humans scale the proven winners. Segwise tags every UGC ad at the element level (AI or human), then generates new AI creatives built around your winning hooks, characters, and CTAs, so teams close the loop from analytics to AI ad production inside one platform.

The honest answer to "AI UGC vs human UGC, which wins on ROAS?" is that they win different fights. A $15K skincare test recently shared by a Meta media buyer in r/FacebookAds captured the split most teams are seeing in 2026: human UGC took the pure ROAS crown, AI UGC took the variants-per-dollar crown. Neither result was a knockout. Both were repeatable.

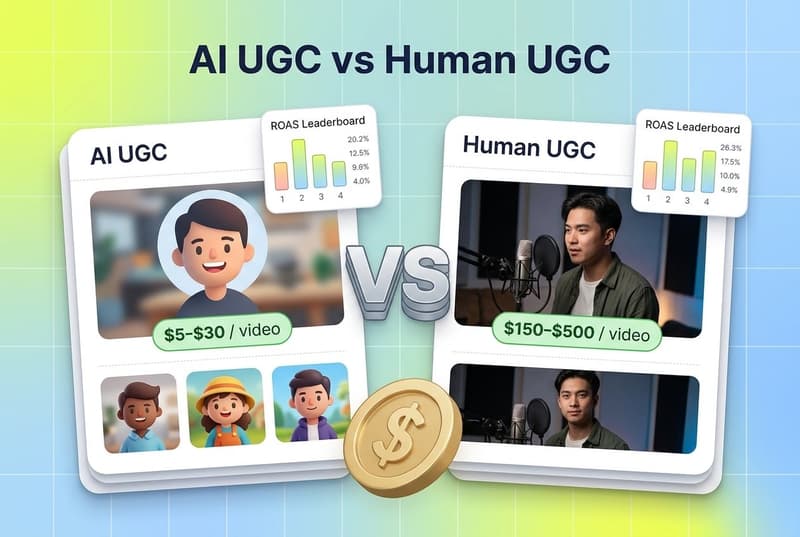

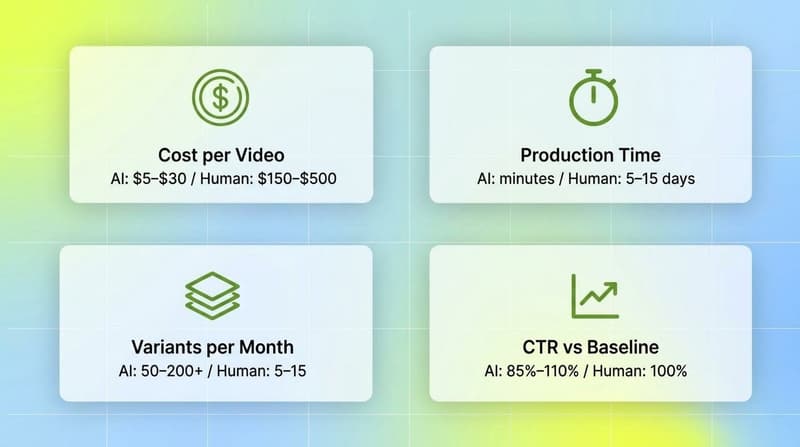

What changed in the last twelve months is the cost gap. AI UGC platforms now produce convincing creator-style videos for roughly $5 to $30 per video, compared to $150 to $500 per video for a human creator and edit pass, according to Hoox's March 2026 cost analysis. For a brand spending $50K a month on Meta and refreshing creatives every two weeks to fight fatigue, that ratio is the difference between testing 5 ideas a month and testing 50.

The performance side is also closer than most marketers think. Field data from media buying teams shows AI UGC videos achieving 85% to 110% of the click-through rate of well-performing traditional UGC, per Hoox. Most viewers cannot tell the two apart in a fast-scrolling Reels feed. The gap shows up in conversion, not attention, and only in specific verticals.

This guide pulls together the $15K test data, head-to-head observations on Argil, Arcads, Creatify, and MakeUGC, the verticals where each format wins, and a hybrid workflow you can run inside your existing Meta stack.

Key Takeaways

AI UGC videos hit 85% to 110% of the CTR of well-performing human UGC in 2026, per field data from Hoox. The gap is in conversion, not attention.

Production cost differs by 5x to 10x. Human UGC: $150 to $500 per video. AI UGC: $5 to $30 per video, per the same Hoox analysis.

A $15K Meta ads test on a skincare brand shared in r/FacebookAds showed human UGC winning pure ROAS while AI UGC won variants-per-dollar, matching the broader pattern in 2026.

80% of brands report human UGC outperforms AI for trust and relatability in emotional or testimonial-heavy verticals, according to PPC.io's 2026 UGC pricing report.

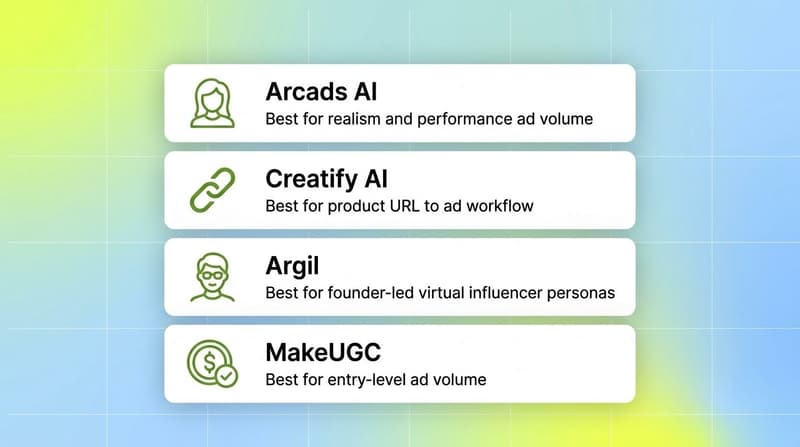

Argil, Arcads, Creatify, and MakeUGC each solve a different bottleneck. Arcads wins on actor realism; Creatify wins on URL-to-ad workflow with built-in analytics; Argil wins on founder-led "virtual influencer" personas; MakeUGC wins on entry pricing.

Meta's AI disclosure rules, in effect across 2026, require labeling for ads with photorealistic AI-generated humans. Hybrid workflows reduce disclosure friction by anchoring the scale phase on a real creator.

The hybrid workflow that beat both pure approaches in 2026: AI UGC for the test phase (20 to 50 variants in 48 hours), human UGC for the scale phase, and element-level tagging to figure out which winning script crosses formats.

Also read: Meta Andromeda Update: The 2026 Creative Strategy Playbook

What "AI UGC" Actually Means in 2026

AI UGC stands for User-Generated Content style ads produced entirely by artificial intelligence, where an AI avatar delivers a script in a format that mimics a real creator filming on their phone. The technology stack rests on three pieces working together: avatar generation, voice synthesis, and automated video composition, per AdStellar's 2026 AI UGC guide.

Modern avatars are not the cartoonish CGI of three years ago. Tools like Arcads license real human actors who provide motion capture and likeness rights, then let the AI re-script their delivery. Voice synthesis now captures filler words, micro-pauses, and conversational tonal variation, so the output sounds like someone talking to their phone, not a corporate voiceover.

The category breaks into two practical formats. The first is "AI creator UGC," where the avatar plays a generic creator persona and rotates across scripts. The second is "AI clone UGC," where you train the AI on a single founder, brand ambassador, or licensed creator and produce a consistent virtual influencer. Argil leans into the second format. Arcads, Creatify, and MakeUGC focus mostly on the first.

The thing that ties both formats together is intentional imperfection. Effective AI UGC keeps the slightly raw, slightly home-shot quality that makes user-generated content feel authentic. Studio-polished AI UGC underperforms because viewers' subconscious flags it as a brand video pretending to be a creator video.

The $15K Meta Ads Test: What the Numbers Actually Show

The pattern we keep seeing across 2026 ad tests on Meta lines up with what one media buyer documented in a recent r/FacebookAds writeup: a skincare brand split a $15K test budget across human UGC and AI UGC creatives running on the same offer, the same audience, and the same landing page. The structure was clean enough to draw conclusions from.

Two findings held up. The first was that human UGC won on pure ROAS. The skincare vertical is one where physical product demonstrations and emotional testimonials matter, which is exactly where Hoox's vertical analysis flags human creators as still holding "a measurable advantage." Touching the product, applying it on real skin, and reacting on camera all read as more credible when a real human does it.

The second finding was that AI UGC won on variants-per-dollar. The team produced roughly 30 AI variants for the cost of 3 human videos, then ran a 48-hour cull and scaled the top 2 hooks. The winning hook from the AI batch was then re-shot by a human creator for the scale phase. That hook beat the original AI version on ROAS once it ran behind a real face.

Neither result is surprising once you map them onto the bigger benchmarks. AI-generated ad creative has been linked to a 47% lift in click-through rate and a 29% drop in cost-per-acquisition versus static brand ads, while AI-powered ad optimization correlates with up to 72% higher ROAS in reported industry data. On the other side, UGC ads in general show 50% lower cost-per-click than traditional polished ads on Meta and a 31% lift in memorability, per the same datasets. The point is that "UGC style" is the lever that matters most. Whether that style is delivered by a person or an avatar is the second-order question.

For practitioners, the takeaway is simple. If your vertical is emotional, demonstration-heavy, or trust-driven (skincare, supplements, fitness, premium beauty), expect a small ROAS premium for human UGC at scale. If your vertical is more transactional, or iteration speed matters more than per-ad conversion strength (DTC ecommerce, SaaS, mobile games, fast fashion), AI UGC closes the gap or wins outright thanks to volume.

AI UGC vs Human UGC: Side-by-Side Comparison

The comparison table below summarizes the operational differences across the two formats based on aggregated 2026 data from Hoox, PPC.io, and AdStellar.

The pattern is consistent. AI wins on speed, cost, and breadth of testing. Humans win on raw conversion strength in emotional verticals and hold up better in the scale phase. For most brands in 2026, the right answer is to use both, in sequence, on the same hook.

Argil vs Arcads vs Creatify vs MakeUGC: Head-to-Head

These four tools dominate the AI UGC conversation among Meta media buyers in 2026. They are not interchangeable. Each one wins on a different problem.

1. Arcads AI, Best for Realism and Performance Ad Volume

Arcads runs on a library of over 1,000 licensed AI actors as of 2026, the largest in the category, per eesel AI's pricing review. The actors are real people who licensed their likeness, which is why the lip-sync and micro-expressions sit closer to "indistinguishable from a creator video" than tools that generate fully synthetic avatars.

Pricing starts at roughly $110 per month for 10 videos (Starter) and $220 per month for 20 videos (Creator), with custom Pro pricing for unlimited generation and API access. That works out to about $11 per video, which is 90%+ cheaper than mid-tier human UGC but the most expensive option in the AI UGC category.
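The per-video figure above is simple division, and the same arithmetic is worth repeating for any quota-based plan you evaluate. The sketch below uses only the plan prices and the $150 to $500 human range quoted in this article; the function names are illustrative, not part of any vendor's tooling.

```python
# Human mid-tier range cited earlier in this article (USD per video).
HUMAN_COST_RANGE = (150.0, 500.0)


def cost_per_video(monthly_price: float, videos_per_month: int) -> float:
    """Effective cost of one video on a fixed-quota monthly plan."""
    if videos_per_month <= 0:
        raise ValueError("videos_per_month must be positive")
    return monthly_price / videos_per_month


def savings_vs_human(ai_cost: float, human_cost: float) -> float:
    """Fraction saved per video relative to a human-produced video."""
    return 1.0 - ai_cost / human_cost


starter = cost_per_video(110, 10)  # Arcads Starter as quoted above
creator = cost_per_video(220, 20)  # Arcads Creator as quoted above
low, high = (savings_vs_human(starter, h) for h in HUMAN_COST_RANGE)
print(f"${starter:.2f} per video, {low:.1%} to {high:.1%} cheaper than human UGC")
```

Both quoted tiers land on the same per-video cost, so on these numbers the higher tier buys volume rather than a discount.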

Arcads is light on built-in editing and has no native analytics. If your team already has a strong Meta and analytics stack, that is fine. If not, you will end up bolting external tools on top.

Use Arcads when: you care more about ad realism than cost, and your bottleneck is generating ad-ready visuals fast for testing and scale.

2. Creatify AI, Best for Product URL to Ad Workflow

Creatify is built around an end-to-end ad workflow rather than a pure video generator. Paste a product URL, the platform scrapes images, writes the script, and renders the video. The Pro tier includes the AdMax automation layer with hook variants, A/B testing, and ROAS dashboards, per EzUGC's Arcads vs Creatify breakdown.

Pricing is the most accessible in the category: a free tier with watermarked credits, Starter at $39 per month (or $33 annually), Pro at $99 per month, and AdMax at $299 per month. The avatar library reaches up to ~1,500 on higher tiers.

The trade-offs are real. Creatify has occasional lip-sync misalignment and the avatars sit a notch below Arcads on realism. The platform makes up for it with batch generation (up to 50 variants on higher tiers) and the only built-in analytics layer of the four.

Use Creatify when: you are running a Shopify or DTC brand and want a single tool that handles ideation, production, batch testing, and ROAS attribution.

3. Argil, Best for Founder-Led Virtual Influencer Personas

Argil is the odd one out. Where Arcads and Creatify focus on rotating AI actors, Argil is built for cloning a single person into a consistent virtual influencer. Upload a two-minute video of yourself or your founder, and Argil produces a clone that delivers any script, with auto-captions, B-roll suggestions, and A/B testing built in.

Pricing starts at $27 per month for the Creator plan and tops out around $104 per month for Pro, per Argil's own comparison post. The value is heavily front-loaded on the workflow layer (script writing, captions, B-roll) rather than the avatar library.

The catch is that one cloned face will not solve a 30-variant test. Argil shines when the brand strategy depends on a recognizable persona that is the same person across every ad.

Use Argil when: you are a founder, expert, or thought-leader brand where consistent face equity matters more than rotating creators.

4. MakeUGC, Best for Entry-Level Ad Volume

MakeUGC sits in the budget-conscious tier alongside Creatify's Starter plan. The platform offers AI actors, a script-to-video pipeline, and aspect-ratio export for Meta, TikTok, and YouTube placements. Pricing is similar to Creatify Starter, making it a natural test entry point for solo founders and small teams.

The trade-off is depth. MakeUGC has fewer actors than Arcads and lighter analytics than Creatify. It is good for "is this format working for my brand?" type tests and less suited to scaled, multi-script, multi-language campaigns.

Use MakeUGC when: you are testing the AI UGC format for the first time and want a low-commitment way to generate 10 to 20 variants without committing to a $100+ per month plan.

Where Human UGC Still Wins

The 2026 data is clear that human UGC keeps a structural advantage in three situations.

The first is emotionally charged testimonials. A real customer recounting weight loss, skin healing, or a life-change moment creates a connection that AI does not yet replicate cleanly. Skincare, supplements, mental health, and fitness verticals lean heavily on this format and pay a real ROAS price for switching to AI-only.

The second is physical product demonstrations. Unboxings, applying cosmetics on real skin, trying on apparel, food prep. Viewers want to see the real product behave in a real context, and AI avatars in 2026 still struggle with hand-product interaction realism.

The third is community-driven niches. Gaming, fitness, niche fashion, and creator-economy categories where the audience knows the creators by name. A familiar face holds an authority that an AI avatar simply cannot inherit.

There is also a regulatory layer. Meta's AI disclosure rules, in effect through 2026, require advertisers to label ads featuring photorealistic AI-generated humans. The label appears next to the "Sponsored" tag for high-stakes content. Human UGC sidesteps this entirely. AI UGC is not penalized in delivery, but the disclosure can shave a percentage point off click-through in trust-sensitive categories.

The Hybrid Workflow That Outperformed Both Pure Approaches

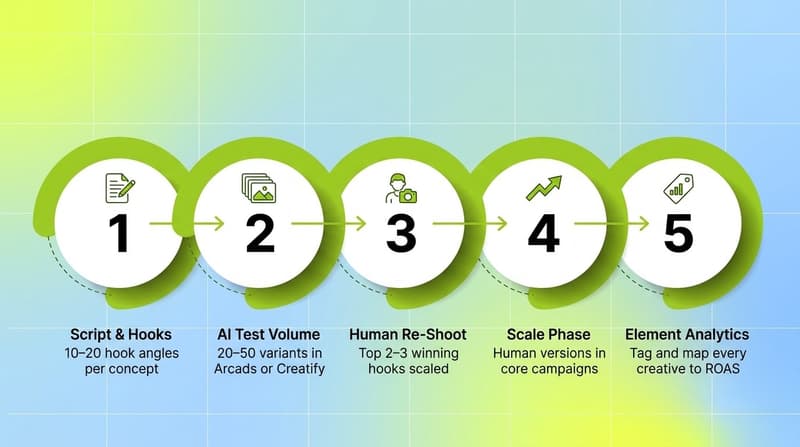

The accounts winning on Meta in 2026 are not picking AI or human. They are running a hybrid loop where each format does what it is good at. The pattern below comes from aggregated practitioner workflows across the Hoox, AdStellar, and PPC.io writeups.

Phase 1: Script and Angle Generation

Brainstorm 10 to 20 hook angles per concept. Use the proven UGC structure: 2-second hook, 3- to 5-second viewer identification, 10- to 15-second solution presentation, 5- to 10-second proof, clear CTA. Hooks drive 80% of UGC video performance, so this is where the time goes.
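One way to keep drafts honest against that structure is to encode the segment windows and check each script's timing before it goes to production. This is a minimal sketch: the segment lengths are the ones listed above, the CTA is left out because no length is given for it, and the `Segment` class and helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    min_seconds: int
    max_seconds: int


# Windows taken from the structure described above; the CTA has no stated length.
UGC_STRUCTURE = [
    Segment("hook", 2, 2),
    Segment("viewer identification", 3, 5),
    Segment("solution presentation", 10, 15),
    Segment("proof", 5, 10),
]


def runtime_range(segments=UGC_STRUCTURE):
    """Shortest and longest total runtime, excluding the CTA."""
    return (
        sum(s.min_seconds for s in segments),
        sum(s.max_seconds for s in segments),
    )


def out_of_window(draft_seconds, segments=UGC_STRUCTURE):
    """Names of segments whose drafted length falls outside its window."""
    return [
        s.name
        for s in segments
        if not s.min_seconds <= draft_seconds.get(s.name, 0) <= s.max_seconds
    ]


draft = {
    "hook": 2,
    "viewer identification": 4,
    "solution presentation": 18,  # runs long
    "proof": 6,
}
print(runtime_range())       # total seconds before the CTA
print(out_of_window(draft))  # segments to tighten
```

On these windows the body runs 20 to 32 seconds before the CTA, which is a useful sanity check when a script draft comes back at 45.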

Phase 2: AI UGC Test Volume

Generate 20 to 50 AI UGC variants in Arcads or Creatify. Same body, same CTA, rotating hooks and avatars. Launch into a structured Meta test (new vs. new at $50 to $100 per ad set per day for 48 to 72 hours). Cull losers fast.
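Before launching, it helps to know what the test phase will cost in media spend. The sketch below multiplies out the ranges above under one assumption that is not stated in the workflow: each variant runs in its own ad set. If you group several variants per ad set, pass `variants_per_ad_set` accordingly. The function name is illustrative.

```python
import math


def estimate_test_spend(variants, daily_budget_per_ad_set, days, variants_per_ad_set=1):
    """Media spend for a structured test.

    Assumes one variant per ad set unless variants_per_ad_set says otherwise.
    """
    if variants_per_ad_set < 1:
        raise ValueError("variants_per_ad_set must be at least 1")
    ad_sets = math.ceil(variants / variants_per_ad_set)
    return ad_sets * daily_budget_per_ad_set * days


low = estimate_test_spend(20, 50, 2)    # smallest test in the ranges above
high = estimate_test_spend(50, 100, 3)  # largest test in the ranges above
print(f"Media spend for the test phase: ${low:,} to ${high:,}")
```

Under the one-variant-per-ad-set assumption the range works out to $2,000 to $15,000, so the variant count and the daily budget are the two levers to pull if the top end is out of reach.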

Phase 3: Human UGC for the Winners

Take the top 2 to 3 hooks from the AI batch. Brief a human creator to film the same script. The handoff is the cheap part of the workflow because the creative direction is already proven.
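Picking the top hooks can be sketched as a small ranking step over the test results. The example below pools spend and revenue per hook and ranks by ROAS; the minimum-spend guard is an added assumption (it keeps a hook with very little delivery from winning on noise), and the field names and sample rows are made up for illustration.

```python
from collections import defaultdict


def top_hooks(results, top_n=3, min_spend=100.0):
    """Rank hooks by pooled ROAS, ignoring hooks with too little spend."""
    spend = defaultdict(float)
    revenue = defaultdict(float)
    for row in results:
        spend[row["hook"]] += row["spend"]
        revenue[row["hook"]] += row["revenue"]
    eligible = {
        hook: revenue[hook] / spend[hook]
        for hook in spend
        if spend[hook] >= min_spend
    }
    return sorted(eligible, key=eligible.get, reverse=True)[:top_n]


# Hypothetical test results, several avatars per hook.
results = [
    {"hook": "A", "spend": 100.0, "revenue": 320.0},
    {"hook": "A", "spend": 50.0, "revenue": 130.0},
    {"hook": "B", "spend": 150.0, "revenue": 300.0},
    {"hook": "C", "spend": 40.0, "revenue": 400.0},  # under the spend guard
    {"hook": "D", "spend": 150.0, "revenue": 375.0},
]
print(top_hooks(results, top_n=2))
```

Hook C has the highest raw ROAS here but is excluded because it never reached the spend floor, which is the kind of result a human creator brief should not be built on.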

Phase 4: Scale Phase

Run human UGC versions of the proven hooks in your scale campaigns. Keep the AI UGC variants in test campaigns for ongoing refresh. Refresh creatives every two weeks to combat fatigue.

Phase 5: Element-Level Analytics

Tag every creative (AI and human) at the element level: hook style, character, CTA, on-screen text, audio tone, emotion. Map each tag to ROAS, CTR, and CPI. This is where most teams break down, because doing this manually across 50 ads per week is unsustainable.
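The tag-to-metric mapping itself is a grouped aggregation. The sketch below is a hand-rolled illustration, not how any particular platform implements it: the record layout, tag names, and numbers are all hypothetical. It pools raw totals per tag before dividing, so a high-spend ad counts for more than a low-spend one.

```python
from collections import defaultdict


def metrics_by_tag(creatives):
    """Pool spend, revenue, and delivery per (element, value) tag, then derive metrics."""
    totals = defaultdict(
        lambda: {"spend": 0.0, "revenue": 0.0, "impressions": 0, "clicks": 0, "installs": 0}
    )
    for creative in creatives:
        for element, value in creative["tags"].items():
            bucket = totals[(element, value)]
            for key in bucket:
                bucket[key] += creative[key]

    report = {}
    for tag, t in totals.items():
        report[tag] = {
            "roas": t["revenue"] / t["spend"] if t["spend"] else None,
            "ctr": t["clicks"] / t["impressions"] if t["impressions"] else None,
            "cpi": t["spend"] / t["installs"] if t["installs"] else None,
        }
    return report


# Hypothetical ads; tags and numbers are illustrative only.
ads = [
    {"tags": {"hook": "question", "format": "ai"},
     "spend": 100.0, "revenue": 250.0, "impressions": 10000, "clicks": 200, "installs": 20},
    {"tags": {"hook": "question", "format": "human"},
     "spend": 300.0, "revenue": 950.0, "impressions": 30000, "clicks": 500, "installs": 60},
    {"tags": {"hook": "bold claim", "format": "ai"},
     "spend": 100.0, "revenue": 150.0, "impressions": 10000, "clicks": 150, "installs": 10},
]
for tag, metrics in metrics_by_tag(ads).items():
    print(tag, metrics)
```

Pooling totals rather than averaging per-ad ratios is a deliberate choice here, since averaging ROAS across ads with very different spend tends to overstate small winners.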

How Segwise Closes the Loop on Hybrid UGC Workflows

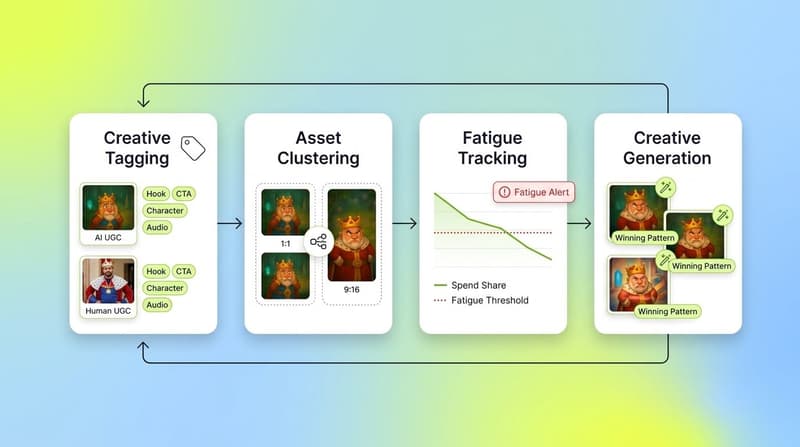

The hybrid workflow above breaks at Phase 5 for most teams. Once you are running 50+ creatives per week across AI and human UGC, manually tagging which hook drove which ROAS becomes the bottleneck that kills the whole loop. Segwise is built specifically to run that loop end-to-end. It is the only platform in the AI UGC conversation that combines element-level analytics, fatigue tracking, and data-backed AI creative generation in a single stack.

Segwise's Creative Tagging Agent uses multimodal AI to analyze video, audio, image, and text together. Every UGC ad, AI or human, gets tagged automatically across the elements that matter: hooks, CTAs, characters, visual styles, emotions, and audio components. Tag-to-metric mapping connects each element back to ROAS, CTR, CPI, and any custom event you care about.

The platform connects to Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, and IronSource on the ad network side, and AppsFlyer, Adjust, Branch, and Singular on the MMP side, with no-code setup in under five minutes. That means the hybrid AI-plus-human workflow runs through a single source of truth across every platform.

Asset Clustering is the piece most relevant to AI vs. human comparisons. The Creative Strategy Agent groups creatives that share underlying assets (same script, same hook, same offer) into clusters. Inside a cluster, you can isolate the variable that mattered: was the AI version vs. human version the lift driver, or was it the hook? That is the question every hybrid workflow has to answer to scale intelligently, and answering it without element-level data is mostly guessing.
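The within-cluster comparison can be illustrated with a few lines of grouping logic. This is a sketch of the idea only, not Segwise's implementation: it assumes each creative carries a `script_id`, a `hook`, and a `format` label, all of which are hypothetical field names, and the numbers are invented.

```python
from collections import defaultdict


def roas_by_cluster_and_format(creatives):
    """Group creatives sharing a script and hook, then compare ROAS by format."""
    # cluster -> format -> [spend, revenue]
    sums = defaultdict(lambda: defaultdict(lambda: [0.0, 0.0]))
    for c in creatives:
        cluster = (c["script_id"], c["hook"])
        pair = sums[cluster][c["format"]]
        pair[0] += c["spend"]
        pair[1] += c["revenue"]
    return {
        cluster: {
            fmt: revenue / spend
            for fmt, (spend, revenue) in formats.items()
            if spend > 0
        }
        for cluster, formats in sums.items()
    }


# Hypothetical data: two hooks, each shot as AI and as human UGC.
creatives = [
    {"script_id": "s1", "hook": "question", "format": "ai", "spend": 200.0, "revenue": 500.0},
    {"script_id": "s1", "hook": "question", "format": "human", "spend": 400.0, "revenue": 1200.0},
    {"script_id": "s1", "hook": "bold claim", "format": "ai", "spend": 200.0, "revenue": 300.0},
    {"script_id": "s1", "hook": "bold claim", "format": "human", "spend": 400.0, "revenue": 640.0},
]
for cluster, by_format in roas_by_cluster_and_format(creatives).items():
    print(cluster, by_format)
```

In this made-up data the human version edges out the AI version inside each cluster, but the gap between hooks is much larger than the gap between formats, which would point to the hook as the main lift driver.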

Fatigue tracking is the third leg. AI UGC variants tend to fatigue faster because of volume and lower novelty per asset. Native fatigue detection catches the decline before spend bleeds out, with custom thresholds (e.g., 20% ROAS decline over 7 days) and alerts to Slack or email.
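A threshold like the one in that example can be sketched as a comparison of two rolling windows. The code below reads "20% ROAS decline over 7 days" as "pooled ROAS in the latest 7 days is at least 20% below the 7 days before it", which is one possible interpretation rather than a documented definition; the function names and sample data are hypothetical.

```python
def pooled_roas(window):
    """ROAS over a list of (spend, revenue) daily pairs."""
    spend = sum(s for s, _ in window)
    revenue = sum(r for _, r in window)
    return revenue / spend if spend else 0.0


def is_fatigued(daily, window_days=7, decline_threshold=0.20):
    """True when the latest window's ROAS fell by the threshold versus the prior window."""
    if len(daily) < 2 * window_days:
        return False  # not enough history to compare two windows
    previous = pooled_roas(daily[-2 * window_days:-window_days])
    recent = pooled_roas(daily[-window_days:])
    if previous <= 0:
        return False
    return (previous - recent) / previous >= decline_threshold


# Hypothetical creative: ROAS 3.0 for a week, then 2.3 for a week.
history = [(100.0, 300.0)] * 7 + [(100.0, 230.0)] * 7
print(is_fatigued(history))
```

Comparing window against window rather than day against day smooths over single-day dips, at the cost of reacting a few days later.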

Creative generation is where Segwise diverges from Arcads, Creatify, MakeUGC, and Argil. Those tools generate UGC from a prompt, a script, or a product URL. Segwise generates from your winning creative patterns. The Creative Generation Agent identifies your top-performing tags (a specific hook style, a winning CTA, a visual emotion that drove ROAS) and generates new creatives built around those exact elements, directly inside the platform. You can edit them by prompting and export in any aspect ratio (1:1, 4:5, 9:16, 16:9) ready to upload to Meta, TikTok, Google, or Snapchat. Static creative generation is fully live; video generation is in beta. The result is a closed loop: tag every ad, find the winning elements, generate the next round from those elements, and ship them across networks without leaving the platform.

Segwise customers report up to 20 hours saved per week, per app or brand, by eliminating manual tagging and data consolidation; a 50% ROAS improvement by identifying winning creative patterns and catching fatigue early; and halved creative production time through AI-powered creative generation that uses performance data instead of prompt guesswork.

What's the Answer in 2026?

AI UGC and human UGC are not in a winner-take-all fight. They each win different rounds. Humans hold the conversion edge in emotional and demonstration-heavy verticals. AI holds the cost-and-volume edge for testing breadth. The hybrid workflow, AI for testing volume and humans for scaling proven winners, beats either pure approach on aggregate ROAS in 2026 because it lets each format do what it is structurally good at.

The teams that get this right are not just running parallel campaigns. They are tagging every creative at the element level, isolating which hook crossed both formats, and feeding that signal back into their next round of AI generation and human briefs. Without that tagging layer, the hybrid loop is just two separate workflows running in parallel, and you are leaving most of the compounding advantage on the table.

Frequently Asked Questions

Does AI UGC outperform human UGC for Meta ads in 2026?

Not on raw ROAS in most emotional or demonstration-heavy verticals like skincare, supplements, and fitness. AI UGC achieves 85% to 110% of the CTR of well-performing human UGC, per Hoox 2026 data, but conversion rate still favors humans where physical product interaction or emotional testimony matters. AI UGC wins on cost-per-test and variants-per-dollar by a 5x to 10x margin, which is why hybrid workflows beat either pure approach. Tools like Segwise and Triple Whale help teams measure which format is actually driving ROAS in their specific account.

What's the best AI UGC tool for scaling Meta ads testing?

It depends on your bottleneck. Arcads AI wins on actor realism and the largest licensed actor library (1,000+ avatars), making it the strongest pure-visual engine. Creatify AI wins on workflow depth with built-in AdMax analytics and a product-URL-to-ad pipeline, making it the better all-in-one performance stack. Argil wins for founder-led virtual influencer personas where one consistent face matters. MakeUGC fits as a budget-conscious entry point for first-time AI UGC tests. Segwise plays a different game: it tags the resulting creatives at the element level, ties tags back to ROAS, and then generates new AI creatives directly from your winning patterns instead of from a generic prompt. Static creative generation is live; video generation is in beta. That makes Segwise the only tool in the AI UGC conversation that closes the analytics-to-production loop in one platform.

How much does AI UGC cost compared to human UGC creators?

AI UGC costs $5 to $30 per video on platforms like Arcads, Creatify, MakeUGC, and Argil. Human UGC creators charge $150 to $500 per video for mid-tier deliverables, with premium creators commanding $800 to $2,000+ per asset, per PPC.io's 2026 pricing report. Usage rights, whitelisting, and rush delivery add another 25% to 150% on top of human UGC base rates. The 5x to 10x cost advantage is what makes AI UGC structurally better for testing and human UGC structurally better for scaled spend on proven winners.

What does Meta's AI disclosure policy mean for UGC ads in 2026?

Meta requires advertisers to label ads created or heavily modified using generative AI tools, with stricter requirements for ads featuring photorealistic AI-generated humans, per Meta's transparency center. The "AI info" label appears next to the "Sponsored" tag for lifelike AI humans and in the three-dot menu for less prominent AI use. Minor edits like cropping or color adjustments are exempt. The label has not been shown to materially hurt delivery, but it can shave click-through in trust-sensitive categories. Tools like Segwise and CreativeOS can help track which disclosed AI variants outperform and inform when to switch to human UGC for scale.

How do I structure a hybrid AI plus human UGC workflow?

Run a five-phase loop. Phase 1: brainstorm 10 to 20 hook angles per concept. Phase 2: generate 20 to 50 AI UGC variants in Arcads or Creatify and run a 48- to 72-hour structured Meta test. Phase 3: take the top 2 to 3 hooks from the AI batch and brief a human creator to re-shoot them. Phase 4: run the human versions in your scale campaigns and keep AI variants in test campaigns for ongoing refresh. Phase 5: tag every creative at the element level (hook, CTA, character, visual style, emotion, audio) and map each tag to ROAS using a tool like Segwise or Triple Whale. The loop only compounds if Phase 5 is automated.

Which UGC type wins on ROAS for skincare and beauty brands?

Human UGC has held the ROAS edge in skincare and beauty through 2026 because the vertical relies on physical product application, skin texture demonstration, and before-and-after social proof, which AI avatars still struggle to replicate cleanly. The $15K skincare test recently shared in r/FacebookAds lines up with Hoox's vertical analysis: humans win pure ROAS, AI wins variants-per-dollar. The hybrid workflow (AI for hook testing, human for the scale shoot) beats either pure approach. Tools like Segwise, EzUGC, and Arcads are commonly used in beauty hybrid stacks to tag creatives, generate test variants, and isolate which hook is the actual driver.

Does Segwise generate AI UGC ads, or does it only do analytics?

Segwise does both. The Creative Generation Agent generates new AI creatives directly from your winning creative patterns (top-performing hooks, CTAs, emotions, visual styles), not from a generic prompt the way Arcads, Creatify, or MakeUGC do. Static creative generation is fully live and exports in any aspect ratio (1:1, 4:5, 9:16, 16:9) ready to upload to Meta, TikTok, Google, or Snapchat. Video generation is in beta. The differentiator is that Segwise grounds generation in your actual performance data, so each new creative is a data-backed iteration on something that already worked. Segwise customers cite halved creative production time and 20+ hours saved per week as a result.

Can Meta tell if a UGC ad was made with AI?

Meta uses a combination of metadata signals (industry-standard "AI generated" markers like C2PA), self-disclosure during ad upload, and internal classifiers to detect AI-generated content. For ads that pass through Meta's own creative AI tools, detection is automatic. For third-party AI UGC tools like Arcads, Creatify, MakeUGC, or Argil, advertisers are required to self-disclose, and Meta increasingly applies its own classifiers to verify. The label is not punitive, but undisclosed AI-generated humans risk policy violations. Hybrid workflows reduce disclosure friction by running the scale phase on real human creators while keeping AI in the test phase. Segwise and other creative analytics platforms help track disclosure status alongside performance.
