Are AI tools the key to understanding what makes your ads work?

AI ad performance analysis goes beyond surface metrics like CTR and ROAS to reveal which specific creative elements, from hooks and visuals to CTAs and pacing, actually drive results. For UA managers and creative strategists running hundreds of ads across Meta, Google, and TikTok, that shift from tracking what happened to understanding why it happened is the difference between scaling profitably and burning budget on guesswork. Platforms like Segwise automate creative-level analysis with multimodal AI tagging across 15+ ad networks, turning raw performance data into actionable creative intelligence.

Your ad dashboards are full of data: CPMs, CTRs, conversion rates, and ROAS numbers for every campaign and ad set. But ask a simple question, such as "Why did this ad outperform every other creative we tested last month?", and the dashboards go quiet.

That gap between what happened and why it happened is where most performance marketing teams lose money. They can see that Ad Creative #47 drove a 3.2x ROAS while #48 barely broke even. They just cannot figure out which creative elements caused the difference. Was it the hook? The pacing? The CTA placement? The background music? At scale, with hundreds of creatives running across Meta, Google, TikTok, and AppLovin simultaneously, human analysis hits a wall.

This is not a minor inconvenience. Creative is now responsible for roughly 70% of campaign performance outcomes, according to research from Nielsen. It is the single largest lever advertisers can pull. Yet most teams still analyze creative performance using the same spreadsheet workflows they used five years ago: manual tagging, gut instinct, and the occasional retrospective that arrives too late to influence the next creative cycle.

AI tools built specifically for ad performance analysis are changing this. They break down every creative into its component elements, map those elements to performance outcomes, and surface patterns that would take a human analyst weeks to identify. The result is not just faster reporting. It is a fundamentally different way of building creative strategy, one grounded in evidence rather than intuition.

According to Forrester's Q1 2026 AI Advertising Impact Report, 63% of enterprise brands using AI-generated creative analysis reported ROAS improvements between 45% and 58%. And a Gartner CMO survey found that organizations with mature AI creative integration reported ROI lifts averaging 31.4%.

The question is no longer whether AI tools improve ad performance analysis. It is whether your team can afford to keep analyzing creative without them.


Key takeaways

Creative drives roughly 70% of campaign performance outcomes, yet most teams lack the tools to analyze creative at the element level, leaving the biggest performance lever under-optimized.

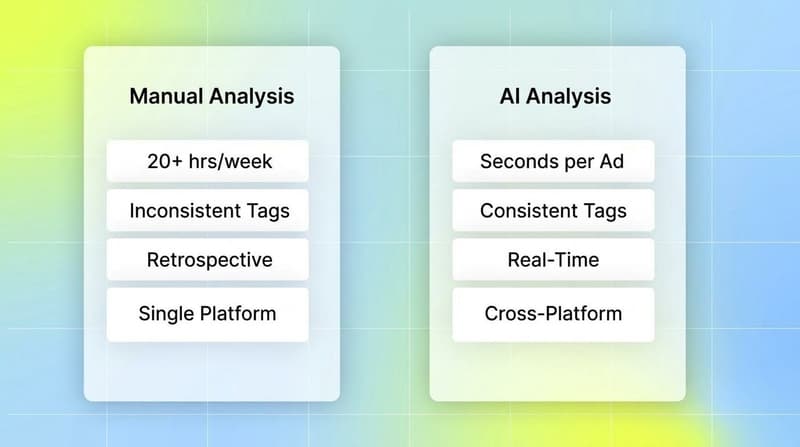

AI-powered creative tagging automates what used to take 20+ hours per week of manual work, analyzing hooks, visuals, CTAs, pacing, and audio across video and static ads.

Teams using AI-driven creative analysis report 20-30% higher marketing ROI compared to teams relying on manual methods, with some seeing ROAS lifts above 50%.

Creative fatigue detection powered by AI catches performance declines 2-3 weeks earlier than manual monitoring, preventing wasted spend before it accumulates.

AI multivariate testing reaches statistically significant results in 4.2 days on average compared to 21.6 days for traditional A/B testing, an 80% reduction in time-to-insight.

The best results come from human-AI collaboration: campaigns using a hybrid approach outperform fully automated campaigns by 41.3% in brand equity and fully human campaigns by 29.7% in conversion metrics, per Deloitte Digital.

The real problem: you know what happened, but not why

Every ad platform gives you metrics. Meta tells you your cost per result. Google shows your conversion rate. TikTok surfaces your video view rates. But none of them answer the question that actually matters for creative strategy: which specific elements within a creative drove that performance?

This is the gap that keeps creative teams operating on intuition rather than evidence. A UA manager might notice that video ads with user-generated content (UGC) styling tend to perform better. But "UGC styling" is too broad to be actionable. Was it the handheld camera angle? The conversational hook? The specific CTA format? Without element-level analysis, every creative brief becomes a bet.

Why manual analysis breaks down at scale

Manual creative tagging is where most teams try to bridge this gap, and where most teams fail. The process typically looks like this: someone on the team watches each ad, records attributes in a spreadsheet (hook type, visual style, CTA format, talent presence), then cross-references those attributes against performance data.

This breaks down for three reasons. First, it is slow. Teams running 50-100+ active creatives across multiple platforms can easily spend 20+ hours per week just on tagging. Second, it is inconsistent. Two people will tag the same creative differently, making pattern detection unreliable. Third, it is retrospective. By the time the analysis is complete, the creative cycle has moved on.
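The inconsistency problem can be put into numbers. One standard measure, which the article itself does not prescribe, is Cohen's kappa: the share of creatives two taggers labeled identically, corrected for the agreement you would expect by chance alone. The sketch below assumes each person assigns exactly one hook type per ad; the tag values are hypothetical.

```python
from collections import Counter


def cohens_kappa(tags_a: list[str], tags_b: list[str]) -> float:
    """Chance-corrected agreement between two people tagging the same creatives."""
    if len(tags_a) != len(tags_b) or not tags_a:
        raise ValueError("both taggers must label the same non-empty list of creatives")
    n = len(tags_a)
    # Share of creatives where both taggers chose the same label
    observed = sum(a == b for a, b in zip(tags_a, tags_b)) / n
    # Agreement expected by chance, given how often each tagger uses each label
    freq_a, freq_b = Counter(tags_a), Counter(tags_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both taggers used one identical label for everything
    return (observed - expected) / (1 - expected)


# Hypothetical hook-type tags from two team members for the same six ads
tagger_1 = ["question", "question", "problem", "benefit", "benefit", "problem"]
tagger_2 = ["question", "problem", "problem", "benefit", "question", "problem"]
print(round(cohens_kappa(tagger_1, tagger_2), 2))  # 0.5
```

Here the two taggers matched on 4 of 6 ads, yet kappa is only 0.5 because part of that agreement would happen by chance. A low kappa on a sample of ads is a warning that any attribute-to-performance comparison built on those tags will be noisy.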

The IAB found that 64% of advertisers now cite cost efficiency as the top benefit of AI in advertising, a sharp jump from fifth place in 2024. That shift reflects a growing recognition that manual workflows are not just slow; they are actively holding back creative performance.

The creative fatigue blind spot

Creative fatigue is the other problem that manual analysis struggles to catch. When a creative starts declining, the performance drop is gradual: CTR dips slightly, then CPMs creep up as the platform starts showing the ad to less optimal audiences. By the time a human analyst flags it, weeks of budget may have already been wasted.

Singular's research on creative fatigue shows that when engagement declines on platforms like Meta, the algorithm responds by showing your ad to less optimal audience segments, which drives up CPM. Rising CPMs paired with declining CTR confirm that fatigue is setting in. But detecting this pattern manually across hundreds of creatives running on multiple platforms simultaneously is nearly impossible.
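The CTR-plus-CPM pattern Singular describes can be written as a simple rule. This is a minimal sketch, assuming daily per-ad stats are available and that the ad's first week is a fair baseline; the 15% thresholds and the window lengths are hypothetical, not values from Singular or any ad platform. Ratios are pooled (total clicks over total impressions) rather than averaged day by day, so low-volume days do not distort the result.

```python
from dataclasses import dataclass


@dataclass
class DailyStats:
    impressions: int
    clicks: int
    spend: float


def pooled_ctr(days: list[DailyStats]) -> float:
    return sum(d.clicks for d in days) / sum(d.impressions for d in days)


def pooled_cpm(days: list[DailyStats]) -> float:
    return 1000 * sum(d.spend for d in days) / sum(d.impressions for d in days)


def fatigue_signal(history: list[DailyStats], baseline_days: int = 7, recent_days: int = 3,
                   ctr_drop: float = 0.15, cpm_rise: float = 0.15) -> bool:
    """Flag fatigue when recent CTR has fallen AND recent CPM has risen versus the early baseline.

    Thresholds are illustrative assumptions, not platform or vendor defaults.
    """
    if len(history) < baseline_days + recent_days:
        return False  # not enough data to compare the two windows
    baseline, recent = history[:baseline_days], history[-recent_days:]
    ctr_change = pooled_ctr(recent) / pooled_ctr(baseline) - 1
    cpm_change = pooled_cpm(recent) / pooled_cpm(baseline) - 1
    return ctr_change <= -ctr_drop and cpm_change >= cpm_rise


healthy = [DailyStats(impressions=10_000, clicks=200, spend=50.0)] * 7  # CTR 2.0%, CPM $5.00
tired = [DailyStats(impressions=10_000, clicks=150, spend=65.0)] * 3    # CTR 1.5%, CPM $6.50
print(fatigue_signal(healthy + tired))    # True
print(fatigue_signal(healthy + healthy))  # False
```

Requiring both signals at once means a CPM increase with steady CTR, for example from auction-wide competition, is not read as fatigue on its own.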

AI tools that monitor performance patterns continuously can flag fatigue signals 2-3 weeks earlier than manual checks, giving teams time to rotate creatives before the budget damage accumulates.

How AI tools actually analyze ad performance

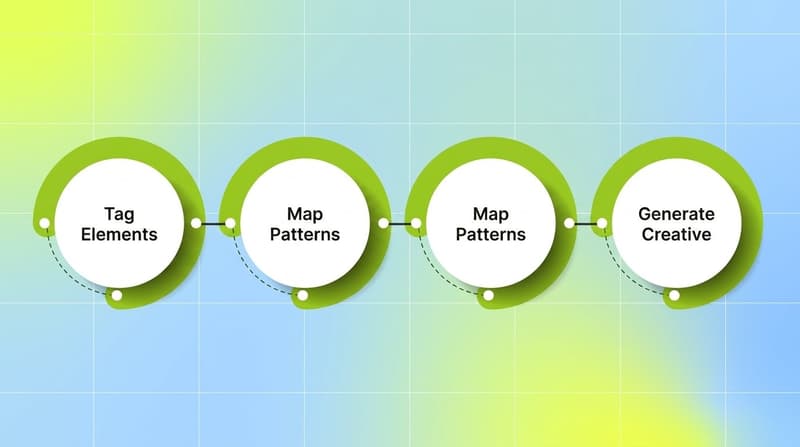

AI ad performance analysis is not a single capability. It is a stack of interconnected functions that work together to move teams from "what happened" to "why it happened" and eventually to "what should we do next."

Creative-level element tagging

The foundation of AI-powered ad analysis is automated creative tagging. Rather than relying on humans to watch and categorize each ad, multimodal AI analyzes every component of a creative simultaneously: the visual composition, on-screen text, spoken dialogue, background music, pacing, transitions, hook style, CTA format, talent characteristics, and emotional tone.

This analysis happens automatically at scale. What used to take a team member 15-20 minutes per creative happens in seconds. More importantly, the tagging is consistent. The AI applies the same framework to every creative, eliminating the subjective variation that makes manual tagging unreliable.
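Consistency comes less from who or what produces the labels and more from forcing every label into one fixed vocabulary. A minimal sketch, assuming a hypothetical three-dimension taxonomy (the dimension and value names are illustrative, not Segwise's schema): raw labels from a person or a model are normalized, and anything outside the taxonomy is rejected instead of silently becoming a new category.

```python
# Hypothetical taxonomy: every dimension has a fixed, closed set of allowed values.
TAXONOMY: dict[str, set[str]] = {
    "hook_style": {"question", "problem_statement", "benefit_led", "ugc_testimonial"},
    "cta_format": {"text_overlay", "spoken", "end_card"},
    "pacing": {"fast", "medium", "slow"},
}


def normalize_label(value: object) -> str:
    """Collapse casing, spaces, and hyphens so 'Benefit-led' and 'benefit led' match."""
    return str(value).strip().lower().replace("-", "_").replace(" ", "_")


def normalize_tags(creative_id: str, raw: dict[str, object]) -> dict[str, str]:
    """Map raw labels onto the shared taxonomy, or fail loudly."""
    clean: dict[str, str] = {}
    for dimension, allowed in TAXONOMY.items():
        if dimension not in raw:
            raise ValueError(f"{creative_id}: missing tag for {dimension!r}")
        value = normalize_label(raw[dimension])
        if value not in allowed:
            raise ValueError(f"{creative_id}: {dimension}={raw[dimension]!r} is not in the taxonomy")
        clean[dimension] = value
    return clean


print(normalize_tags("ad_47", {"hook_style": "Benefit-led", "cta_format": "Text Overlay", "pacing": "Fast"}))
# {'hook_style': 'benefit_led', 'cta_format': 'text_overlay', 'pacing': 'fast'}
```

Brand-specific or campaign-specific attributes can be supported by adding entries to `TAXONOMY`; the rejection step is what keeps a stray label like "benefits" from quietly becoming its own category.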

The real power shows up when these tags are mapped to performance metrics. When you can see that creatives featuring a specific hook style (say, question-based openings) paired with a particular CTA format (text overlay vs. spoken CTA) deliver 40% higher conversion rates, you are no longer guessing about what to test next. You are building creative briefs from evidence.
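Mapping tags to outcomes can be as simple as pooling clicks and conversions for every creative that shares a hook-and-CTA combination, then checking whether the gap is larger than chance would produce. This sketch uses a standard two-proportion z-test; the rows are hypothetical numbers chosen to produce a 40% lift like the one described above, and the article does not say which statistical test any particular tool uses.

```python
import math
from collections import defaultdict


def conversion_by_combo(rows: list[dict]) -> dict[tuple[str, str], tuple[int, int]]:
    """Pool clicks and conversions per (hook, cta) pair across every creative with that pair."""
    totals: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])
    for row in rows:
        key = (row["hook"], row["cta"])
        totals[key][0] += row["clicks"]
        totals[key][1] += row["conversions"]
    return {key: (clicks, conv) for key, (clicks, conv) in totals.items()}


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    return z, math.erfc(abs(z) / math.sqrt(2))


# Hypothetical per-creative results
rows = [
    {"hook": "question", "cta": "text_overlay", "clicks": 2500, "conversions": 90},
    {"hook": "question", "cta": "text_overlay", "clicks": 1500, "conversions": 50},
    {"hook": "question", "cta": "spoken", "clicks": 1000, "conversions": 20},
    {"hook": "question", "cta": "spoken", "clicks": 2000, "conversions": 55},
]
combos = conversion_by_combo(rows)
n_a, c_a = combos[("question", "text_overlay")]  # 4000 clicks, 140 conversions (3.5%)
n_b, c_b = combos[("question", "spoken")]        # 3000 clicks, 75 conversions (2.5%)
lift = (c_a / n_a) / (c_b / n_b) - 1
z, p = two_proportion_z_test(c_a, n_a, c_b, n_b)
print(f"lift={lift:.0%} z={z:.2f} p={p:.3f}")
```

With these numbers the lift is 40% and the two-sided p-value falls below 0.05. The same 40% lift measured on only a few hundred clicks per combination would usually not clear that bar, which is why pooled volume matters as much as the headline rate.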

According to Kantar's research on AI creative tagging, this approach reveals patterns that are invisible to human analysis. An AI system might discover, for example, that a specific background color palette combined with a particular text overlay speed in the first 3 seconds correlates with higher completion rates. A human analyst reviewing creatives one by one would be unlikely to spot that combination.

Performance pattern mapping

Tagging alone is not enough. The second layer is mapping those creative elements to real business outcomes across platforms and time periods.

This means answering questions like: do benefit-led hooks outperform problem-statement hooks on TikTok but underperform on Meta? Does showing the product in the first 2 seconds versus the first 5 seconds change CPA differently on Google versus Snapchat? Which messaging angles maintain performance longest before fatigue sets in?

These are cross-dimensional queries that require analyzing thousands of data points simultaneously. AI tools handle this natively. They surface correlations that would take a human analyst weeks to find, and they do it continuously, updating as new performance data flows in.
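One of the questions above, whether benefit-led or problem-statement hooks win on a given platform, reduces to a pivot: pool spend and conversions per (platform, hook) cell and compare CPA within each platform. A sketch with hypothetical field names and data:

```python
from collections import defaultdict


def cpa_by_platform_and_hook(rows: list[dict]) -> dict[tuple[str, str], float]:
    """Pooled CPA (total spend / total conversions) for each (platform, hook) cell."""
    spend: dict[tuple[str, str], float] = defaultdict(float)
    conversions: dict[tuple[str, str], int] = defaultdict(int)
    for row in rows:
        key = (row["platform"], row["hook"])
        spend[key] += row["spend"]
        conversions[key] += row["conversions"]
    # Cells with zero conversions have no defined CPA, so they are left out
    return {key: spend[key] / conversions[key] for key in spend if conversions[key] > 0}


def best_hook_per_platform(cpa: dict[tuple[str, str], float]) -> dict[str, tuple[str, float]]:
    """Lowest-CPA hook on each platform."""
    best: dict[str, tuple[str, float]] = {}
    for (platform, hook), value in cpa.items():
        if platform not in best or value < best[platform][1]:
            best[platform] = (hook, value)
    return best


# Hypothetical results
rows = [
    {"platform": "tiktok", "hook": "benefit_led", "spend": 1000.0, "conversions": 50},
    {"platform": "tiktok", "hook": "problem_statement", "spend": 1000.0, "conversions": 40},
    {"platform": "meta", "hook": "benefit_led", "spend": 1200.0, "conversions": 40},
    {"platform": "meta", "hook": "problem_statement", "spend": 1200.0, "conversions": 48},
]
print(best_hook_per_platform(cpa_by_platform_and_hook(rows)))
# {'tiktok': ('benefit_led', 20.0), 'meta': ('problem_statement', 25.0)}
```

Comparing within a platform, rather than across all platforms at once, avoids mistaking a platform's generally higher CPA for a weakness of the hooks that happen to run there.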

Fatigue detection and early warning

AI-powered fatigue detection monitors all active creatives across platforms for patterns of declining performance. Rather than waiting for a creative to obviously tank, the system tracks leading indicators: subtle CTR declines, spend share shifts, frequency increases, and CPM movements that precede a full performance collapse.
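Leading indicators are about direction rather than level, so one way to watch them is to fit a least-squares trend line over a recent window and divide by the window's mean, giving a fractional change per day. This is a minimal sketch, assuming daily series per creative; the metric names, the 7-day window, the per-metric limits, and the choice to treat falling spend share as the warning direction are all hypothetical.

```python
from typing import Optional


def ols_slope(values: list[float]) -> float:
    """Least-squares slope of values against day index 0..n-1."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


# Hypothetical limits on the relative daily trend (slope divided by the window mean).
# A negative limit flags a decline, a positive limit flags a rise.
DEFAULT_LIMITS = {"ctr": -0.02, "spend_share": -0.03, "frequency": 0.03, "cpm": 0.02}


def early_warning(series: dict[str, list[float]], window: int = 7,
                  limits: Optional[dict[str, float]] = None) -> list[str]:
    """Return the leading indicators whose recent trend has crossed its limit."""
    limits = DEFAULT_LIMITS if limits is None else limits
    flagged = []
    for metric, limit in limits.items():
        recent = series.get(metric, [])[-window:]
        if len(recent) < 2:
            continue  # a slope needs at least two points
        mean = sum(recent) / len(recent)
        if mean == 0:
            continue
        trend = ols_slope(recent) / mean  # fractional change per day
        if (limit < 0 and trend <= limit) or (limit > 0 and trend >= limit):
            flagged.append(metric)
    return flagged


days = range(7)
series = {
    "ctr": [0.020 - 0.0005 * d for d in days],    # falling about 2.7% of its mean per day
    "frequency": [1.5 + 0.06 * d for d in days],  # rising about 3.6% per day
    "cpm": [5.0] * 7,                             # flat
    "spend_share": [0.30] * 7,                    # flat
}
print(early_warning(series))  # ['ctr', 'frequency']
```

A trend-based check like this can fire while absolute CTR is still above the ad's historical average, which is the point of watching leading indicators instead of waiting for the level itself to look bad.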

Campaigns using AI-generated creative rotation reduced frequency-related performance decay by 38.4%, according to a Smartly.io analysis. Ads maintained above-baseline CTR performance for an average of 19.3 days compared to just 7.1 days for static single-creative campaigns.

The shift here is from reactive to proactive. Instead of pausing an ad after it has already wasted budget, teams can rotate creatives at the optimal moment, based on data rather than arbitrary schedules.

From analysis to action: closing the feedback loop

The most advanced AI ad analysis tools do not stop at telling you what happened. They close the loop between insight and action.

When analysis reveals that a specific combination of elements drives high performance, the system can recommend (or generate) new creative variations that incorporate those winning elements. When fatigue is detected, it can suggest which replacement creative from your library best fits the gap. When a new creative launches, it can predict likely performance based on its element composition.
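Predicting performance from element composition can be illustrated with a deliberately crude main-effects baseline: each element's effect is its average ROAS minus the overall average, and a new creative's prediction is the overall average plus the effects of its elements. This is a hypothetical illustration, not how Segwise or any named tool predicts. It ignores interactions and double-counts elements that tend to appear together; a production model would more likely use regularized regression validated on held-out creatives.

```python
from collections import defaultdict
from statistics import mean

Tags = dict[str, str]


def fit_element_effects(history: list[tuple[Tags, float]]) -> tuple[float, dict[tuple[str, str], float]]:
    """Effect of each (dimension, value) element = its mean ROAS minus the overall mean ROAS."""
    overall = mean(roas for _, roas in history)
    by_element: dict[tuple[str, str], list[float]] = defaultdict(list)
    for tags, roas in history:
        for dimension, value in tags.items():
            by_element[(dimension, value)].append(roas)
    effects = {element: mean(values) - overall for element, values in by_element.items()}
    return overall, effects


def predict_roas(tags: Tags, overall: float, effects: dict[tuple[str, str], float]) -> float:
    """Additive main-effects prediction; elements never seen before contribute 0."""
    return overall + sum(effects.get((dimension, value), 0.0) for dimension, value in tags.items())


# Hypothetical, perfectly balanced history: (tags, observed ROAS)
history = [
    ({"hook": "question", "cta": "text_overlay"}, 3.0),
    ({"hook": "question", "cta": "spoken"}, 2.0),
    ({"hook": "problem_statement", "cta": "text_overlay"}, 2.0),
    ({"hook": "problem_statement", "cta": "spoken"}, 1.0),
]
overall, effects = fit_element_effects(history)
print(predict_roas({"hook": "question", "cta": "text_overlay"}, overall, effects))     # 3.0
print(predict_roas({"hook": "benefit_led", "cta": "text_overlay"}, overall, effects))  # 2.5
```

The first prediction reproduces a known combination exactly only because the toy history is perfectly balanced. The second contains an unseen hook, which contributes nothing, so the prediction rests entirely on the text-overlay CTA's effect.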

This creates a continuous cycle: analyze performance, identify winning patterns, generate new creative based on those patterns, test, measure, and refine. As MarTech reported, a Forrester survey found that two out of three enterprise B2C marketing leaders believe AI-driven creative testing and analytics improve both efficiency and creative quality.

Understanding which creative elements drive ad performance is the core value proposition. Teams that can answer "why did this ad work?" with data, not opinions, build creative strategies that compound over time. Each cycle produces better-performing creative because each cycle is informed by the last.

What to look for in an AI ad performance analysis tool

Not all AI tools are built the same. Some handle basic reporting automation. Others go deep on creative-level analysis. Here is what separates the tools that actually change creative performance from the ones that just generate prettier dashboards.

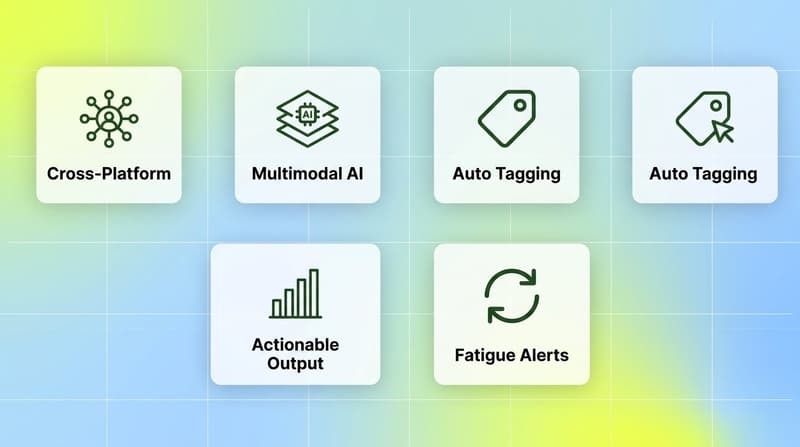

Cross-platform data unification

If a tool only connects to one ad network, it is solving a fraction of the problem. Most performance marketing teams run creative across Meta, Google, TikTok, Snapchat, AppLovin, and sometimes YouTube, Unity Ads, Mintegral, or IronSource. They also pull attribution data from MMPs like AppsFlyer, Adjust, Branch, and Singular.

A real creative intelligence tool unifies all of this. It lets you compare creative performance across platforms using the same tagging framework, spot which creative elements transfer well cross-platform, and identify where platform-specific creative strategies are needed.
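In practice, unifying platforms mostly means renaming each network's report columns into one shared schema and keying tags by (network, ad ID) so the same tagging framework applies everywhere. In this sketch `network_a`, `network_b`, and their column names are hypothetical placeholders, not real API fields; it also assumes every network reports spend in the same currency.

```python
from collections import defaultdict

# Hypothetical export column names per network; real API/report fields differ and must be checked.
FIELD_MAPS = {
    "network_a": {"id": "ad_id", "cost": "spend", "imps": "impressions", "clk": "clicks", "inst": "installs"},
    "network_b": {"creative": "ad_id", "spend_usd": "spend", "impressions": "impressions",
                  "clicks": "clicks", "conversions": "installs"},
}


def normalize_row(network: str, raw: dict) -> dict:
    """Rename one network's columns into the shared schema and record the source network."""
    mapping = FIELD_MAPS[network]
    row = {unified: raw[source] for source, unified in mapping.items()}
    row["network"] = network
    return row


def cpi_by_tag(rows: list[dict], tags: dict[tuple[str, str], str]) -> dict[str, float]:
    """Pooled CPI per tag value across every network, using one tag per (network, ad_id)."""
    spend, installs = defaultdict(float), defaultdict(int)
    for row in rows:
        tag = tags.get((row["network"], row["ad_id"]))
        if tag is None:
            continue  # untagged ads are skipped rather than guessed
        spend[tag] += row["spend"]
        installs[tag] += row["installs"]
    return {tag: spend[tag] / installs[tag] for tag in spend if installs[tag] > 0}


rows = [
    normalize_row("network_a", {"id": "a1", "cost": 100.0, "imps": 20000, "clk": 400, "inst": 50}),
    normalize_row("network_b", {"creative": "b7", "spend_usd": 90.0, "impressions": 15000,
                                "clicks": 300, "conversions": 30}),
]
tags = {("network_a", "a1"): "question_hook", ("network_b", "b7"): "question_hook"}
print(cpi_by_tag(rows, tags))  # {'question_hook': 2.375}
```

Keying by (network, ad ID) rather than ad ID alone avoids collisions when two networks happen to issue the same identifier.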

Multimodal creative analysis

Video ads have multiple dimensions: visuals, audio, text overlays, pacing, transitions. A tool that only analyzes thumbnail frames or on-screen text misses the majority of what makes a video ad work or fail.

Look for multimodal AI that processes video, audio, image, and text together. The ability to tag spoken dialogue, background music tone, scene transitions, and visual composition simultaneously is what enables genuine element-level performance analysis.

Automated, consistent tagging

Manual tagging does not scale. Even if a tool has great analysis capabilities, if it still requires human input for creative categorization, you will hit the same consistency and speed bottlenecks. The tagging system should be automatic, consistent, and customizable so you can define brand-specific or campaign-specific attributes.

Actionable output, not just data

A dashboard full of charts is not creative intelligence. The output should directly inform creative decisions: which elements to use in the next round of briefs, which creatives to pause, which variations to test. The best tools surface specific, actionable recommendations, not vague trends.
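As an illustration of output that is an action rather than a chart, the sketch below maps one ad's numbers to a single recommendation. The `AdSummary` type, the target CPA, the 20-conversion minimum, and the 0.8x/1.3x bands are hypothetical choices for illustration; a real team would set them from its own margins and volume.

```python
from dataclasses import dataclass


@dataclass
class AdSummary:
    ad_id: str
    spend: float
    conversions: int
    fatigued: bool  # e.g. the result of a fatigue check


def recommend(ad: AdSummary, target_cpa: float, min_conversions: int = 20) -> str:
    """Turn one ad's numbers into a single next action. All thresholds are illustrative."""
    if ad.fatigued:
        return "rotate"
    if ad.conversions == 0:
        # No conversions yet: stop once spend reaches twice the target CPA
        return "pause" if ad.spend >= 2 * target_cpa else "keep_testing"
    if ad.conversions < min_conversions:
        return "keep_testing"  # too little data to judge CPA
    cpa = ad.spend / ad.conversions
    if cpa <= 0.8 * target_cpa:
        return "scale"
    if cpa >= 1.3 * target_cpa:
        return "pause"
    return "keep"


ads = [
    AdSummary("ad_47", spend=375.0, conversions=25, fatigued=False),  # CPA 15
    AdSummary("ad_48", spend=700.0, conversions=25, fatigued=False),  # CPA 28
    AdSummary("ad_49", spend=500.0, conversions=25, fatigued=False),  # CPA 20
    AdSummary("ad_50", spend=500.0, conversions=40, fatigued=True),
]
for ad in ads:
    print(ad.ad_id, recommend(ad, target_cpa=20.0))
```

Checking fatigue first means a creative with a good lifetime CPA still gets rotated once its recent signals turn, instead of being scaled on stale averages.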

Why understanding ad performance at the creative level compounds over time

Most marketing investments have diminishing returns. You optimize a campaign, squeeze out a few percentage points, and hit a ceiling. Creative intelligence works differently because it compounds.

Every creative cycle that is analyzed adds to your library of element-level performance data. Over three months, you do not just know which hooks work best. You know which hooks work best for which audience segments, on which platforms, during which phases of a campaign's lifecycle, and in combination with which CTA formats.

This is institutional creative knowledge. It lives in data, not in the head of a single creative strategist who might leave next quarter. It scales across campaigns, products, and markets.

Teams at this level stop thinking about individual ads and start thinking about creative systems. They know their performance formula and can produce variations faster and more reliably because each new creative is informed by the accumulated evidence of what works.

According to Deloitte Digital's Creative Effectiveness Index, campaigns using a hybrid human-AI production model outperformed fully automated AI campaigns by 41.3% in long-term brand equity and outperformed fully human campaigns by 29.7% in short-term conversion metrics. The takeaway is clear: AI analysis gives humans better inputs, and humans give AI better direction. The combination compounds.

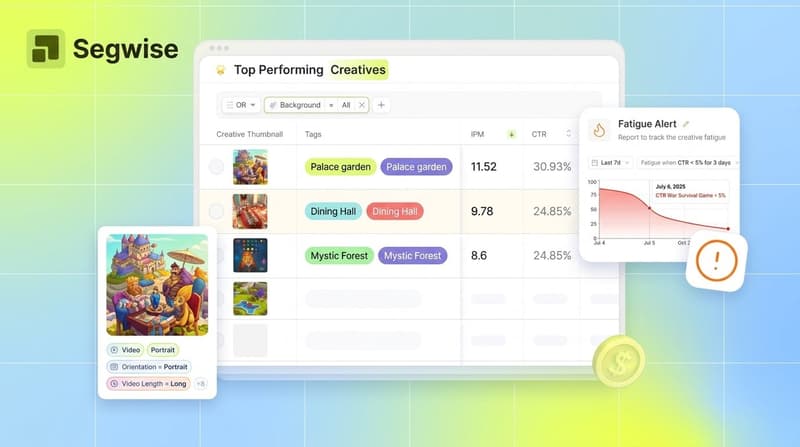

How Segwise approaches AI-powered creative intelligence

Segwise is built around the idea that creative intelligence should be fully automated and deeply integrated across your entire ad ecosystem. The platform connects to 15+ ad networks (Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, IronSource, and more) plus MMPs including AppsFlyer, Adjust, Branch, and Singular, bringing all creative and performance data into a single unified view.

At the core is Segwise's Creative Tagging Agent, a multimodal AI that automatically analyzes every creative element across video, audio, image, and text. It tags hooks, CTAs, visual styles, spoken dialogue, background music, pacing, and emotional tone, then maps every tag directly to performance metrics like CPI, ROAS, and CTR.

The Creative Strategy Agent acts as an always-on creative strategist that maintains full context across all your creative data. Ask it anything about your creative performance in plain language. It also powers automated fatigue detection, flagging performance declines before they drain budget, plus asset clustering to isolate which specific creative treatments cause performance differences between similar ads.

When the analysis reveals winning patterns, Segwise's Creative Generation Agent turns those insights into new creative iterations. It generates data-backed creatives from winning elements, supports prompt-based editing, and exports in multiple aspect ratios ready for any ad network.

The result: teams using Segwise report saving up to 20 hours per week on manual creative analysis, achieving a 50% ROAS improvement through data-driven creative decisions, and halving creative production time by eliminating guesswork from the creative process.

The bottom line

The performance marketing teams that win in 2026 are not the ones with the biggest budgets or the most creatives in rotation. They are the ones that understand, at the element level, why their ads work.

AI tools for ad performance analysis have made this understanding accessible. Automated creative tagging replaces 20+ hours of manual work per week. Performance pattern mapping turns raw data into creative strategy. Fatigue detection catches declining ads before they waste budget. And closed-loop systems turn insights into better creative faster.

The shift is not incremental. It is structural. Teams that adopt AI-powered creative intelligence build compounding advantages: each creative cycle produces better results because each cycle is informed by deeper data. Those that do not adopt it will keep guessing while competitors keep compounding.

If your team is still relying on spreadsheets and gut instinct to understand ad creative performance, explore how Segwise's AI-powered creative intelligence platform can give you the element-level insights that drive real ROAS improvement.

Frequently asked questions

What is AI ad performance analysis and how does it differ from standard analytics?

AI ad performance analysis breaks down individual creatives into their component elements (hooks, visuals, CTAs, pacing, audio) and maps each element to performance outcomes like ROAS, CPI, and CTR. Standard analytics tools show aggregate campaign metrics without explaining which specific creative components caused the results. Platforms like Segwise and other creative intelligence tools automate this element-level analysis across multiple ad networks simultaneously.

Why does understanding creative performance matter more than campaign metrics?

Creative drives roughly 70% of campaign performance outcomes. Campaign-level metrics (CPM, CPA, ROAS) tell you the score but not how to improve it. Element-level creative analysis tells you exactly which hooks, visuals, and messaging patterns drive results, so you can replicate successes and avoid repeating failures. Segwise automates this by tagging creative elements with multimodal AI and mapping them to performance data across platforms.

How do AI tools detect creative fatigue before performance drops?

AI-powered fatigue detection continuously monitors leading indicators across all active creatives, such as subtle CTR declines, spend share shifts, frequency increases, and CPM movements. Because these signals appear before a full performance collapse, AI monitoring can flag fatigue 2-3 weeks earlier than manual checks, giving teams time to rotate creatives proactively. Platforms like Segwise, Smartly.io, and Madgicx offer automated fatigue tracking, though Segwise is the only one that combines fatigue alerts with element-level creative tagging to explain why a creative is fatiguing, not just that it is.

What is the difference between creative analytics and creative intelligence?

Creative analytics refers to reporting on creative performance metrics, often at the campaign or ad level. Creative intelligence goes further: it automates element-level tagging, maps those elements to outcomes, detects fatigue patterns, and closes the loop by informing (or generating) new creative based on data. Segwise's platform covers the full creative intelligence stack, from tagging through generation, while basic analytics tools stop at dashboards.

Can AI actually tell me which part of my video ad is working?

Yes. Multimodal AI tools analyze video ads frame by frame, processing visual composition, on-screen text, spoken dialogue, background music, pacing, and transitions simultaneously. They tag each element and correlate it with performance data. Segwise and VidMob both offer this kind of element-level video analysis, though Segwise is unique in also tagging playable (interactive) ads, which matters for mobile gaming advertisers running playable formats on AppLovin or Unity Ads.

How long does it take to see results from AI creative analysis tools?

Most teams see actionable insights within the first 1-2 weeks after connecting their ad accounts, since the AI begins tagging and analyzing existing creatives immediately. Performance improvements typically follow within 1-3 creative cycles as teams start applying element-level insights to new briefs. Forrester's research indicates that enterprise brands report measurable ROAS improvements within the first 90 days of using AI-driven creative analysis.

Do I still need human creative strategists if I use AI analysis tools?

Absolutely. Deloitte Digital's research shows that hybrid human-AI campaigns outperform both fully automated and fully human approaches. AI excels at pattern detection, consistent tagging, and continuous monitoring at scale. Humans bring storytelling, brand judgment, and strategic creativity that AI cannot replicate. The most effective teams use AI to give human strategists better inputs, then apply human judgment to turn those inputs into compelling creative.

What ad networks and platforms do creative intelligence tools typically support?

Comprehensive creative intelligence platforms connect to major ad networks including Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, and IronSource. They also integrate with MMPs like AppsFlyer, Adjust, Branch, and Singular for unified attribution data. Cross-platform coverage is important because creative performance varies significantly by network, and teams need a single source of truth to compare creative elements across channels.
