Here's what spending $115k testing 5 AI UGC platforms teaches you

Curtis Howland of MisfitMarketing ran a controlled $115k experiment across five AI UGC SaaS platforms: same offers, same scripts, same audiences, same DTC client. No brand deals, no affiliate relationships. Just ad spend on five platforms and a clear winner at the end.

The platforms: Arcads AI, Argil, Creatify AI, HeyGen, and Mirage Studio. The winner hit a 2.42x ROAS at a $70 CPA. But the "who won" matters less here than why it won, and what that tells you about running AI UGC tests that actually produce usable signal.

This post breaks down those findings, layers in what broader industry data shows about AI UGC performance in 2025-2026, and explains the three-step framework Howland's team used to avoid "AI slop." If you're spending serious budget on creative testing, these findings are worth sitting with.

Key takeaways

Mirage Studio won the $115k head-to-head test with a 2.42x ROAS and $70 CPA, driven primarily by high-realism avatars with natural micro-expressions, hand gestures, and eye movement

No platform works out of the box. The winning framework required clear FTC disclaimers, multi-scene editing, and customer-language scripts

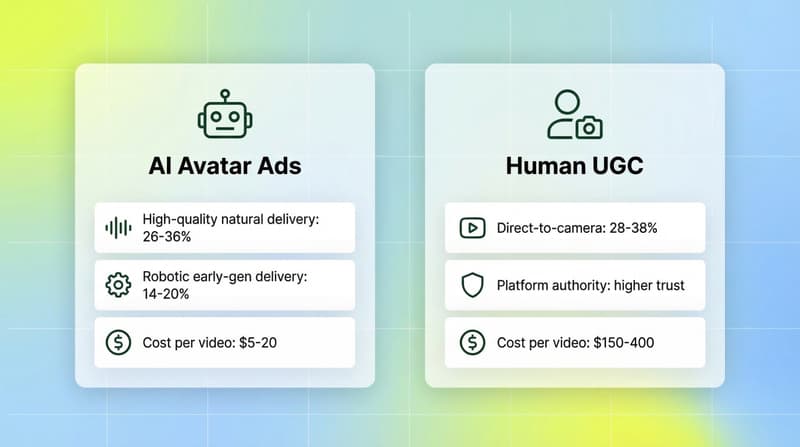

AI UGC production costs 90-98% less than traditional UGC ($5-20 per video vs. $150-400 per creator video), enabling 8x more creative testing in the same timeframe (UGC Lab)

AI UGC performance is converging with human UGC: hook rates for high-quality AI avatars (26-36%) now sit within 5-10% of human direct-to-camera content (28-38%) (CineRads)

The 70/30 hybrid model is the dominant framework in practice: 70% AI UGC for high-volume testing, 30% human UGC for hero assets (Koro)

Generating 100+ AI variants is now easy. Knowing which creative elements actually drove results across all of them is the hard part

The $115k test: what was tested and how

The test setup was methodologically clean, which matters if you want conclusions that mean anything. According to the original LinkedIn post from MisfitMarketing, the constraints were strict:

Same offers across all platforms

Same scripts across all platforms

Same target audiences across all platforms

Zero brand deals or affiliate relationships with any platform

Each platform's output went into a separate ad set for a DTC client. Here's what made each platform distinct:

Arcads AI. The OG of AI UGC. Over 300 AI actors, two-minute generation time. Best for rapid hook testing at scale. If you need 20 hook variants by tomorrow, this is the fastest route.

Argil. Best-in-class for editors who want control. Multi-camera angles, body language control, and auto-editing with B-roll baked in. The reviewer's take: "too much control for me, but a pro-editor would love it."

Creatify AI. Built for eCom brands running batch tests. 10,000+ B-roll clips, product URL to video in minutes. Strongest for product-focused content at scale.

HeyGen. The reliable all-rounder. Clean outputs, strong lip-sync, consistent results. Less experimental but fewer surprises. It also comes up often as a recommendation in the community.

Mirage Studio. The winner. Built on their own foundational model. High-quality avatars with natural micro-expressions, hand gestures, and subtle eye movements. The realism edge was not cosmetic; it translated directly to performance numbers.

The result: Mirage delivered 2.42x ROAS at $70 CPA against the same offer and audience every platform faced.
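
For readers who want to sanity-check how those two numbers relate, here is a minimal sketch using the standard definitions (ROAS is attributed revenue divided by ad spend, CPA is ad spend divided by conversions). The spend, revenue, and conversion figures below are hypothetical, chosen only so they reproduce the reported 2.42x and $70; the original post does not publish a per-platform breakdown.

```python
def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue per dollar of spend."""
    return revenue / spend


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition: spend per attributed conversion."""
    return spend / conversions


def implied_revenue_per_conversion(roas_value: float, cpa_value: float) -> float:
    """If both metrics cover the same spend and conversions,
    revenue per conversion equals ROAS multiplied by CPA."""
    return roas_value * cpa_value


# Hypothetical ad set totals, NOT figures from the test.
spend = 7_000.0
revenue = 16_940.0
conversions = 100

print(round(roas(revenue, spend), 2))
print(cpa(spend, conversions))
print(round(implied_revenue_per_conversion(2.42, 70.0), 2))
```

Under the assumption that both metrics were measured on the same ad set, a 2.42x ROAS at a $70 CPA implies roughly $169 in attributed revenue per acquisition.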

Why realism won: what the data actually tells you

Mirage's edge comes down to a conversion mechanism, not an aesthetic preference.

CineRads' 2026 benchmark analysis of DTC brands running both AI avatar and human UGC creatives found:

Human UGC (direct-to-camera): 28-38% hook rate (3-second view rate)

AI avatar (high-quality, natural delivery): 26-36% hook rate

AI avatar (robotic delivery, early-gen): 14-20% hook rate

The gap between "high quality" and "robotic" AI avatars is roughly a 2x difference in hook rate (26-36% versus 14-20%). Early-gen AI UGC looked like AI. Tools like Mirage, which built its own foundational model, close that gap because natural micro-expressions and eye movement trigger the same trust signals that make real UGC effective.
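
To make the comparison concrete, the sketch below compares the three bands by their midpoints. Using the midpoint is an assumption made here for illustration; the benchmark as cited gives ranges only, not averages.

```python
# Hook-rate bands (percent) as cited above.
BANDS = {
    "human_direct_to_camera": (28, 38),
    "ai_high_quality": (26, 36),
    "ai_robotic": (14, 20),
}


def midpoint(band: tuple[int, int]) -> float:
    """Midpoint of a (low, high) range; an illustrative simplification."""
    low, high = band
    return (low + high) / 2


def midpoint_ratio(numerator: str, denominator: str) -> float:
    """Ratio of two bands' midpoints."""
    return midpoint(BANDS[numerator]) / midpoint(BANDS[denominator])


print(round(midpoint_ratio("ai_high_quality", "ai_robotic"), 2))
print(round(midpoint_ratio("human_direct_to_camera", "ai_high_quality"), 2))
```

On midpoints, high-quality AI avatars come out at about 1.8x the hook rate of robotic ones, while human UGC leads high-quality AI by about 6%.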

EzUGC's 2026 comparison notes that both Arcads and Creatify can cut production costs by up to 90% versus traditional shoots, but the right choice depends on whether your bottleneck is creative quality or campaign optimization velocity. Mirage sits in a different category: high-realism avatars as its primary differentiator, not just speed.

For DTC brands where spokesperson-style UGC is the native ad format (especially in beauty, skincare, and supplements), the authenticity signal matters directly to CPA. Superscale's analysis found AI UGC delivered 46% lower cost per install versus banner ads in some verticals, while traditional UGC still maintains higher absolute conversion rates. The gap is closing every quarter, though.

The 3-step "no slop" framework

Every platform produced ads in the test. The quality gap came from how the team used each platform, not just which platform they chose.

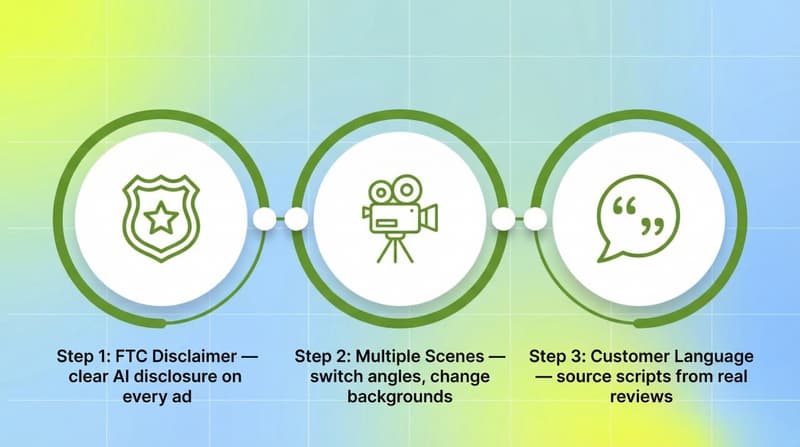

Step 1: Add the FTC disclaimer

Every ad included a clear, upfront statement that the testimonial was AI-generated. Not optional. The FTC's enforcement actions on undisclosed AI content have been accelerating, and the team explicitly said they would not skip this step.

Beyond compliance, transparency is increasingly a conversion asset. Superscale's data shows AI UGC carries a 68% consumer quality approval rating when authenticity signals are properly managed, versus 81% for human UGC. Closing that 13-point gap starts with not trying to deceive viewers.

Step 2: Use multiple scenes

Single-camera, static AI avatar ads are the easiest to spot as AI, and the fastest to fatigue. The winning approach: switch scenes, change camera angles, change backgrounds.

Platform capability matters here. Argil's multi-camera angles and body language control make this easier. Creatify's 10,000+ B-roll library makes it accessible even without heavy editing. The main technical challenge is character continuity across scenes. DesignRevision's comparison notes the best 2026 AI tools are largely solving this, but it remains something to test per-platform.

DTC best practice frameworks recommend targeting a hook rate above 30%. Static single-scene AI videos typically won't get there.
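
A minimal sketch of checking ads against that 30% target follows. It assumes hook rate is 3-second views divided by impressions, which is a common convention but not one the source defines explicitly; the `AdStats` fields and the example numbers are hypothetical.

```python
from dataclasses import dataclass

HOOK_RATE_TARGET = 0.30  # the 30% benchmark mentioned above


@dataclass
class AdStats:
    name: str
    impressions: int
    three_second_views: int

    @property
    def hook_rate(self) -> float:
        """3-second views per impression (assumed definition)."""
        if self.impressions == 0:
            return 0.0
        return self.three_second_views / self.impressions


def below_target(ads: list[AdStats], target: float = HOOK_RATE_TARGET) -> list[str]:
    """Names of ads whose hook rate falls under the target."""
    return [ad.name for ad in ads if ad.hook_rate < target]


# Hypothetical numbers for illustration only.
ads = [
    AdStats("static_single_scene", impressions=10_000, three_second_views=1_800),
    AdStats("multi_scene_cut", impressions=10_000, three_second_views=3_200),
]
print(below_target(ads))
```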

Step 3: Use customer language

This is where AI UGC most often fails silently. The script determines whether the ad creates accurate expectations about the product experience. Over-promising in the AI ad, then under-delivering post-purchase, produces returns and negative reviews that don't show up in your ROAS numbers until later.

The team's principle: "Your ad creates expectations and they need to feed transparently into the real experience." Practically, this means sourcing scripts from actual customer reviews, the language real buyers use to describe the product, not the language marketers use to sell it.
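
One rough way to start mining reviews for that language is to count the short phrases buyers repeat. The sketch below is an illustration only, not the team's actual process: the stopword list is a tiny hand-picked assumption, and the reviews are invented.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real one would be longer.
STOPWORDS = {"a", "the", "and", "my", "i", "it", "is", "was",
             "after", "by", "of", "to", "this"}


def top_phrases(reviews: list[str], n: int = 2, k: int = 5) -> list[tuple[str, int]]:
    """Return the k most frequent n-word phrases that neither start
    nor end with a stopword."""
    counts: Counter[str] = Counter()
    for review in reviews:
        words = re.findall(r"[a-z']+", review.lower())
        for i in range(len(words) - n + 1):
            gram = words[i:i + n]
            if gram[0] in STOPWORDS or gram[-1] in STOPWORDS:
                continue
            counts[" ".join(gram)] += 1
    return counts.most_common(k)


# Invented reviews for illustration only.
reviews = [
    "My skin feels less dry after a week",
    "Skin feels softer and less dry",
    "Less dry by day three",
]
print(top_phrases(reviews, k=2))
```

Phrases that surface repeatedly are candidates for hooks and claims, since they describe the product in terms buyers have already confirmed.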

The real bottleneck after you scale AI UGC

The $115k test ended with a winner. Most teams don't stop there; they want to scale the winner and iterate on it.

This is where the next problem surfaces.

Segwise's analysis of AI UGC scaling patterns makes it concrete: "When you move from managing five human UGC videos to 125 AI UGC variants, the volume of performance data becomes unmanageable in spreadsheets. The core question shifts from 'Which ad is winning?' to: Which AI-generated element is actually driving results?"

That's the right question. Answering it requires creative-level data, not just ad-level data.

Was Mirage winning because of the avatar's realism, the hook, the scene structure, the CTA language, or the background? If you can't attribute performance to specific creative elements, you can't intelligently brief your next batch of AI UGC variations. You're back to guessing.
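
As a rough illustration of what element-level data looks like, the sketch below pools spend and revenue by creative tag and computes ROAS per tag. The tag names and ad records are hypothetical, and this is a simple aggregation written for this post, not any vendor's method. Pooled numbers like these are correlational: tags that tend to appear together will share credit.

```python
from collections import defaultdict


def roas_by_tag(ads: list[dict]) -> dict[tuple[str, str], float]:
    """Pool spend and revenue for every (dimension, value) tag pair
    and return ROAS per pair."""
    totals: dict = defaultdict(lambda: {"spend": 0.0, "revenue": 0.0})
    for ad in ads:
        for dimension, value in ad["tags"].items():
            bucket = totals[(dimension, value)]
            bucket["spend"] += ad["spend"]
            bucket["revenue"] += ad["revenue"]
    return {
        key: bucket["revenue"] / bucket["spend"]
        for key, bucket in totals.items()
        if bucket["spend"] > 0
    }


# Hypothetical ad-level records with hand-assigned tags.
ads = [
    {"tags": {"hook": "question", "scenes": "multi"}, "spend": 100, "revenue": 300},
    {"tags": {"hook": "question", "scenes": "single"}, "spend": 100, "revenue": 100},
    {"tags": {"hook": "statement", "scenes": "multi"}, "spend": 200, "revenue": 400},
]
for tag, value in sorted(roas_by_tag(ads).items()):
    print(tag, round(value, 2))
```

In this made-up data both hook types land at the same ROAS while multi-scene ads beat single-scene ones, which is the kind of signal an ad-level ranking would hide.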

This is where platforms like Segwise come in. Segwise's multimodal AI automatically tags every creative element across your ad library, including visual style, hook type, CTA format, scene structure, and audio tone, and maps each tag to performance metrics (ROAS, CTR, CPI, CVR) across 15+ ad networks including Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, and IronSource, plus MMPs like AppsFlyer, Adjust, Branch, and Singular. When you're running 50+ AI UGC variants, Segwise's tag-level reporting tells you which elements are driving performance, so your next creative brief is informed by data rather than instinct. Teams using this approach save up to 20 hours per week that would otherwise go to manual analysis.

Choosing your platform: a decision framework

The $115k test gives you directional signal. Here's how to translate it into a platform decision for your own stack:

Arcads AI works best if hook testing velocity is the priority (300+ actors, 2-minute generation time). Good for identifying winning hooks before investing in higher-production content.

Argil works best if you have an editor on the team who can use full multi-camera control. Also useful if B-roll integration matters and you want it automated rather than manual.

Creatify AI works best for eCom batch tests that need product-URL to video at scale. Also has native A/B testing and analytics built into the platform.

HeyGen works best if reliability and consistency matter more than edge features, and it is particularly strong for multilingual content.

Mirage Studio works best when realism is your conversion driver, especially for DTC spokesperson-style ads in beauty, supplements, or lifestyle. A deliberate quality-over-volume tradeoff.

One thing ATTN Agency's analysis gets right: "Success requires combining AI capabilities with strategic creative thinking, statistical rigor, and deep customer understanding." The platform is a production tool. The creative strategy, including angles, hooks, scripts, and testing frameworks, still requires human judgment. Picking the best platform without a strong testing process is just expensive mediocrity.

What comes after the platform test

The team's stated next move was telling: "Taking existing winners and using AI to generate new variations, angles, and settings. Moving away from real content, though? Not a chance."

That's the 70/30 model in practice. Industry consensus in 2026 has largely settled on this split: 70% AI UGC for volume testing, 30% human UGC for hero assets. AI finds the winning angles. Human creators execute the best-performing concepts at a quality ceiling AI hasn't matched yet.
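
In planning terms, the split is simple arithmetic. The helper below is a hypothetical sketch that divides a planned number of creatives by an AI share given in whole percent.

```python
def split_creatives(total: int, ai_percent: int = 70) -> dict[str, int]:
    """Split a planned creative count between AI and human UGC.
    The AI share is rounded; human UGC gets the remainder."""
    if not 0 <= ai_percent <= 100:
        raise ValueError("ai_percent must be between 0 and 100")
    ai_count = round(total * ai_percent / 100)
    return {"ai_ugc": ai_count, "human_ugc": total - ai_count}


print(split_creatives(50))
print(split_creatives(120))
```

The model as described splits creative production, so the example counts creatives rather than dollars; a budget split would look different given how much cheaper each AI video is.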

What this approach demands operationally is more than most teams account for. Running 50+ variants requires deliberate ad set structure and clear naming conventions so you can actually attribute results back to creative decisions later. You need to know which specific hooks, formats, and visual elements are winning, not just which ads are winning. And every subsequent round of AI UGC should be briefed from the performance data of the previous round, not from intuition.
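
A naming convention only helps if it is enforced and machine-readable. The sketch below shows one possible scheme, assumed here for illustration: five underscore-separated fields that can be built and parsed reliably. The field list is hypothetical and should be adapted to whatever elements you actually vary.

```python
# Hypothetical field order for ad names.
FIELDS = ("platform", "hook", "scene", "cta", "version")
SEP = "_"


def build_ad_name(**parts: str) -> str:
    """Join the required fields into one ad name, rejecting values
    that would make the name ambiguous to parse."""
    missing = [field for field in FIELDS if field not in parts]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    values = [str(parts[field]) for field in FIELDS]
    if any(SEP in value or not value for value in values):
        raise ValueError("field values must be non-empty and contain no separator")
    return SEP.join(values)


def parse_ad_name(name: str) -> dict[str, str]:
    """Split an ad name back into its fields."""
    values = name.split(SEP)
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}")
    return dict(zip(FIELDS, values))


name = build_ad_name(platform="mirage", hook="question", scene="multi",
                     cta="shopnow", version="v3")
print(name)
print(parse_ad_name(name))
```

Parsed names can then feed directly into element-level reporting, since each field becomes a dimension you can group results by.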

CineRads' benchmark data makes a useful point here: "The creative quality ceiling for AI video has risen enough in 2025-2026 that the performance gap is no longer a fundamental limitation, it is a testing and iteration challenge." The platform problem is largely solved. The process problem is what's left.

Conclusion

The $115k test validated something worth remembering: AI UGC platform choice matters, but execution matters more. Mirage won on realism, a genuine differentiator for DTC spokesperson-style ads. But every platform in the test had a legitimate use case, and the three-step process (disclaimer, multi-scene, customer language) was consistent across all of them.

The bigger takeaway is what happens after you find a winner. Scaling from one winning platform to 50+ AI UGC variants creates a creative data problem that doesn't solve itself. Knowing which elements drove your 2.42x ROAS, and using that intelligence to brief the next round, is what separates a one-time win from a repeatable creative engine.

If you're scaling AI UGC and need to understand which creative elements are actually driving performance across your variants, Segwise's multimodal creative intelligence platform automatically tags every element across your ad library and maps them to ROAS, CTR, and CPI. Book a demo to see how teams use tag-level data to turn creative testing into a systematic process.

Frequently asked questions

Which AI UGC platform performed best in the $115k test?

Mirage Studio won with a 2.42x ROAS and $70 CPA on a DTC client's ad spend. The key advantage was avatar realism: natural micro-expressions, hand gestures, and subtle eye movements that outperformed the more static delivery of other platforms. The test used identical offers, scripts, and target audiences across all five platforms.

Is AI UGC performance competitive with human UGC?

Yes, for well-produced AI avatars. According to CineRads' 2026 benchmark data, high-quality AI avatars achieve 26-36% hook rates, compared to 28-38% for human direct-to-camera UGC. The performance gap is narrowing every quarter, particularly in DTC verticals like beauty, skincare, and supplements where the spokesperson format is native.

How much cheaper is AI UGC compared to human UGC?

Production cost per video is 90-98% lower with AI UGC. Industry benchmarks put traditional UGC at $150-400 per video, while AI UGC costs $5-20 per video. This cost difference enables 8x more creative testing in the same timeframe and budget.
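
The savings range depends on which ends of the two price bands you pair up. The sketch below runs the arithmetic on the quoted per-video prices; the pairings are an illustration added here, not a calculation taken from the cited benchmark.

```python
def savings_pct(ai_cost: float, human_cost: float) -> float:
    """Percent saved per video when using AI instead of human UGC."""
    return (1 - ai_cost / human_cost) * 100


AI_COSTS = (5, 20)        # dollars per AI UGC video, as quoted
HUMAN_COSTS = (150, 400)  # dollars per traditional UGC video, as quoted

for ai_cost in AI_COSTS:
    for human_cost in HUMAN_COSTS:
        saved = round(savings_pct(ai_cost, human_cost), 1)
        videos_per_dollar_ratio = human_cost / ai_cost
        print(ai_cost, human_cost, saved, videos_per_dollar_ratio)
```

Pairing the extremes gives savings from about 87% ($20 vs. $150) to about 99% ($5 vs. $400), so the quoted 90-98% sits inside that span. The most conservative pairing also yields 7.5x as many videos per dollar, which is close to the 8x figure, though the source does not say how that figure was derived.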

Do AI UGC platforms require FTC disclaimers?

Yes. The FTC has been actively enforcing disclosure requirements for AI-generated content. The MisfitMarketing team included clear disclaimers on every AI ad stating the content used an AI actor, not a real testimonial.

What is the 70/30 model for AI and human UGC?

The 70/30 model allocates 70% of creative production to AI UGC for high-volume testing and 30% to human creators for hero assets. AI finds winning angles cheaply and quickly; human creators then execute the best-performing concepts at a higher quality ceiling. This is the dominant framework across successful DTC performance marketing teams in 2026.

What is the biggest operational challenge when scaling AI UGC?

Volume itself. Once you're running 50-125+ AI UGC variants, manually analyzing which creative elements drove performance becomes unmanageable in spreadsheets. The bottleneck shifts from "which ad won?" to "which specific hook, scene structure, avatar style, or CTA drove the results?" That requires creative intelligence tools that can tag and attribute performance at the element level, not just the ad level.

Is Mirage Studio the right choice for every brand?

No. Mirage excels for DTC spokesperson-style content where realism is the conversion driver: beauty, supplements, lifestyle products. Creatify is better for eCom batch testing with product-URL automation. Arcads is best for rapid hook testing at scale. HeyGen suits multilingual and polished professional content. Platform choice should follow your primary bottleneck: realism, volume, speed, or workflow integration.
