How to Test Creative Angles on Meta Ads Without Wasting Budget

Most Meta ad budgets do not get wasted on bad targeting. They get wasted on bad creative testing.

The team launches five ads, waits a week, calls the one with the best ROAS the winner, and briefs more of the same. A few months later, performance is inconsistent, costs are climbing, and nobody is quite sure what is actually working. The problem is not the algorithm. It is the absence of a real, data-driven testing system.

Creative angle testing is fundamentally different from standard creative testing. Creative testing compares two specific ads. Angle testing starts further upstream: it identifies which fundamental message, appeal, or framing strategy resonates with your audience before you invest in production and spend at scale.

Getting the angle right is worth far more than getting the headline right. Copywriter John Caples documented this decades ago, in a passage David Ogilvy later quoted: the same publication, same space, same photography, and same carefully written copy, but one ad sold 19.5x more than the other simply because it used the right appeal.

That gap still exists on Meta today. And the teams closing it systematically are the ones compounding on creative performance rather than starting over every quarter.

Key Takeaways

Creative angles, meaning the fundamental appeal or framing used in an ad, account for approximately 70% to 80% of performance variation. Audiences, settings, and bid strategy account for the rest.

Isolate one variable at a time: This is the foundation of any learning system. Changing multiple variables simultaneously is the single most expensive testing mistake.

Budget for Signal: Meta's algorithm needs a consistent volume of conversions to optimize. Budgets set below the threshold needed for 2 to 3 conversions per day per ad set produce noisy data, not learning.

ABO (Ad Set Budget Optimization) is the right structure for angle testing because it ensures equal spend across angles. CBO is better reserved for scaling proven winners.

Format Integrity: Meta’s algorithm often exhibits a spend bias toward high-inventory formats (like Reels or Advantage+ Catalog). Mixing formats in the same ad set prevents lower-priority formats from reaching statistical significance.

Stay Disciplined: Most wasted budget in Meta angle testing comes from killing tests too early, not from running them too long.

What is a creative angle and why does it matter more than execution?

A creative angle is the fundamental appeal or framing strategy behind an ad. It is not the visual treatment, the headline phrasing, or the CTA copy. It is the underlying reason the ad should resonate with your audience.

For a fitness product, "lose weight for your wedding" and "build sustainable habits" are different angles. Both can be executed as polished video ads with identical production quality and similar messaging styles. The performance difference between them can be dramatic, because one appeal will resonate with your specific audience’s psychology and the other will not.

This is why experienced practitioners focus angle testing at the top of the creative hierarchy and treat execution-level tests (headline variants, visual treatments, CTA phrasing) as secondary. The right angle with mediocre execution usually outperforms the wrong angle with excellent execution.

For practical angle development, a useful structure is to categorize angles across a few dimensions: the problem they address (functional, emotional, social), the evidence type they use (social proof, product demonstration, transformation story, authority), and the audience mindset they target (aspirational, fearful, analytical, convenience-seeking). Most brands have three to five genuinely distinct angles worth testing. More than that and you are often just testing variations on the same core message.

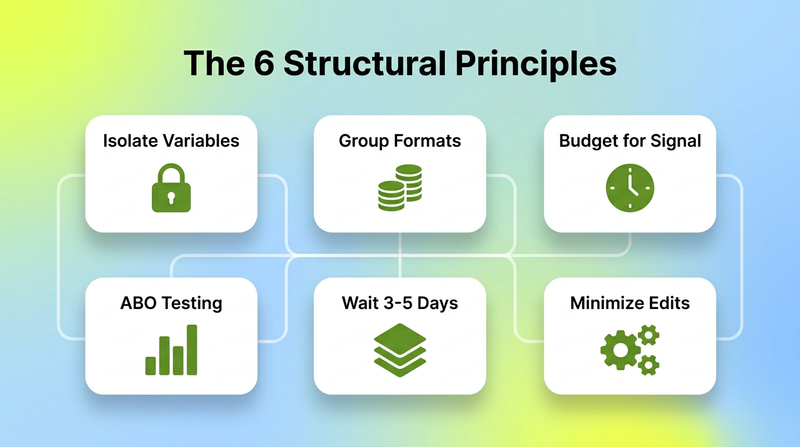

The Structural Principles of Meta Creative Testing

Before getting into the tactical specifics of angle testing, it helps to cover the six principles that determine whether a testing program produces learning or just produces spend.

1. Isolate one variable at a time

This is the most violated principle in Meta advertising. If you are testing angles, keep format, audience, budget, and campaign structure identical across every angle. If you are testing formats, keep the angle and copy identical. Changing more than one variable means you cannot isolate what caused the difference in results. You get data but no learning, which means the next test starts from the same baseline of uncertainty.
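The single-variable rule can be checked mechanically before launch. The sketch below is a minimal illustration, not a Meta API integration: `AdSetConfig` and its field names are hypothetical planning records invented for this example. It lists every field that differs across the planned ad sets and passes the plan only if the tested variable is the sole difference.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AdSetConfig:
    # Hypothetical planning record, not a Meta API object.
    angle: str
    creative_format: str
    audience: str
    daily_budget: float
    attribution_window: str


def varying_fields(ad_sets):
    """Return the names of all fields whose values differ across the ad sets."""
    rows = [asdict(ad_set) for ad_set in ad_sets]
    if not rows:
        return set()
    return {name for name in rows[0] if len({row[name] for row in rows}) > 1}


def is_clean_test(ad_sets, tested_variable):
    """True only when the tested variable is the single thing that changes."""
    return varying_fields(ad_sets) == {tested_variable}


plan = [
    AdSetConfig("wedding deadline", "video", "broad, US, 25-44", 100.0, "7-day click"),
    AdSetConfig("sustainable habits", "static image", "broad, US, 25-44", 100.0, "7-day click"),
]
print(sorted(varying_fields(plan)))  # ['angle', 'creative_format']
print(is_clean_test(plan, "angle"))  # False
```

Here the plan fails because the format changes along with the angle, so any difference in results could not be attributed to either one.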

2. Group creative formats together

Meta's algorithm prioritizes creative formats in a consistent hierarchy based on available inventory. Dynamic catalogs and Reels tend to absorb the most spend. If you place a Reel and a static image in the same ad set, the Reel will likely starve the static image of spend. You will draw incorrect conclusions because the static image never received a fair test. For angle testing, test the same angle across the same format type.

3. Set budgets that support actual optimization

The practical threshold for the Meta algorithm is 2 to 3 conversions per ad set per day. To determine your testing budget, take your target cost per conversion and set each ad set's daily budget at 2x or 3x that number. If your target cost per purchase is $50, your minimum daily test budget for an ad set should be $100 to $150. Running below this threshold means the algorithm is making decisions with insufficient data, producing results that look definitive but are actually statistical noise.
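The budget rule above is simple arithmetic, and it is worth working out the total cost of a test before launch. The sketch below assumes one ad set per angle with equal budgets; the function names are illustrative, and the four-angle, five-day scenario is an example, not a recommendation.

```python
def daily_test_budget(target_cpa, min_conversions=2, max_conversions=3):
    """Return the (low, high) daily budget range for a single ad set."""
    if target_cpa <= 0:
        raise ValueError("target_cpa must be positive")
    return (target_cpa * min_conversions, target_cpa * max_conversions)


def total_test_cost(target_cpa, angles, days, conversions_per_day=2):
    """Total spend for a test with one equally funded ad set per angle."""
    return target_cpa * conversions_per_day * angles * days


low, high = daily_test_budget(50.0)
print(low, high)  # 100.0 150.0

# Four angles for five days at the lower bound of two conversions per day.
print(total_test_cost(50.0, angles=4, days=5))  # 2000.0
```

If the total is more than the account can afford, test fewer angles rather than lowering each ad set's budget below the threshold.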

4. Give tests enough time to be meaningful

The instinct to cut a test after one bad day is one of the most expensive habits in paid social. A single day of poor results can reflect audience overlap, a platform auction anomaly, or a delay in conversion attribution. Most experienced practitioners recommend leaving tests running for at least three to five days before drawing conclusions. The exception is catastrophic early performance that clearly indicates a structural or technical error.

There is also a deeper reason to stay disciplined: a test you close before it concludes produces no learning. You spend the money and come away with nothing.

5. Use ABO for testing, CBO for scaling

ABO (Ad Set Budget Optimization) gives each ad set a fixed, equal budget. This makes it the correct structure for angle testing because it ensures that each angle gets a fair, equal opportunity to spend. CBO (Campaign Budget Optimization) is valuable for scaling known winners because it automates budget allocation toward your best performers, but it is the wrong structure for testing because the algorithm’s skew introduces a variable you cannot control.

6. The "Snow Globe" Rule: Minimize unnecessary changes

Every time you edit a campaign, you disrupt the algorithm's learning state. One way to think about it: Meta's algorithm performs best when the account is calm and signal is accumulating consistently. Each intervention is a reset. Launch the test, observe for the planned duration, make one decision, then wait again.

How to Structure an Angle Test on Meta

A repeatable angle testing workflow looks like this:

Angle Hypothesis Development: Define two to five distinct angles with clear differentiation in the underlying appeal. Write each one as a single sentence: "This ad works because it speaks to [audience mindset] using [evidence type] framed around [specific problem]."

Minimum Viable Creative: Produce the simplest creative needed to test the angle. Production quality matters much less than angle clarity at this stage. A strong hook with a clear message in your standard format is sufficient.

The Setup: Use an ABO campaign with one ad set per angle. Match budgets exactly. Use the same audience signals, conversion objective, and attribution window for every ad set. Launch all angles simultaneously.

The Evaluation Window: Let the test run for 3–5 days. Aggregate results at the end of the window and evaluate angles against your primary conversion metric, not just CTR.

Validation: When you identify a winning angle, validate it by testing format variations within that angle. Does the winning angle work better as a video, a static image, or a Playable Ad? Once you have both a winning angle and a winning format, that combination becomes your scaling asset.
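The evaluation step can be sketched as a small ranking routine. This is a minimal illustration under stated assumptions: the `AngleResult` record and the sample numbers are made up for the example, and the six-conversion floor (two per day over a three-day window) is an assumption derived from the thresholds above, not a Meta rule. It ranks angles by cost per conversion and sets aside any angle with too few conversions to judge.

```python
from dataclasses import dataclass


@dataclass
class AngleResult:
    # Hypothetical end-of-window totals for one ad set (one angle).
    angle: str
    spend: float
    conversions: int
    clicks: int
    impressions: int

    @property
    def cpa(self):
        return self.spend / self.conversions if self.conversions else float("inf")

    @property
    def ctr(self):
        return self.clicks / self.impressions if self.impressions else 0.0


def rank_angles(results, min_conversions=6):
    """Rank by CPA (lowest first); return under-sampled angles separately."""
    judged = sorted(
        (r for r in results if r.conversions >= min_conversions),
        key=lambda r: r.cpa,
    )
    inconclusive = [r for r in results if r.conversions < min_conversions]
    return judged, inconclusive


results = [
    AngleResult("wedding deadline", 500.0, 8, 400, 20000),
    AngleResult("sustainable habits", 500.0, 12, 300, 20000),
    AngleResult("doctor endorsed", 500.0, 3, 500, 20000),
]
judged, inconclusive = rank_angles(results)
print([r.angle for r in judged])        # ['sustainable habits', 'wedding deadline']
print([r.angle for r in inconclusive])  # ['doctor endorsed']
```

In this made-up data the angle with the highest CTR ("doctor endorsed") has the fewest conversions, which is why the ranking uses the conversion metric rather than CTR.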

The Dimension That Most Testing Workflows Skip

Most teams test at the ad level: they compare finished ads and read the results. Very few teams test at the element level, where the goal is to understand which specific component of a winning ad made it win.

This is where Segwise transforms the process. Its multimodal AI automatically tags every creative dimension across video, audio, image, and text, including the interactive elements of Playable Ads. Once your angle test concludes, Segwise tells you which elements inside that ad are driving results: whether the hook format is responsible, whether a specific CTA structure is the variable, or whether the emotional tone of the audio correlates with higher ROAS.

This transforms the output of an angle test from "Angle B won" to "Angle B won, specifically because problem-first hooks with a transformation-story structure produced 2.3x higher CTR than product-demo hooks."

How Segwise Accelerates the Full Testing Cycle

Beyond post-test analysis, Segwise changes the entire workflow:

Pre-Testing (Competitor Intelligence): Segwise’s competitor tracking (currently supported for Meta) lets you analyze which angles your competitors are running and which ones have longevity. This informs which angles you should prioritize in your own tests.

During Testing (Fatigue Monitoring): If a new angle shows early performance signals that indicate unsustainability, such as a strong early CTR followed by a rapid drop-off, you get a fatigue alert before the budget runs deeper into a misleading spike.

Scaling (AI Generation): When you are ready to scale, Segwise’s AI-powered creative generation produces 15+ data-backed iterations of your winning angle. The system generates variations built around the specific creative variables that drove your test results.

Cross-Network Learning: Segwise connects to Meta alongside 15+ other ad networks including YouTube, Google, TikTok, Snapchat, AppLovin, and more. It unifies this with MMP data from AppsFlyer, Adjust, Branch, and Singular. Winning angles validated on Meta can be instantly adapted for other networks with total performance visibility.

What to do when you have identified your winning angles

Identifying winning angles is the output; systematically exploiting them is the business value.

Once you have a winner, scale horizontally before scaling budgets vertically. Horizontal scaling means taking the winning combination and testing it against new audiences or geographic markets. Vertical scaling means increasing budgets in controlled increments on your proven ABO setup, or migrating the proven combination into a CBO structure for global optimization.

Refresh creative within the winning angle before it fatigues, not after. Segwise's fatigue alerts catch early decline patterns (rising frequency and declining CTR) before the performance cliff. This allows you to introduce the next iteration, briefed from your angle's winning element data, before your ROAS deteriorates.
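To make the pattern concrete, here is a minimal sketch of a rising-frequency, falling-CTR check on daily data. It is not Segwise's detection method, which the article does not describe. The three-day comparison window and the 15% relative CTR drop are assumed thresholds chosen for illustration and should be tuned per account.

```python
from statistics import mean


def shows_fatigue(frequency, ctr, window=3, ctr_drop=0.15):
    """Compare the first and last `window` days of a daily series.

    Flags fatigue when average frequency has risen and average CTR has
    fallen by at least `ctr_drop` (relative). Thresholds are assumptions.
    """
    if len(frequency) != len(ctr):
        raise ValueError("frequency and ctr must cover the same days")
    if len(ctr) < 2 * window:
        return False  # not enough history to compare two windows
    early_freq, late_freq = mean(frequency[:window]), mean(frequency[-window:])
    early_ctr, late_ctr = mean(ctr[:window]), mean(ctr[-window:])
    if early_ctr == 0:
        return False
    relative_drop = (early_ctr - late_ctr) / early_ctr
    return late_freq > early_freq and relative_drop >= ctr_drop


# Made-up daily values for one creative.
fatiguing = shows_fatigue(
    frequency=[1.2, 1.4, 1.6, 2.0, 2.4, 2.9],
    ctr=[0.020, 0.019, 0.018, 0.016, 0.014, 0.012],
)
stable = shows_fatigue(
    frequency=[1.2, 1.3, 1.3, 1.4, 1.4, 1.5],
    ctr=[0.020, 0.020, 0.020, 0.020, 0.020, 0.020],
)
print(fatiguing, stable)  # True False
```

Requiring both conditions avoids flagging a creative whose frequency is climbing while CTR holds steady.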

Conclusion

Scaling on Meta without wasting budget is about building a system where every dollar spent either generates revenue or generates learning.

Angle testing is the mechanism. Creative analytics is the intelligence layer that makes the learning transferable. Segwise gives performance marketing teams the element-level intelligence that closes the gap between testing and creative direction, saving up to 20 hours per week while achieving up to 50% ROAS improvement.

Frequently Asked Questions

What is a creative angle in Meta advertising?

A creative angle is the fundamental appeal or framing strategy behind an ad (e.g., "Convenience" vs "Cost-savings"). It is the underlying reason the ad should resonate with a specific audience, distinct from execution-level variables like colors or fonts.

Should I use ABO or CBO for creative angle testing?

ABO is the right structure for testing because it gives each ad set a fixed, equal budget, allowing for a true apples-to-apples comparison. Use CBO to scale proven winners once the test is complete.

How much budget do I need to properly test a creative angle?

Set each ad set’s daily budget to 2x or 3x your target CPA. This gives the algorithm enough conversion data (2 to 3 conversions per day) to optimize on, so the result reflects a real signal rather than "noise."

How long should I run a creative angle test?

At least three to five days. Cutting tests after 24 hours often leads to "false negatives" due to auction volatility or attribution delays.

How do I know which element of a winning ad drove the result?

Standard Meta reporting cannot tell you "why" an ad won. You need element-level tagging. Segwise’s multimodal AI automatically tags every dimension (video, audio, text, playables) and maps them to performance metrics to identify the specific winning variables.

What causes Meta angle tests to produce misleading results?

The most common causes are mixing formats (which skews spend), testing multiple variables at once, setting budgets too low for optimization, and making "mid-flight" edits to the campaign.

How do I know when to refresh creative within a winning angle?

Watch for leading indicators: rising frequency and a gradual decline in CTR. Segwise monitors all running creatives for these patterns and sends alerts when thresholds are hit, so you can refresh before ROAS drops.
