How to Pre-test Creatives in 2026: 2 Simple Methods That Actually Work

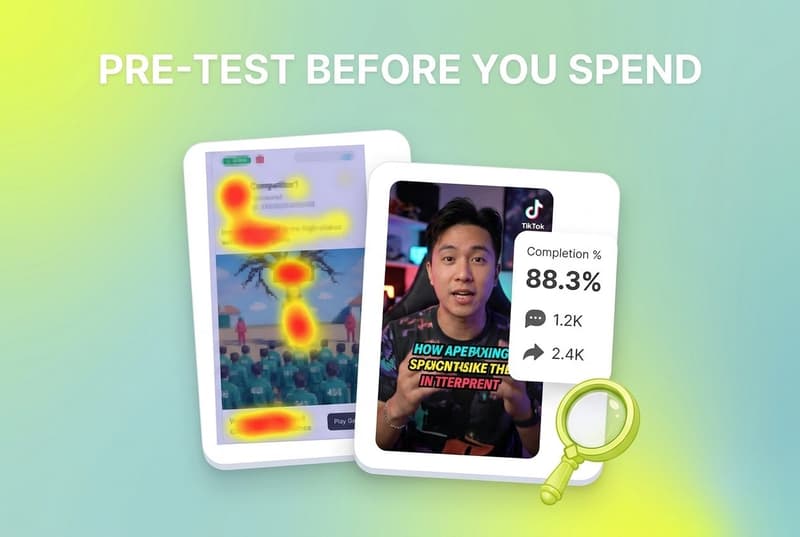

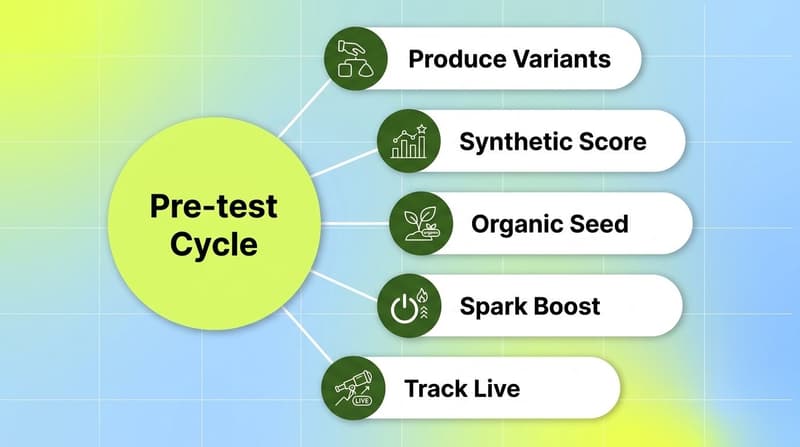

The two creative pre-testing methods that work in 2026 are organic TikTok seeding (via Spark Ads) and AI synthetic pre-testing (via tools like Neurons and VidMob). The first reads real reactions from real users at zero ad spend; the second returns a predicted attention and recall score in seconds. Both work best when their winners feed directly into a creative-level analytics platform like Segwise so live performance can be compared back to the pre-test signal across Meta, TikTok, Google, and 12+ other networks.

Pre-testing creatives used to be a luxury most performance teams skipped. In 2026, with AI-generated UGC slashing production costs and Meta's Andromeda algorithm chewing through 30+ creatives a day, the cost of shipping a dud is no longer the production cost. It is the lost auction signal, the wasted learning phase, and the seven days of underperformance baked into the model before you can pause it.

So before you push spend behind a creative, two methods are worth running first. Both are cheap. Both are fast. Both have catches that are easy to miss.

This guide walks through both, what each can and can't tell you, and how to plug pre-test winners into the rest of your test cycle so the signal does not die at the pre-test stage.


Key takeaways

Organic TikTok seeding via Spark Ads is the closest thing to a free dress rehearsal: post creatives natively, watch a 24 to 72 hour organic window, then boost the ones that earn engagement. Stackmatix reports Spark Ads typically deliver 30 to 40% lower CPA than equivalent dark posts because the social proof carries over.

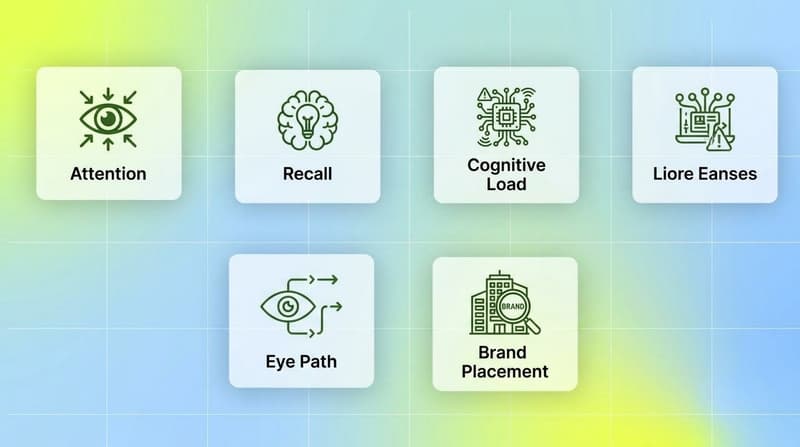

AI synthetic pre-testing tools like Neurons predict attention, cognitive load and brand recall in under a minute using neuroscience-trained models. Neurons reports its winning eye-tracking model is statistically equivalent to running 100 to 150 real participants, with claimed 95%+ accuracy on attention prediction.

Pre-testing matters more in 2026 because creative volume on Meta and TikTok has roughly tripled per advertiser since 2023, and the algorithms now use early-window signals (3-second watch, profile clicks, hook completion) to decide whether your creative ever gets a learning phase at all.

Neither method tells you about ROAS, retention, IAP behavior, or geo-specific performance. Both are filters for the bottom 30 to 50% of obviously weak creatives, not crystal balls for what will scale.

The smart play is sequenced: pre-test for hook strength and attention, ship the survivors, and use creative-level analytics like Segwise to track which pre-test winners actually convert once paid spend kicks in.

Why pre-testing matters more in 2026

A few things changed at once.

First, creative volume exploded. Liftoff's 2025 Mobile Ad Creative Index shows that AI tools are reshaping how creative teams ideate and produce, and top mobile advertisers now ship dozens of variations a week rather than a handful a month. The cost of a single bad creative is small, but the cost of letting Meta's Andromeda model train on the bad ones is large. Andromeda picks early winners and starves the rest of impressions inside 48 hours.

Second, the platforms shifted weight to early-window signals. Megadigital's analysis of TikTok Ads trends notes that hooks under three seconds and gameplay before 1.5 seconds now drive a meaningful chunk of view-through attribution on TikTok and Meta. If your hook does not land in the first beat, the rest of the ad rarely gets seen.

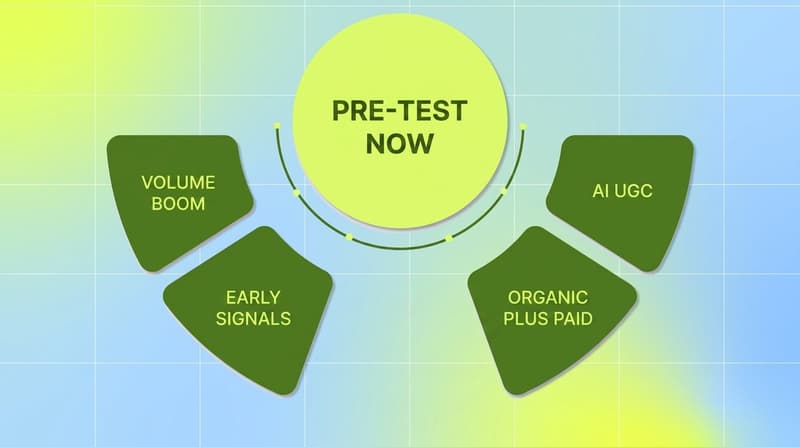

Third, AI UGC tools (Arcads, HeyGen, Sora) collapsed production costs to near zero, which means the bottleneck moved from "can we produce 50 ads" to "which 5 of these 50 should we actually spend behind". That is exactly the question pre-testing tries to answer.

Fourth, organic and paid converged. TikTok's What's Next 2025 Trend Report frames this as the "Creative Catalysts" theme, with brands like Meoky reporting 1.8x higher purchases and 13% higher ROAS when they ran a mix of business-as-usual creative and AI-generated variants together. The mix beats the original. Pre-testing is how you find which AI variants belong in the mix.

The upshot: pre-testing in 2026 is less about avoiding waste and more about earning the algorithm's first-look attention on the right creatives. Two methods do this well.

The pre-test premise - Pre-testing does not predict ROAS. It predicts whether a creative is worth the algorithm's first impressions. That is a much smaller, much more achievable claim, and it is exactly the gap most teams fail to fill in 2026.

The 2 pre-test methods worth running in 2026

Method 1. Organic TikTok seeding: Best for hook validation in real auction conditions

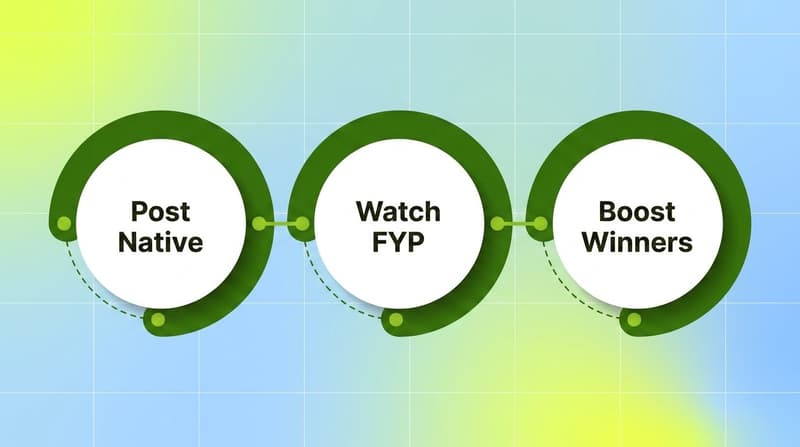

Organic seeding is the practice of posting your creatives to your own (or a creator's) TikTok account, watching how the FYP responds for 24 to 72 hours, then using Spark Ads to boost only the ones that earn organic signal. Stackmatix calls this the "organic-plus-paid flywheel" and frames it as the default 2026 playbook for both DTC and app advertisers.

How the mechanic actually works. You publish a creative as a normal organic post, either from your own TikTok handle (one with at least a small follower base) or from the handle of a creator partner who has authorized you. TikTok seeds the post to a small slice of the FYP based on the account's history. You watch four signals in the first 24 to 72 hours: completion rate, comments, shares and saves, and profile-click rate. If those clear the bar, you authorize the post for Spark Ads using the creator's authorization code, and your paid campaign runs the post natively, with handle, comments, and likes intact. The result is paid distribution that looks like organic content because, at least at the start, it was organic content.

What to measure during the organic window. Four numbers, in order of importance:

Completion rate. Stackmatix and TikTok both flag this as the strongest predictor of how a creative will perform once boosted. A completion rate above the account's median is the green light. A drop after the hook is a red flag, no matter how strong the rest of the video is.

Comment volume and sentiment. Comments are TikTok's truest social proof. A creative with 50 organic comments will almost always outperform one with 5, holding completion rate constant. Sentiment matters too: positive, curious or playful comments carry more weight than confused or critical ones.

Shares and saves. Shares signal that the creative was good enough to forward. Saves signal that it was useful enough to revisit. Both correlate with strong post-boost CVR.

Profile-click rate. A high profile-click rate means the creative made viewers want to know who posted it, which is gold for brand-led campaigns and almost always converts when boosted.
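To make the read concrete, here is a minimal Python sketch of that check, assuming you have collected per-post counts by hand. The `SeedStats` fields, the function names, and the tie-break ordering are illustrative assumptions, not a TikTok API. The only rule taken from the text above is that completion rate should beat the account's median.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SeedStats:
    """Organic counts for one seeded post (field names are hypothetical)."""
    name: str
    views: int
    completions: int
    comments: int
    shares: int
    saves: int
    profile_clicks: int

    def rate(self, count: int) -> float:
        # Per-view rate; returns 0.0 for a post with no views yet.
        return count / self.views if self.views else 0.0


def passes_seed_check(post: SeedStats, account_history: list[float]) -> bool:
    """Green light when completion rate beats the account's median."""
    return post.rate(post.completions) > median(account_history)


def rank_seeds(posts: list[SeedStats], account_history: list[float]) -> list[SeedStats]:
    """Drop posts at or below the median completion rate, then sort the rest.

    Sorting is lexicographic: completion rate first, with comments,
    shares plus saves, and profile clicks only breaking ties (an assumption).
    """
    keep = [p for p in posts if passes_seed_check(p, account_history)]
    return sorted(
        keep,
        key=lambda p: (
            p.rate(p.completions),
            p.rate(p.comments),
            p.rate(p.shares + p.saves),
            p.rate(p.profile_clicks),
        ),
        reverse=True,
    )
```

Here `account_history` would be the completion rates of the account's previous posts. Comment sentiment is not modeled, so that part of the read stays manual.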

Pitfalls worth flagging.

Account history skews the seed. A brand-new TikTok account will not get the same organic distribution as a seasoned one. The signal you read is partly the creative and partly the account. Use creator partners with established audiences when possible.

The 24 to 72 hour window is narrow. Wait too long and the post grows stale, the algorithm de-ranks it, and a Spark Ad boost lifts a creative whose organic momentum already died.

Comment-section swings. Comments shape future viewers' perception. One viral negative comment can poison a creative that would otherwise have won. Read the room before boosting.

Geo and language fragmentation. A creative that wins on US-FYP can flop on UK or AU FYP, and vice versa. Seed in your target market or you are pre-testing the wrong audience.

No app install signal. Organic TikTok engagement does not predict CPI or D1 retention. It predicts hook strength and engagement quality, which is necessary but not sufficient.

When you should try it. Try organic seeding if you are a DTC brand, app, or game with at least one TikTok handle (your own or a creator partner's), if your media plan includes any TikTok or Meta UGC spend, and if you want a real-world filter before committing budget. It works especially well for AI-generated UGC, where you have 10 cheap variants and need to know which 2 to amplify. Hyperfocus and other agencies recommend a 60-30-10 budget split once you find winners: 60% on Spark Ads of proven organic, 30% on new test concepts, 10% on experiments.
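As a quick worked example of that 60-30-10 split, the sketch below divides a budget in whole cents so the three buckets always add back up to the total. The bucket names are illustrative assumptions.

```python
def split_budget(total_cents: int) -> dict[str, int]:
    """Split a budget 60/30/10 using integer cents to avoid rounding drift."""
    if total_cents < 0:
        raise ValueError("budget cannot be negative")
    spark_ads = total_cents * 60 // 100
    new_concepts = total_cents * 30 // 100
    # Experiments take the remainder, so the parts always sum to the total.
    experiments = total_cents - spark_ads - new_concepts
    return {
        "spark_ads_proven_organic": spark_ads,
        "new_test_concepts": new_concepts,
        "experiments": experiments,
    }


# A 10,000.00 budget expressed in cents.
print(split_budget(1_000_000))
```

For a 10,000.00 budget this gives 6,000.00 for Spark Ads on proven organic posts, 3,000.00 for new test concepts, and 1,000.00 for experiments.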

Limitations. Organic seeding only works reliably on TikTok in 2026. The closest analog on Meta is the "boost a Reel that performed well" workflow, but Meta's organic reach for brand pages is far weaker, and the predictive value is correspondingly lower. Reddit and YouTube Shorts have similar dynamics but smaller audiences for most performance categories. If your media mix is 80% Meta and 20% TikTok, organic seeding will only validate the TikTok portion.

Always seed at least three variants of the same hook. The differences between them surface clear preference signals; a single creative tells you nothing comparative.

Method 2. AI synthetic pre-testing: Best for fast, cheap, pre-production filtering

AI synthetic pre-testing tools predict creative performance before you ever post it. The strongest of them, Neurons, uses neuroscience-trained models built on what Neurons describes as one of the world's largest eye-tracking databases. Upload a video or static image, get a heatmap of predicted attention, a cognitive load score, a brand recall estimate and an engagement prediction in under a minute. VidMob runs an analogous play on the post-launch side, decomposing creatives into element-level scores (hook, CTA placement, color contrast, voiceover energy) and benchmarking against past performance.

How synthetic scoring works. These tools train on labeled creative data: real eye-tracking studies, click-through results from prior campaigns, brand-recall surveys. The model learns which compositional patterns (eye lines, contrast, motion direction, text density) correlate with attention and recall. When you upload a new creative, it predicts where viewers will look first, where attention drops, what they will remember, and how cognitively heavy the ad is. Neurons reports 95%+ accuracy versus actual eye-tracking studies on its attention model, validated against industry-specific stimuli across food and beverage, financial services, telecom, and retail.

Where the synthetic score is useful.

Pre-production gut checks. Run a storyboard or animatic through the model before you film. Cheap to fix; expensive to re-shoot.

Variant ranking. Score 20 cuts of the same ad in five minutes and pick the top 3 to actually post. Saves the team from running a horse-race they don't have media budget for.

Brand-element placement. Heatmaps tell you whether the logo, product, or CTA is in the high-attention zone or the dead-attention zone.

Compliance pre-check. For regulated categories (financial services, gaming, alcohol) the synthetic test catches obvious legibility or focus issues before legal review.
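For the brand-element check, one rough way to quantify "high-attention zone" is the share of predicted attention that falls inside the element's bounding box. The sketch assumes you have the heatmap as a plain grid of non-negative numbers and the box in grid coordinates. That export format is hypothetical and not a documented feature of any tool named here.

```python
def attention_share(
    heatmap: list[list[float]],
    box: tuple[int, int, int, int],
) -> float:
    """Fraction of total predicted attention inside a bounding box.

    `box` is (top, left, bottom, right) in grid cells, with bottom and
    right exclusive, matching Python slice conventions.
    """
    top, left, bottom, right = box
    total = sum(sum(row) for row in heatmap)
    if total == 0:
        return 0.0  # An empty heatmap has no attention to share.
    inside = sum(sum(row[left:right]) for row in heatmap[top:bottom])
    return inside / total


# Toy 4x4 heatmap with attention concentrated in the middle rows.
toy_heatmap = [
    [0, 0, 0, 0],
    [0, 4, 4, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
product_box = (1, 1, 2, 3)  # Sits on the hot cells.
logo_box = (3, 0, 4, 4)     # Sits on the bottom row.
```

In this toy grid the product box captures most of the predicted attention and the logo box captures none, which is the kind of gap the heatmap is meant to expose before you film.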

When to trust the score (and when not to). Trust the synthetic score for what it actually measures: attention, gaze, recall, cognitive load. These map well to top-of-funnel performance (CTR, view-through, ad recall lift). Distrust it for anything bottom-of-funnel: ROAS, install-to-purchase, LTV, retention, geo-specific cultural fit. The model has never seen your audience, your offer, or your seasonality, so it cannot predict revenue. It can only predict whether a viewer's eyes will land where you want them to.

A second caveat: synthetic models are trained on past data. A creative that breaks a pattern (a deliberately weird hook, a disorienting cut) may score badly while still winning in the wild because novelty itself drives attention. The score punishes pattern-breaks. Sometimes pattern-breaks are exactly what you want.

Pitfalls worth flagging.

Score inflation across categories. Most tools train on broad creative datasets. The benchmark for a "high attention" mobile gaming ad is different from the benchmark for a SaaS ad. If the tool does not let you select an industry, treat the score as approximate.

Static-vs-video gap. Static and storyboard scoring is generally more reliable than full-video scoring because predicting attention frame-by-frame across cuts is harder than predicting it on a single image.

No brand context. The model does not know your brand or audience. A creative that scores 9.5 may flop because it does not look like your brand at all, even if it grabs attention.

License lock-in. Most synthetic testing tools price by seat or by asset volume. The unit economics break for teams testing 50+ assets per week.

The accuracy claim is for attention, not conversion. Vendor 95% accuracy claims refer to eye-tracking equivalence, not ROAS prediction. Read what is actually being measured.

When you should try it. Try synthetic pre-testing if you are running mostly Meta or display formats (where organic seeding does not apply), if you produce in volume and need to pre-rank variants, if you have a brand team that cares about logo and product placement, or if you ship in regulated categories where one bad creative triggers a takedown. It is also useful when you do not have a TikTok presence with enough history to seed organically.

Limitations. Synthetic scoring will never tell you whether a hook is funny, whether a script is on-brand, or whether your audience finds the framing tone-deaf. It will tell you whether the technical fundamentals (attention, contrast, focal point, recall) are intact. Treat it as a fundamentals check, not a creative judgment.

Once your pre-test winners go live, watch them at the creative level - Pre-testing tells you which creatives deserve paid impressions. Creative-level analytics tools like Segwise tell you which ones actually earn the spend back across Meta, TikTok, Google, and 12+ other networks.

Comparison: organic seeding vs synthetic pre-testing

The two pre-test methods filter which creatives are worth shipping. Segwise is the layer that tracks how shipped creatives actually perform once live, surfacing tag-level winners and fatigue across all your networks. They are complements, not substitutes.

What pre-testing can't tell you

This is the section most pre-testing posts skip, so it is worth dwelling on.

Pre-tests do not predict ROAS. They predict whether a creative has the basic fundamentals (hook, attention, recall, organic resonance) to be worth running. They do not know your offer, your customer LTV, your D7 retention curve, or your cost-per-install ceiling. A creative can pre-test beautifully and still lose money. A creative can pre-test poorly and still win because of a hook the model could not parse.

Pre-tests do not predict fatigue. A creative that wins in week one almost always loses by week three on Meta or TikTok. Pre-tests give you a launch signal; they do not give you a refresh cadence. For that, you need creative-level fatigue tracking once the creative is live.

Pre-tests do not predict cross-network behavior. A creative that wins organically on TikTok may underperform when reformatted as a Meta Reel, AppLovin interstitial, or YouTube Short. The format conversion changes the signal.

Pre-tests do not predict cohort effects. A creative that hits well in Tier 1 English-speaking markets can flop in non-English geos, in older demos, or in segments your media plan over-indexes against. Synthetic models trained on US datasets are particularly weak here.

What pre-tests can do is filter out the bottom 30 to 50% of weak creatives, which is exactly what 2026 budgets need: a fast, cheap "is this even worth running" gate before paid spend gets involved.

How to fold pre-tests into the broader test cycle

A pre-test is only as useful as the system it feeds into. Here is the cycle that works in 2026:

Brief and produce in volume. Use AI UGC tools, in-house production, or creator partners to produce 15 to 30 variants of the same brief. Production cost should not gate the pre-test.

Synthetic-test the static thumbnails and storyboards first. Cut the obviously weak attention scores. Save 10 to 15 for the next stage.

Organic-seed the top 5 to 10. Post natively on TikTok (or paid creator handles), watch 24 to 72 hours of organic FYP signal, kill the bottom half.

Spark Ads or paid launch the survivors. Push the top 3 to 5 into Spark Ads on TikTok and / or paid Meta, with structured naming so you can track them.

Tag and monitor at the creative level. Once spend is flowing, you need creative-level analytics to track which pre-test winners actually convert. This is where tools like Segwise's creative analytics and asset clustering matter, because they show whether the pre-test signal predicted the live signal or whether you need to recalibrate the pre-test process for next round.

Catch fatigue early. A pre-test winner that fatigues in week two is still a win. The cycle is: pre-test, ship, track, refresh, repeat. Fatigue tracking closes the loop so the next round of pre-tests can target the patterns that survived longest.

Feed live performance back into the pre-test process. If the synthetic score said 8/10 but ROAS said 1.4x and another creative scored 6/10 but ROAS came in at 3.2x, your pre-test process is mis-calibrated. Use real performance data from live campaigns (clean tag-level data, not aggregated campaign reports) to retrain the human judgment around your pre-test scores.
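One way to check calibration is a rank correlation between pre-test scores and live ROAS. The sketch below is an assumption about how you might do it, not a method any vendor prescribes. It computes Spearman's rank correlation in plain Python, with `scores` and `roas` as hypothetical lists you would export yourself.

```python
def ranks(values: list[float]) -> list[float]:
    """Average ranks (1 = smallest); tied values share the same rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; raises if either series has no variation."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("need variation in both series")
    return cov / (var_x * var_y) ** 0.5


def spearman(scores: list[float], roas: list[float]) -> float:
    """Spearman rank correlation between pre-test scores and live ROAS."""
    if len(scores) != len(roas) or len(scores) < 2:
        raise ValueError("need two equal-length series with 2+ creatives")
    return pearson(ranks(scores), ranks(roas))


# The two creatives from the example above: 8/10 at 1.4x, 6/10 at 3.2x.
print(spearman([8, 6], [1.4, 3.2]))  # -1.0
```

A value near +1 means the pre-test ranked creatives in roughly the same order as live ROAS. A value near zero or below suggests the process needs recalibrating. With only a handful of creatives the number is noisy, so treat it as a prompt for review rather than a verdict.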

This is the loop the strongest performance teams in 2026 run. Pre-tests on the front end. Live creative analytics in the middle. Fatigue tracking and asset clustering on the back end. Each stage feeds the next.
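The front half of that loop can be sketched as two successive cuts. This is a hypothetical illustration: the score dictionaries stand in for whatever your synthetic tool and organic window report, and the default stage size is picked from the ranges above, not a fixed rule.

```python
def keep_top(names: list[str], score: dict[str, float], n: int) -> list[str]:
    """Return the n highest-scoring names, best first."""
    return sorted(names, key=lambda name: score[name], reverse=True)[:n]


def pretest_funnel(
    variants: list[str],
    synthetic: dict[str, float],
    organic: dict[str, float],
    seed_count: int = 6,
) -> list[str]:
    """Synthetic cut first, then organic seeding, then drop the bottom half.

    `synthetic` needs a score for every variant. `organic` only needs
    a score for the variants that actually get seeded.
    """
    seeded = keep_top(variants, synthetic, seed_count)
    survivors = max(1, len(seeded) // 2)  # Kill the bottom half, keep at least one.
    return keep_top(seeded, organic, survivors)
```

Using separate score dictionaries per stage keeps the two signals apart, which makes it easier to compare each one against live results later.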

How to choose which pre-test method to use

The right pre-test method depends on your media mix, your production cadence, and your team's resources. Both methods are cheap enough that running both is often the right answer. Synthetic for speed, organic for real-world validation.

If you spend most on TikTok and have a creator-led content engine, organic TikTok seeding via Spark Ads is the right starting point. The signal is real and the path to paid is built into the platform. Synthetic scoring is a useful supplementary filter for pre-production rounds.

If you spend most on Meta, Display, or in-app interstitials, AI synthetic pre-testing (Neurons, VidMob, similar) is the right starting point. Organic seeding does not apply outside TikTok, so synthetic is the only fast pre-launch gate available.

If you produce 50+ creatives a week (AI UGC pipelines, gaming UA at scale), use both, sequenced. Synthetic test the storyboards to cut volume in half. Organic seed the survivors that have a TikTok play. Spark Ads or paid launch the rest. Pair with Segwise's creative tagging for live monitoring once paid spend turns on.

If you ship in a regulated category (financial services, alcohol, gaming, health), synthetic pre-testing is the safer first stop because compliance issues are easier to spot in heatmaps than in organic comments. Once cleared, organic seeding catches the audience-perception issues that compliance review can't see.

If you are a small DTC brand without a TikTok presence, synthetic is the only realistic pre-test option short term. Build a TikTok handle in parallel so organic seeding becomes available within a quarter.

If you are an agency managing multiple clients, offer both as a standard pre-test layer. The unit economics work because synthetic tools price by asset and organic seeding has no marginal cost. The differentiator is the analytics layer downstream, where Segwise's growth-agency workflow becomes the scaling tool once pre-test winners go live.
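The rules above can be condensed into a small decision helper. This is one hedged reading of them: the parameter names, the 50% TikTok threshold for "spend most", and the order in which the rules are checked are assumptions, so adjust them to your own plan.

```python
SYNTHETIC = "synthetic"
ORGANIC = "organic_seeding"


def recommend_methods(
    tiktok_share: float,
    weekly_creatives: int,
    regulated: bool = False,
    has_tiktok_handle: bool = True,
) -> list[str]:
    """Return pre-test methods in the order to run them.

    tiktok_share is the fraction of media spend on TikTok (0.0 to 1.0).
    """
    if not has_tiktok_handle:
        # No handle with history means nothing to seed from yet.
        return [SYNTHETIC]
    if regulated or weekly_creatives >= 50:
        # Regulated categories and high-volume pipelines start synthetic.
        return [SYNTHETIC, ORGANIC]
    if tiktok_share > 0.5:
        # TikTok-led plans start with seeding, synthetic as a supplement.
        return [ORGANIC, SYNTHETIC]
    # Meta, display, or in-app heavy plans rely on synthetic scoring.
    return [SYNTHETIC]
```

For instance, a TikTok-led DTC brand shipping 10 creatives a week gets organic seeding first, while a gaming UA team shipping 60 a week gets synthetic scoring first to cut volume before seeding.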

Bottom line

Pre-testing in 2026 is not a luxury workflow; it is a gate every performance team needs before paid spend hits the algorithm. Organic TikTok seeding via Spark Ads gives you a 24 to 72 hour real-world filter at zero ad cost, which is the closest thing performance marketing has to a free dress rehearsal. AI synthetic pre-testing through Neurons-class tools gives you a sub-minute attention and recall score, which is the cheapest way to cut the bottom half of a production batch before media. Neither tells you about ROAS, retention, or fatigue, which is why both methods are most useful when their winners feed into live creative-level analytics like Segwise the moment paid spend turns on.

Frequently Asked Questions

What is the best way to pre-test ad creatives in 2026?

The two most reliable methods in 2026 are organic TikTok seeding via Spark Ads (post natively, watch 24 to 72 hours of FYP signal, boost the winners) and AI synthetic pre-testing (Neurons, VidMob and similar tools predict attention and recall before you launch). Most teams use them in sequence: synthetic for fast variant ranking, organic seeding for real-world hook validation, and tools like Segwise to track which pre-test winners actually convert once paid spend turns on.

How do I pre-test creatives without spending ad budget?

Organic TikTok seeding gives you the closest thing to a zero-spend pre-test. You post the creative natively from your brand or creator handle, let the FYP serve it for 24 to 72 hours, then read four signals: completion rate, comment volume and sentiment, share and save count, and profile-click rate. Creatives that clear those bars get authorized for Spark Ads. Synthetic tools like Neurons offer a free trial tier as well. Once the creatives are live, Segwise tracks performance at the creative level alongside competitors like VidMob.

How accurate are AI synthetic pre-testing tools?

Vendor-reported accuracy is high for what synthetic tools actually measure. Neurons reports 95%+ accuracy on attention prediction versus real eye-tracking studies, validated across multiple industries. The catch is that "accuracy" refers to attention, gaze, and recall, not ROAS or LTV. The score predicts whether viewers will see the right thing, not whether they will buy. For revenue-side validation you need live creative analytics platforms like Segwise, VidMob, or Dragonfly AI once the creative is in market.

What's the difference between organic seeding and AI synthetic pre-testing?

Organic seeding tests creatives with real users in real auction conditions on TikTok and reads behavioral signals (completion, comments, shares). AI synthetic pre-testing scores creatives in seconds without any users, using neuroscience-trained models that predict attention and recall. Organic is slower (days) but reads real reactions. Synthetic is instant but predicts only the fundamentals. Most performance teams in 2026 use both as sequential filters before paid spend, with creative-level tools like Segwise tracking which pre-test winners actually convert in market.

Best pre-testing approach for mobile gaming UA managers?

Mobile gaming UA in 2026 ships dozens of creatives per week, so the right approach is sequenced. First, use synthetic pre-testing on storyboards or static cuts to drop the obviously weak attention scores. Second, organic-seed the top survivors on TikTok where gameplay-first hooks earn fast feedback. Third, run survivors through Spark Ads on TikTok and parallel paid tests on Meta, AppLovin, and Mintegral. Fourth, monitor at the creative level with Segwise's creative tagging, which is the only platform that tags playable ads and surfaces which gameplay elements drive installs across 15+ networks.

Best pre-testing approach for DTC brands?

DTC brands typically have a smaller test budget and a stronger TikTok presence (or creator network) than mobile games. Organic TikTok seeding is the lower-cost first filter; synthetic scoring catches placement and contrast issues before launch. Once creatives are running, Segwise's creative analytics for DTC tracks tag-level performance across Meta and TikTok and surfaces which hooks, products, and offer framings actually drove revenue, alongside competitors like VidMob and Pencil.

Best pre-testing approach for performance marketing agencies?

Agencies should standardize a two-stage pre-test layer across all clients: synthetic scoring on every storyboard and static cut, organic seeding on every TikTok-eligible creative. Both have low marginal cost and easy reporting. The differentiator is the live analytics layer once creatives ship. Segwise's agency workflow supports multi-brand and multi-client portfolio reporting, with creative-level tagging across Meta, TikTok, Google, and the major MMPs, which makes pre-test signal versus live performance comparisons easy to do at scale.

Can I pre-test creatives for Meta the same way I pre-test for TikTok?

Not directly. Meta's organic reach for brand pages is far weaker than TikTok's FYP, so organic seeding is rarely a valid pre-test on Meta alone. The closest analog is boosting a Reel that earned organic engagement, but the volume of usable signal is much lower. For Meta-heavy media plans, AI synthetic pre-testing through Neurons or VidMob is the more reliable pre-launch filter, with creative-level tools like Segwise picking up live performance once the creative is in the auction.

How long should an organic seeding window run before I boost a creative?

24 to 72 hours is the standard window. Read the four key signals (completion rate, comments, shares, profile-clicks) within the first 48 hours and decide on the 72-hour mark. Going longer risks the post going stale and the algorithm de-ranking it before you can boost. Going shorter risks reading noise. Once you commit to Spark Ads, Segwise's creative analytics lets you compare the boosted post's tag-level performance against your other live creatives across all networks, alongside benchmarks from tools like VidMob.
