Creative Analytics for Meta Ads: Why ROAS Is Only Half the Story

Meta is still the largest performance advertising platform in the world. The targeting is sophisticated, the algorithm is powerful, and the sheer scale of the user base makes it essential for most growth teams. Yet most advertisers running on Meta are measuring their way to average results.

ROAS tells you whether your ads generated profitable returns. It does not tell you what made those returns possible. Was it the hook? The creative format? The offer framing? The visual style? Without that information, every new creative brief starts from scratch, built on gut feel and historical averages rather than evidence.

This is the gap creative analytics fills. And it's the gap that separates teams with compounding creative advantage from teams perpetually starting over.

Key takeaways

ROAS measures campaign profitability but can't identify which creative elements drove it

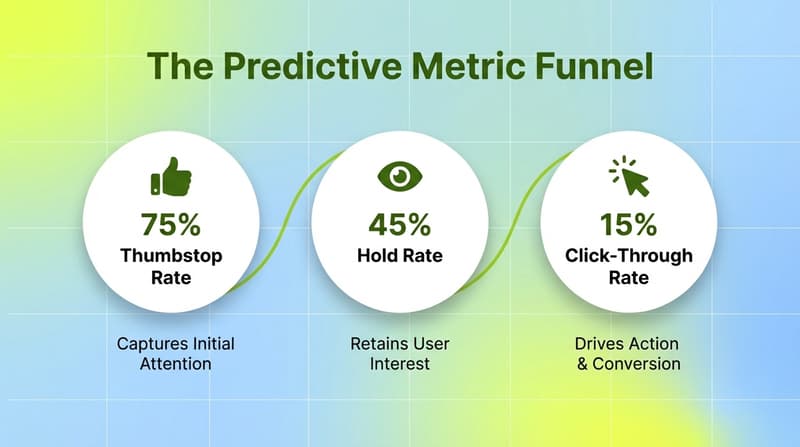

Meta's algorithm uses thumbstop rate, hold rate, and CTR as quality signals to score creatives; these predict performance before revenue data is available

Frequency is the leading indicator of creative fatigue; tracking it proactively lets you rotate creative before performance collapses and budget is burned

Dynamic creative testing requires element-level analysis to be actionable; ad-level comparisons tell you what won, not why

Manual reporting is the bottleneck in most creative analytics workflows, scaling poorly with creative volume

Cross-channel attribution requires joining Meta data with CRM and MMP data; native tools don't do this

Why ROAS isn't enough for creative decisions

ROAS answers the budget question. If you want to know whether to scale a campaign or cut it, ROAS is the right input. But it answers that question retrospectively, after impressions have been served, clicks have happened, and conversions have been attributed.

For creative decisions, you need to understand what inside the ad produced those results. And that requires metrics that operate at the element level, not the campaign level.

Consider a scenario most teams have run into: two video ads with similar spend, one with 4x ROAS and one with 1.5x. The obvious conclusion is that the first creative is better. But "better" is doing a lot of work here. Was it better because the hook stopped the scroll? Because the offer was stronger? Because the audience segment this ad served happened to be higher intent? Because the landing page experience aligned better with this creative's messaging?

ROAS doesn't decompose that way. Creative analytics does.

The Meta metrics that actually predict creative performance

Thumbstop rate

Thumbstop rate measures what percentage of people stopped scrolling and held your ad in view for at least three seconds. It's the earliest signal of creative quality because it captures audience response before any click or conversion decision happens.

A low thumbstop rate means your opening frame is losing the audience before the message lands. Something in the first two to three seconds (the visual, the first line of text, the initial scene) isn't compelling enough to interrupt the scroll. No optimization downstream of that fixes a creative that loses people before they've seen it.

Meta's algorithm uses thumbstop rate as one of its quality signals when determining which creatives earn more auction impressions at lower CPMs. Higher-quality creative engagement translates directly to more efficient ad spend.

Hold rate

Hold rate measures the percentage of people who watched your ad for at least 15 seconds. Where thumbstop rate measures initial capture, hold rate measures sustained engagement. An ad can have a strong thumbstop rate but a poor hold rate: you're capturing attention but not keeping it. That narrows the problem to the five to ten seconds immediately following the opening, not the hook itself.

In combination, these two metrics are diagnostic tools. High thumbstop, low hold: fix the middle of the ad. Low thumbstop: fix the opening. Both strong: the creative is holding audience attention, so weak downstream conversion points to a messaging or landing page issue.
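The diagnostic logic above can be sketched in a few lines of Python. This is a minimal illustration, not a standard formula: the `VideoStats` fields, the two cut-off values, and the choice to compute hold rate as a share of three-second viewers (rather than of all impressions) are assumptions made for the example and should be calibrated against your own account.

```python
from dataclasses import dataclass


@dataclass
class VideoStats:
    impressions: int
    views_3s: int   # plays of at least 3 seconds
    views_15s: int  # plays of at least 15 seconds


# Hypothetical cut-offs; replace with benchmarks from your own account.
THUMBSTOP_MIN = 0.25
HOLD_MIN = 0.30


def thumbstop_rate(s: VideoStats) -> float:
    """Share of impressions that became a 3-second view."""
    return s.views_3s / s.impressions if s.impressions else 0.0


def hold_rate(s: VideoStats) -> float:
    """Assumption: share of 3-second viewers who stayed to 15 seconds."""
    return s.views_15s / s.views_3s if s.views_3s else 0.0


def diagnose(s: VideoStats) -> str:
    """Map the two rates to the fix suggested in the text."""
    if thumbstop_rate(s) < THUMBSTOP_MIN:
        return "fix the opening"
    if hold_rate(s) < HOLD_MIN:
        return "fix the middle"
    return "attention is fine; check messaging or landing page"
```

For example, `diagnose(VideoStats(impressions=10_000, views_3s=3_000, views_15s=600))` clears the thumbstop cut-off but not the hold cut-off, so it returns "fix the middle".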

CTR as a downstream signal

Click-through rate captures audience members who were engaged enough to take action after watching your ad. It's an important metric, but it's a lagging one: by the time you're measuring CTR, the audience has already been pre-filtered by your hook and hold quality.

CTR is most useful when analyzed alongside conversion rate from your landing page or app store. A high CTR with poor conversion rate often means the creative is generating curiosity clicks that don't reflect real purchase intent, which signals a messaging alignment problem between ad and destination.

Frequency and fatigue

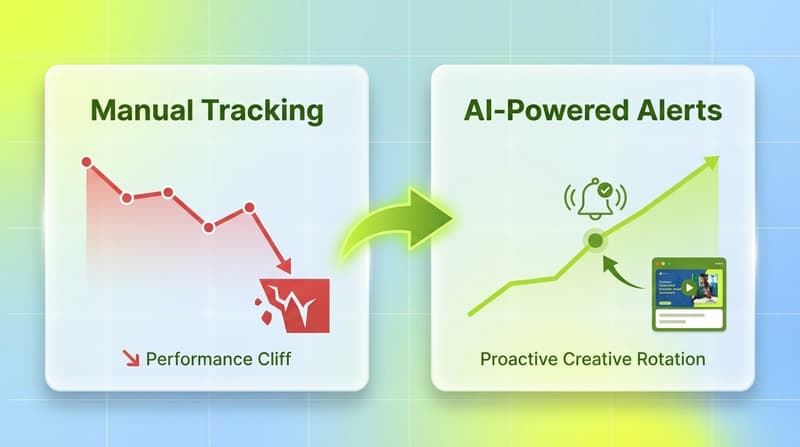

Frequency is average impressions per unique user. It's the primary leading indicator of creative fatigue. As an audience sees the same ad repeatedly, engagement drops and cost per conversion rises. The performance cliff, when CTR drops sharply and ROAS deteriorates, usually follows sustained high frequency.

Most teams catch fatigue after it has already damaged performance. Monitoring frequency proactively and setting thresholds for when to rotate creative prevent significant budget waste.
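As a rough sketch of what a frequency threshold might look like in practice, the snippet below computes frequency as impressions divided by reach and flags a creative once it crosses a cap. The cap of 3.0 is purely illustrative, not a recommended value; the right threshold depends on your category and audience size.

```python
FREQUENCY_CAP = 3.0  # hypothetical threshold; tune per account and category


def frequency(impressions: int, reach: int) -> float:
    """Average impressions per unique user reached."""
    return impressions / reach if reach else 0.0


def needs_rotation(impressions: int, reach: int, cap: float = FREQUENCY_CAP) -> bool:
    """True once average frequency reaches the rotation threshold."""
    return frequency(impressions, reach) >= cap
```

With 45,000 impressions over a reach of 12,000, frequency is 3.75, so `needs_rotation(45_000, 12_000)` returns `True` under this cap.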

Cost per acquisition and downstream revenue

CPA tells you the cost of a conversion, but like ROAS, it's most useful when joined with CRM or MMP data. Meta's attribution model will give you conversions attributed to Meta. Your CRM or MMP will give you what those conversions actually became in revenue terms. Connecting these data sources gives you creative performance evaluated against actual business outcomes, not just platform-reported conversions.

The structural challenges with Meta creative analytics

Dynamic creative testing produces ad-level results, not element-level

Meta's Advantage+ and dynamic creative features enable testing multiple headlines, visuals, and CTAs simultaneously. But native reporting shows you which ad combination performed best, not which individual element was responsible. You can see that variation B outperformed variation A, but not whether the headline drove the difference or the image did.

Getting element-level signal from dynamic creative requires either running fully isolated single-variable tests (expensive, slow) or using a creative analytics layer that can tag and attribute performance to specific creative components.
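To make the idea of attributing performance to components concrete, here is a minimal sketch that rolls ad-level spend and revenue up to the tag level. The record layout (`tags`, `spend`, `revenue`) and the `key:value` tag format are assumptions for illustration. Note that this kind of roll-up is correlational: when tags always appear together, it cannot separate their effects, which is the case isolated tests are for.

```python
from collections import defaultdict


def roas_by_tag(ads: list[dict]) -> dict[str, float]:
    """Spend-weighted ROAS for every tag, pooled across the ads that carry it."""
    spend: dict[str, float] = defaultdict(float)
    revenue: dict[str, float] = defaultdict(float)
    for ad in ads:
        for tag in ad["tags"]:
            spend[tag] += ad["spend"]
            revenue[tag] += ad["revenue"]
    return {tag: revenue[tag] / spend[tag] for tag in spend if spend[tag] > 0}


# Hypothetical ads; the tags would come from manual or automated tagging.
ads = [
    {"tags": ["hook:question", "cta:urgency"], "spend": 100.0, "revenue": 400.0},
    {"tags": ["hook:question", "cta:benefit"], "spend": 100.0, "revenue": 300.0},
    {"tags": ["hook:statement", "cta:benefit"], "spend": 200.0, "revenue": 300.0},
]
tag_roas = roas_by_tag(ads)
```

In this made-up data, ads with a question hook pool to a ROAS of 3.5 against 1.5 for the statement hook, a pattern that ad-level comparison alone would not surface.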

Siloed data breaks attribution

Meta data sits in Ads Manager. Revenue data sits in your CRM. App event data sits in your MMP. Without joining these, you're making creative decisions based on Meta's own attribution, which systematically overattributes conversion credit to itself. Getting to accurate ROAS requires connecting Meta ad spend to actual revenue outcomes from external data sources.

For mobile apps, this means MMP integration: connecting Meta spend to install data, in-app events, and downstream retention from AppsFlyer, Adjust, Branch, or Singular. For DTC brands, it means connecting Meta data to Shopify or CRM purchase records.
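The join described above can be sketched as follows. This assumes each CRM order carries the `ad_id` that drove it (for instance, captured from URL parameters at checkout), which is an assumption about your tracking setup rather than something Meta or a CRM provides by default. All field names here are hypothetical.

```python
def roas_comparison(meta_rows: list[dict], crm_orders: list[dict]) -> dict[str, dict]:
    """Compare platform-reported ROAS with ROAS computed from CRM revenue."""
    # Sum CRM revenue per ad, skipping orders with no ad attribution.
    crm_revenue: dict[str, float] = {}
    for order in crm_orders:
        ad_id = order.get("ad_id")  # hypothetical field
        if ad_id is None:
            continue
        crm_revenue[ad_id] = crm_revenue.get(ad_id, 0.0) + order["revenue"]

    report: dict[str, dict] = {}
    for row in meta_rows:
        spend = row["spend"]
        if spend <= 0:
            continue  # ROAS is undefined without spend
        report[row["ad_id"]] = {
            "platform_roas": row["platform_revenue"] / spend,
            "crm_roas": crm_revenue.get(row["ad_id"], 0.0) / spend,
        }
    return report


# Illustrative numbers only.
meta_rows = [{"ad_id": "a1", "spend": 100.0, "platform_revenue": 400.0}]
crm_orders = [
    {"ad_id": "a1", "revenue": 150.0},
    {"ad_id": "a1", "revenue": 100.0},
    {"ad_id": None, "revenue": 80.0},
]
report = roas_comparison(meta_rows, crm_orders)
```

Here the platform reports a ROAS of 4.0 for ad `a1` while CRM revenue supports 2.5. The gap between the two columns is the number worth watching when deciding what to scale.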

Manual reporting doesn't scale with creative volume

As the number of active creatives grows, manual export and spreadsheet analysis becomes the bottleneck. Teams spend significant time preparing data rather than analyzing it. Version drift, formula errors, and inconsistent data handling compound over time into unreliable creative performance records. Automated data pipelines replace this overhead, but the more important shift is from reporting infrastructure to creative intelligence infrastructure.

What a proper Meta creative analytics stack looks like

Unified cross-platform data

The foundation is bringing Meta data together with your other ad networks and MMP data in a single environment. Most growth teams are running Meta alongside TikTok, Google, Snapchat, and network partners. Creative patterns that emerge on Meta are often testable across other networks, but only if you have cross-network data in a common format.

Segwise connects to 15+ ad networks and MMPs with no-code integrations, including Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, IronSource, and more, plus MMP integrations with AppsFlyer, Adjust, Branch, and Singular. All creative and performance data sits in one place, so you can see which Meta creative patterns translate to other networks and which don't.

Creative-element tagging at scale

This is where most analytics stacks still fall short, and where Segwise is fundamentally different from standard reporting tools.

Segwise uses multimodal AI to automatically tag every Meta creative across four dimensions:

Video: visual elements, scene changes, on-screen text, product shots, visual styles

Audio: hook lines, voiceover style, background music type, audio emotional tone

Image: colors, compositions, characters, products, emotions, visual styles

Text: headlines, CTAs, benefit statements, offer framing

Every tag maps automatically to your performance metrics: ROAS, CTR, installs, conversions, and custom events. The output is a system that can answer: "Which hook styles produce the highest thumbstop rates for this audience? Do benefit-focused CTAs outperform urgency-based ones? Which visual formats are correlating with above-benchmark ROAS right now?"

Those answers feed directly into creative briefs. Instead of briefing your team to "make something that resonates," you're briefing them with specific performance-validated creative variables to build around.

Fatigue detection before the performance cliff

Segwise monitors all running Meta creatives for performance decline patterns and sends configurable alerts before ROAS tanks. You set the thresholds (for example, a 20% ROAS decline over 7 days, or a spend share drop below a set level), and the system flags issues aligned to your business logic. Teams using this catch fatigue early enough to rotate creative before significant budget is wasted.
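To illustrate what a rule like "20% ROAS decline over 7 days" could mean in code, here is one possible reading: compare the most recent 7-day window against the 7 days before it. This is a generic sketch and not a description of how Segwise implements its alerts; the function names and the window comparison are assumptions.

```python
def window_roas(days: list[tuple[float, float]]) -> float:
    """Pooled ROAS over a list of (spend, revenue) days."""
    spend = sum(s for s, _ in days)
    revenue = sum(r for _, r in days)
    return revenue / spend if spend else 0.0


def roas_decline(daily: list[tuple[float, float]], window: int = 7):
    """Fractional drop of the latest window vs. the one before it.

    `daily` is ordered oldest first. Returns None when there is not
    enough history or the earlier window had no measurable ROAS.
    """
    if len(daily) < 2 * window:
        return None
    previous = window_roas(daily[-2 * window:-window])
    current = window_roas(daily[-window:])
    if previous <= 0:
        return None
    return (previous - current) / previous


def should_alert(daily: list[tuple[float, float]], threshold: float = 0.20) -> bool:
    decline = roas_decline(daily)
    return decline is not None and decline >= threshold
```

If a creative returned 4.0 ROAS in the earlier week and 3.0 in the latest one, the decline is 25%, which crosses the 20% threshold and triggers the alert.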

Competitor creative intelligence

Meta's Ad Library gives advertisers some visibility into what competitors are running. Segwise's competitor tracking goes further, applying the same multimodal AI tagging to competitor creatives so you can analyze patterns across their library systematically. Which hooks do they repeat most? Which formats are they scaling? Which creative angles are saturated in your category versus underexplored?

This turns competitive intelligence from a manual browsing exercise into a data-driven analysis, and it integrates directly with your own creative planning workflow.

AI-powered creative generation from winning data

Once Segwise's tag-to-metric mapping identifies your best-performing creative elements, you can generate new creative variations built around those winning elements directly within the platform. The system produces 15+ data-backed iterations grounded in what's actually working, not in assumptions or creative director preference. This compresses the feedback loop between performance data and new creative production from weeks to days.

How to use creative analytics to brief better Meta ads

The metric layer tells you what's happening. The element layer tells you why. The brief connects both to what gets made next.

Teams running Meta at scale and getting compounding value from their creative testing share a few common practices:

Test one variable at a time when possible. Dynamic creative can tell you which combination won. Isolated variable testing tells you which specific element was responsible. Both have value but serve different purposes.

Use thumbstop and hold rate as diagnostic tools, not just reporting metrics. If you know where your creative is losing the audience (the hook, the middle, or the CTA), you know exactly what to fix in the next iteration.

Connect Meta attribution to downstream revenue data. CPA numbers that live only in Meta's reporting are directionally useful but attribution-inflated. Decisions about which creative to scale should incorporate MMP or CRM data on actual business outcomes.

Tag creatives consistently before launch. File naming conventions that encode creative variables (hook type, format, talent present or absent, offer type) create the data layer for analysis over time. This compounds: a year of disciplined tagging produces creative intelligence that can't be replicated quickly.

Set specific performance hypotheses before each test. "Benefit-led hooks will outperform curiosity hooks for this audience" is testable. "Let's see what sticks" is not. The best creative testing programs are structured more like scientific experiments than creative auditions.
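One lightweight way to act on the naming-convention practice above is to make file names machine-readable. The sketch below assumes a hypothetical convention of underscore-separated `key-value` pairs, such as `hook-benefit_fmt-ugc_talent-yes_offer-20off.mp4`; the keys and separators are illustrative, and any consistent scheme would work.

```python
from pathlib import PurePath


def parse_creative_name(filename: str) -> dict[str, str]:
    """Extract key-value tags from a file name like 'hook-benefit_fmt-ugc.mp4'."""
    stem = PurePath(filename).stem  # drop the extension
    tags: dict[str, str] = {}
    for part in stem.split("_"):
        key, sep, value = part.partition("-")
        if sep and key and value:  # ignore segments that are not key-value pairs
            tags[key] = value
    return tags


tags = parse_creative_name("hook-benefit_fmt-ugc_talent-yes_offer-20off.mp4")
```

Once every creative parses into the same set of keys, each performance export can be grouped by hook type, format, or offer without manual relabeling.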

Conclusion

Most Meta advertisers are leaving performance on the table, not because they're spending wrong, but because they're learning wrong. ROAS tells you what's worth scaling. Creative analytics tells you what to make more of. Teams that operate with both have a structural advantage over teams that only operate with one.

Segwise gives performance marketing teams the creative intelligence layer Meta's native tools don't provide: multimodal AI tagging across every Meta creative, cross-network benchmarking against TikTok, Google, and 12+ other networks, competitor creative analysis, and fatigue detection before performance drops. Teams using Segwise save up to 20 hours per week on creative analysis and achieve up to 50% ROAS improvement by making creative decisions grounded in element-level data, not campaign-level averages.

Frequently asked questions

What is thumbstop rate on Meta and how is it different from CTR?

Thumbstop rate measures the percentage of people who stopped scrolling on your ad and watched it for at least three seconds. CTR measures the percentage of people who clicked. Thumbstop rate is a leading indicator of creative quality because it captures audience response before any intent signal. CTR is a downstream measure of full creative appeal. You can have a strong thumbstop rate and weak CTR (the ad captures attention but the message or CTA isn't converting interest to action), which points to a different creative fix than a weak thumbstop rate across the board.

Why does Meta's algorithm favor creatives with high thumbstop and hold rates?

Meta's algorithm uses creative engagement quality signals (thumbstop rate, hold rate, CTR) to determine which ads earn more auction impressions. Higher-quality engagement signals mean Meta shows the ad to more people at lower CPMs. This is why a creative with genuinely strong engagement can outperform a higher-budget campaign running mediocre creative: the algorithm rewards quality with cheaper reach.

How do I analyze dynamic creative performance on Meta?

Meta's dynamic creative reporting shows you which combination of elements performed best, but not which individual element drove the result. To get element-level attribution, you need either single-variable testing (testing one element at a time with everything else held constant) or a creative analytics platform that can tag and attribute performance to specific components. Segwise's AI tagging analyzes every creative element across all your Meta ads automatically and maps each tag to performance metrics.

How do I detect creative fatigue before it damages performance?

Frequency is the primary leading indicator. When average impressions per unique user climb above your category's threshold, CTR starts to drop and CPA rises shortly after. The goal is to rotate creative before frequency causes the performance cliff, not after. Segwise monitors all running Meta creatives for decline patterns and sends configurable alerts when fatigue thresholds are hit, based on your own criteria: ROAS decline percentage, spend share drop, or other custom signals.

What does a proper Meta creative analytics workflow look like for a growth team?

The core components are an accurate data pipeline connecting Meta to your analytics environment and MMP data, element-level creative tagging that maps creative variables to performance metrics, fatigue monitoring with proactive alerts, competitor tracking against your key competitors' creative libraries, and a brief process that feeds performance insights directly back into new creative production. Segwise covers the creative intelligence layer (AI tagging, fatigue detection, competitor tracking, and AI-powered creative generation), working on top of your existing MMP and attribution infrastructure.

What's the difference between Meta creative analytics and standard campaign reporting?

Standard campaign reporting tells you how each campaign performed: spend, impressions, clicks, conversions, ROAS. Creative analytics tells you why — which elements inside the creative produced those results. Standard reporting helps you allocate budget. Creative analytics helps you brief better ads, identify patterns that travel across campaigns and audiences, and build compound creative advantage over time.

Do I need a data science team to use creative analytics on Meta?

No. Platforms like Segwise are designed for performance marketing teams (UA managers, creative strategists, and growth leads), not data scientists. The AI tagging, tag-to-metric mapping, and report generation are automated. The output is accessible analysis that feeds directly into creative decisions without requiring SQL or custom data infrastructure. No-code integration setup takes under 15 minutes.
