AI in Performance Creative: What's Working and What's Still Hype in 2026

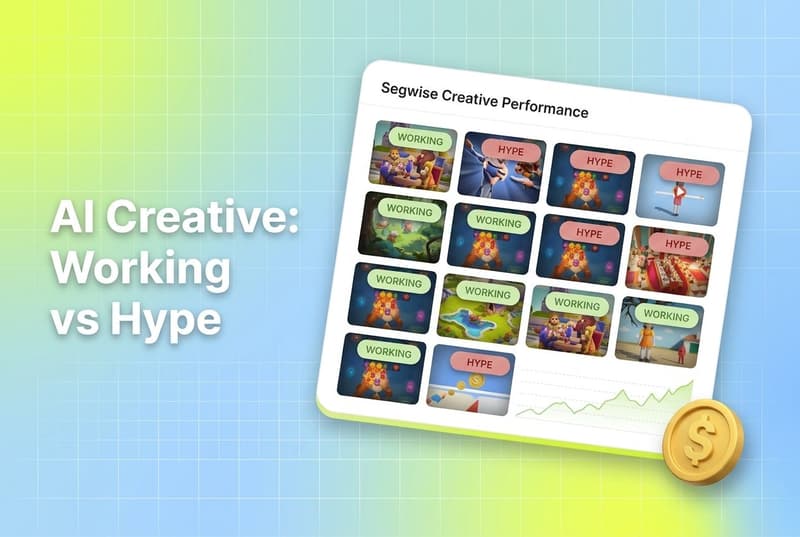

AI in performance creative has split into two clear lanes: a working lane (image-to-video, voiceover, localization, ideation) where teams are shipping winners and shaving real budget, and a hype lane (full cinematic, brand-safe-at-scale, persistent character consistency) that still produces demo reels more than ad creatives. For UA managers, creative strategists, and growth leaders, the practical move in 2026 is to industrialise the working lane and stop waiting for the hype lane to mature. Segwise sits in the working lane, plugging into ad networks to surface winning patterns and generate Hit Creatives directly from performance data.

If you've spent any of the last 18 months evaluating AI creative tools, you already know the pitch is louder than the proof. Every demo looks great. Every tool claims to ship winning ads. Then you actually try it on a real Meta or TikTok account and half the output gets rejected by your reviewer, the other half can't be reproduced consistently, and the results land somewhere between "useful" and "uncanny."

That gap between demo and ad account is the whole story of AI in performance creative right now. The tools that are genuinely helpful are doing narrow, well-scoped jobs. The tools that try to do everything from concept to final brand-safe cinematic ad still ship results that need a human to clean up before they can run at scale.

This post is a 2026 reality check for performance marketers. It covers what's actually working in production today, what's still hype, the tool stack most teams are converging on, the budget impact when AI creative is implemented well, and the difference between teams that get gains from AI creative and the ones that just spend more on tools. We'll lean on data from the AppsFlyer Creative Optimization Report 2025, Meta's reported lift from GenAI ads, Runway's Gen-4.5 image-to-video tutorial, Gartner's CMO survey, Mobile Dev Memo, and published case studies.


Key takeaways

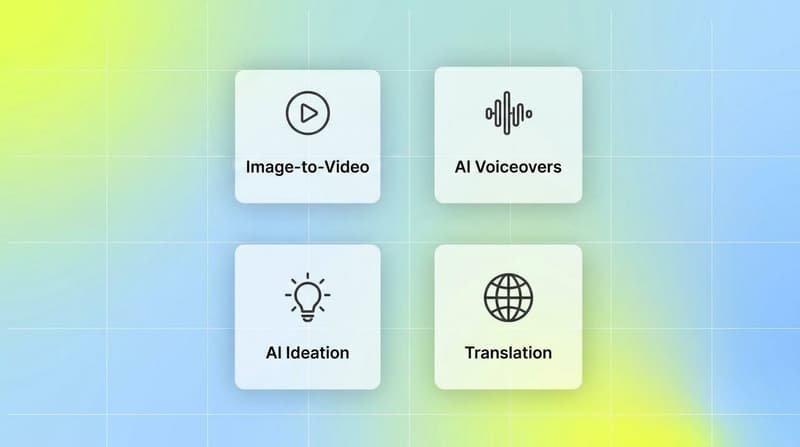

AI image-to-video, AI voiceovers, AI localisation, and AI ideation are the four workflows shipping consistent gains. Teams using them well report production-time cuts of 50%+ and CPI/CPA drops in the 30–70% range, per Admiral Media and AppsFlyer.

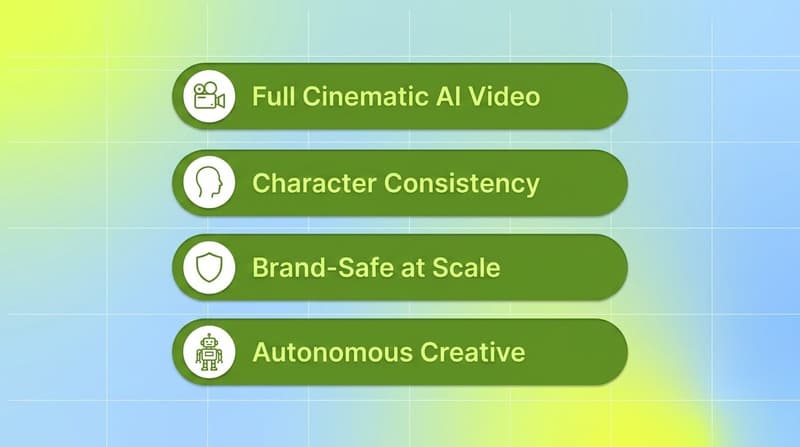

Full cinematic AI video, persistent character consistency across clips, and brand-safe AI creative at scale are still hype. Character drift, hallucinated objects, and uncanny output continue to force human cleanup or full reshoots, per AI video analysis sources.

Meta-reported data: ad campaigns using AI-generated images saw an 11% higher CTR and 7.6% higher conversion rate, and over 4 million advertisers now use Meta's GenAI ad tools, according to the AppsFlyer Creative Optimization Report 2025.

65% of CMOs say AI will dramatically change their role in the next two years, but only 32% say significant skill changes are needed, signaling a literacy gap, per Gartner.

Most teams stitch together point tools (Midjourney, Runway, ElevenLabs, HeyGen, CapCut). The teams seeing real gains add a creative intelligence layer on top, so production volume meets actual performance signal rather than vibes.

Budget impact is real but uneven: AppsFlyer found non-gaming apps spending $7M+ per quarter now average 2,365 creatives per quarter, growing 80% faster than gaming. Volume without an analytics layer just adds noise.

Where AI is actually winning in performance creative

The working lane has narrowed to a handful of workflows where AI is fast enough, cheap enough, and good enough to be defaulted to in production.

Image-to-video for ad variants

This is the single most reliable AI creative workflow in 2026. You start with a static product shot, character render, or hero frame, and AI converts it into a 4–8 second motion clip ready for Reels, TikTok, or Shorts.

Runway's Gen-4.5 image-to-video model is the best example of how this matured. It uses a single reference image plus a prompt to generate short clips with consistent style, characters, and locations. The model is short-form first, optimised for Instagram and TikTok-length output rather than feature film clips, which is exactly the format performance ads need.

Image-to-video works well because the input image already locks in brand-safe details, product appearance, and composition. The AI only needs to handle motion, which it now does reliably over a 4–8 second window. Beyond that window, drift and inconsistency creep back in.

Performance impact: in Meta beta testing, advertisers using AI video generation for most of their campaign ads saw average gains of 10% in CTR and 8% in CVR. Image generation saw a 7% conversion lift, per the AppsFlyer Creative Optimization Report 2025 summarising Meta's internal data.

AI voiceovers and dubbing

ElevenLabs and HeyGen have made AI voice the default for ad voiceovers in any vertical that needs scale or localisation. AI dubbing now hits 95–98% accuracy with up to 15x cost savings versus traditional dubbing, per industry reporting from HeyGen.

The reason voice works where full video doesn't: there's no character consistency problem. A voice clone doesn't drift across a 30-second ad the way a face does across a 30-second clip. Pacing, emotion, and accent stay locked.

Real-world ad results: HeyGen reports Trivago localised TV ads across 30 markets, cutting post-production time in half and saving 3–4 months per campaign. Rosetta Stone used HeyGen to translate Spanish, French, German, and Italian ads while preserving original look and feel. These aren't lab demos; they're shipped media plans.

AI ideation and concept generation

The least sexy workflow, and the one with the highest hidden ROI. Using ChatGPT, Claude, or a structured ideation engine to generate hook variants, value-prop angles, headline tests, and storyboard outlines is now standard for most performance creative teams.

The point isn't that the AI writes the final copy. The point is the testing surface area. When you can generate 50 hook variants in five minutes instead of writing 5 in an hour, you find winners that wouldn't otherwise exist. Admiral Media's published case studies note that approximately 6–7% of ad variants become genuine scale performers, so the brand finding the most winners is the brand testing the most variants.

This is also the workflow that compounds with creative analytics: AI generates concepts, the data tells you which concepts share the same winning DNA, and the next batch is built on those signals.
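To make the testing-surface argument concrete, here is a minimal sketch of the arithmetic behind it. It takes the midpoint of the 6–7% hit rate cited above and assumes, purely for illustration, that each variant is an independent draw with the same chance of becoming a scale performer. Real variants are correlated, so treat the output as a rough guide rather than a forecast. The function names are hypothetical.

```python
# Illustrative only: assumes each variant independently has the same
# chance of becoming a scale performer (a simplification).

HIT_RATE = 0.065  # midpoint of the 6-7% range cited from Admiral Media


def expected_winners(n_variants: int, hit_rate: float) -> float:
    """Average number of scale performers in a batch of n_variants."""
    return n_variants * hit_rate


def prob_at_least_one_winner(n_variants: int, hit_rate: float) -> float:
    """Chance that a batch contains one or more scale performers."""
    return 1.0 - (1.0 - hit_rate) ** n_variants


for n in (5, 50):
    print(
        f"{n:>2} variants: "
        f"expected winners = {expected_winners(n, HIT_RATE):.2f}, "
        f"P(at least one) = {prob_at_least_one_winner(n, HIT_RATE):.0%}"
    )
```

Under these assumptions, a batch of 5 will usually contain no winner at all, while a batch of 50 will almost always contain at least one.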

AI translation and localisation

AI translation has moved from "good for first draft" to "good for production" in the last year. AI dubbing and translation now match human dubbing on accuracy benchmarks for most non-emotive ad scripts, per HeyGen's 2025 reporting, while running 10x faster and costing 15x less.

For UA teams running 20+ markets, this is the difference between localising a hero asset and not bothering. Cost-per-market for video localisation has dropped enough that teams can localise variant tests, not just hero assets.

Where AI is still hype

Every workflow above shares a common feature: it's narrow. As soon as you ask AI to do everything from concept to brand-safe cinematic at scale, it falls apart.

Full cinematic AI video

A 30-second cinematic spot with multiple characters, locations, transitions, branded products, and an emotional arc is still beyond reliable AI production. The output looks impressive in isolation, then breaks the moment you try to use it as a real ad. McDonald's Netherlands released an AI-generated holiday ad that audiences described as unsettling and inauthentic, per industry coverage. The ad was pulled within three days.

The problem isn't aesthetic; it's structural. Models trained on flat 2D video clips lack a real understanding of 3D space, object permanence, and physics. They can generate something that looks cinematic, but they can't reliably distinguish between "a camera panning past a motorcycle" and "a motorcycle hovering while the background moves," per analysis on AI video drift. This produces the spatial inconsistencies that make ads feel uncanny.

Character consistency across clips

If you need the same hero, mascot, or spokesperson across 10 connected clips, AI still fails more than it succeeds. AI video lacks a persistent mental model of characters, so the "same guy" in frame 1 has drifted into someone slightly different by frame 100. Minor errors compound.

Runway Gen-4.5 made progress here with reference-image conditioning and tools like Act-Two for performance capture, but it works best for short single-shot clips. Multi-shot consistency, especially across separate generation runs, still requires manual intervention or a dedicated workflow with tight reference control.

Brand-safe AI creative at scale

Generating 1,000 ad variants is now trivial. Generating 1,000 ad variants that are brand-safe, on-message, free of hallucinated logos, accurate to product details, and compliant with platform policy is a different problem. Hallucinations show up as identity drift, prompt-related artifacting (two chairs instead of one, an extra finger), and unprompted background objects. In ad creative, those errors trigger reshoots, regenerations, or human fixes that erode the cost savings.

Sora's content filters were simultaneously too aggressive (blocking legitimate creative use) and too porous (workarounds appeared immediately), which is the same pattern most generation models hit when scaled. The result: brand-safety review becomes the new bottleneck.

Fully autonomous "creative as a service"

The pitch that an AI agent will read your performance data, generate fresh creatives, push them live, and self-optimise without human intervention is real in fragments and demoware everywhere else. McKinsey estimates agentic AI could support up to two-thirds of current marketing activities, per their 2026 agentic workflows research, but they're describing potential, not deployed reality. In practice, the human layer (strategy, judgment, brand alignment) is still what makes AI creative scalable. Admiral Media states this directly in their case studies: AI handles production volume and speed, humans handle judgment, strategy, and brand alignment, and that combination, not AI alone, is what drives results.

The 2026 AI creative tool stack snapshot

There's no single "AI creative platform" most teams use. Most performance teams converge on a stack that looks like this:

Ideation: ChatGPT, Claude, or domain-specific ideation engines for hook variants, headlines, and angle generation.

Image generation: Midjourney v6, Adobe Firefly, or Meta's GenAI image tools for static creative production and product photography variants.

Image-to-video: Runway Gen-4.5, Kling, Veo, or Pika for animating static frames into 4–8 second motion clips.

AI voice and dubbing: ElevenLabs for natural voice generation, HeyGen for video translation with lip sync (175+ languages, per HeyGen).

Avatar / spokesperson video: HeyGen for branded talking-head content where a real spokesperson isn't available.

Editing and assembly: CapCut for fast Reels and TikTok edits, Adobe Premiere for higher-end work.

Performance analytics and creative intelligence: This layer ties production output back to ad-network performance data so the team knows which AI-generated patterns are actually winning.

The first six layers are the production stack. Without the seventh layer, teams end up generating high volumes of variants without a reliable signal for which ones to scale or kill. AppsFlyer's data shows non-gaming advertisers spending $7M+ per quarter now average 2,365 creatives per quarter, but their core conclusion is direct: "Volume without intent just adds noise. Use granular data to guide production, spot gaps, and iterate with purpose."

This is exactly where Segwise sits. Most AI creative tools are point solutions: one for ideation, one for video, one for analytics. Segwise is a fully agentic AI creative intelligence and generation platform. Plug in your ad networks (Meta, Google, TikTok, Snapchat, YouTube, AppLovin (Axon), Unity Ads, Mintegral, IronSource) and MMPs (AppsFlyer, Adjust, Branch, Singular), and Segwise's Creative Tagging Agent tags every visual, audio, and text element across creatives. The Creative Strategy Agent then surfaces winning patterns at the element level (which hooks, characters, CTAs, emotions, and visual styles drive performance), and the Creative Generation Agent produces new creatives built on those winning patterns, exportable in every aspect ratio your ad networks need.

Budget impact: the gains are real, the gains are uneven

AI in performance creative does change unit economics. The numbers are real. The question is whether your team is set up to capture them.

Documented gains from Admiral Media's published case studies include +45% ROAS and +55% CTR on a mobile cooking game, -32% CPA with +77% ad spend on a dynamic creative program, -66% CPA and +162% subscriptions on a dating app, and -74% CPI on a global app launch. These are not minor optimisations; they're transformational shifts in unit economics. A 74% CPI reduction means each install costs 26% of what it did, so the same budget buys roughly 3.8x the installs (1 / 0.26 ≈ 3.85), or the same install volume costs 26% of the previous spend.
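The CPI arithmetic generalises to any reduction figure. The short sketch below reproduces the 74% example and lets you plug in your own number. The function names are hypothetical, and the calculation assumes CPI stays flat as spend scales, which rarely holds exactly in practice.

```python
# Illustrative only: assumes CPI does not rise as volume scales.

def install_multiplier(cpi_reduction: float) -> float:
    """How many times more installs the same budget buys after a CPI cut."""
    if not 0.0 <= cpi_reduction < 1.0:
        raise ValueError("cpi_reduction must be in [0, 1)")
    return 1.0 / (1.0 - cpi_reduction)


def spend_share_for_same_installs(cpi_reduction: float) -> float:
    """Fraction of the old budget needed to hold install volume constant."""
    if not 0.0 <= cpi_reduction < 1.0:
        raise ValueError("cpi_reduction must be in [0, 1)")
    return 1.0 - cpi_reduction


reduction = 0.74  # the -74% CPI case study figure
print(f"Install multiplier at same budget: {install_multiplier(reduction):.2f}x")
print(f"Spend needed for same installs: {spend_share_for_same_installs(reduction):.0%}")
```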

Budget allocation is shifting accordingly. 23% of companies planned to dedicate 16–20% of their marketing budget to AI tools in 2025, up from 11% in 2024, per industry reporting on AI marketing budgets. Smartly customers see an average 10% CPA improvement, per their published results, and Meta's Advantage+ Shopping Campaigns with 20+ creatives delivered a 29% reduction in median incremental cost per purchase.

But there's a counter-statistic that matters: 68% of agencies without a clear AI strategy wasted significant portions of their budgets in the first year of AI tooling, per industry reporting. The differentiator isn't whether you use AI tools; it's whether you have the analytics and creative intelligence layer to direct what the AI tools produce.

The pattern holds across every published case study: the gains come from volume + measurement + iteration, not volume alone. Teams that just buy seats to generation tools and let designers experiment without a creative intelligence layer end up paying for noise.

Teams using AI creative well vs badly

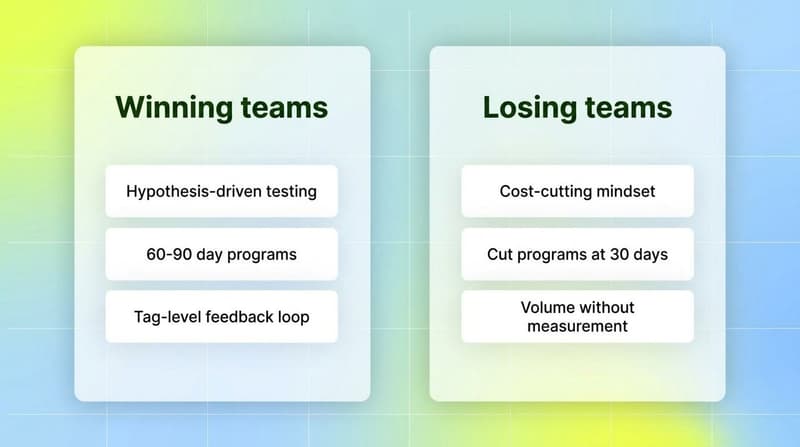

Across the published case studies and industry reporting, the difference between teams that capture AI creative gains and teams that waste budget comes down to four operational patterns.

Teams using it well:

They run AI tools against a clear performance hypothesis. Each batch of variants is built to test a specific hook, audience, or angle, not to fill a content calendar.

They give the program enough time to accumulate signal. Most strong results come at 60–90 days of sustained production, per Admiral Media's case studies.

They maintain a tight feedback loop between ad-network performance data and the next production cycle. They know which variants worked, which didn't, and what's getting iterated next, all backed by tag-level data rather than gut feel.

They have a human creative strategist (or an AI Creative Strategist agent) interpreting the data and writing the next round of briefs, so AI volume meets strategic direction.
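As a rough illustration of what "tag-level data" can mean in practice, the sketch below rolls creative-level metrics up to the element level. Everything in it is hypothetical: the `Creative` structure, the tag names, and the numbers are invented for the example, and it is not a description of how Segwise or any ad network computes its reports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Creative:
    name: str
    tags: list[str]  # hypothetical element tags, e.g. "hook:question"
    impressions: int
    clicks: int
    installs: int
    spend: float


def performance_by_tag(creatives: list[Creative]) -> dict[str, dict[str, float]]:
    """Sum metrics over every creative carrying a tag, then derive CTR and CPI."""
    totals = defaultdict(
        lambda: {"impressions": 0, "clicks": 0, "installs": 0, "spend": 0.0}
    )
    for c in creatives:
        for tag in c.tags:
            t = totals[tag]
            t["impressions"] += c.impressions
            t["clicks"] += c.clicks
            t["installs"] += c.installs
            t["spend"] += c.spend

    result = {}
    for tag, t in totals.items():
        result[tag] = {
            "ctr": t["clicks"] / t["impressions"] if t["impressions"] else 0.0,
            "cpi": t["spend"] / t["installs"] if t["installs"] else float("inf"),
        }
    return result


# Made-up sample data for illustration only.
creatives = [
    Creative("A", ["hook:question", "cta:install_now"], 10_000, 300, 60, 120.0),
    Creative("B", ["hook:question", "cta:play_free"], 20_000, 500, 100, 250.0),
    Creative("C", ["hook:gameplay", "cta:install_now"], 10_000, 100, 20, 100.0),
]

report = performance_by_tag(creatives)
for tag, m in sorted(report.items(), key=lambda kv: kv[1]["cpi"]):
    print(f"{tag:<18} CTR={m['ctr']:.2%}  CPI=${m['cpi']:.2f}")
```

Because tags co-occur on the same creative, a rollup like this is correlational. It suggests which elements to test next and does not prove that a given hook or CTA caused the result.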

Teams using it badly:

They treat AI as a cost-cutting measure rather than a performance strategy. They optimise for cheaper creative instead of better creative, then wonder why their CTR didn't move.

They cut programs at 30 days because early variants didn't immediately outperform their hero asset, before the testing surface has accumulated enough data to surface real winners.

They generate volume without an analytics layer, then can't tell their winning variants from their losing ones.

They over-constrain the creative brief to the point where meaningful testing is impossible, then conclude that AI creative "doesn't work for our brand" when the real issue is brief design.

What the data says about AI creative in 2026

The directional signal across every authoritative source is the same: AI creative is now table stakes in production, and the differentiator has moved from "do you use AI tools" to "how well do you measure and iterate on AI output."

Gartner's 2024 CMO survey found 65% of CMOs say advances in AI will dramatically change their role in the next two years. The follow-up 2026 survey found that while 65% still expect role disruption, only 32% say significant skill changes are needed, an "AI blind spot" Gartner expects will lead to CMO replacements by 2027. Only 5% of marketing leaders not piloting AI agents report significant gains on business outcomes.

The takeaway for performance marketers: the working lane of AI creative (image-to-video, voice, ideation, localisation) is reliable enough to make a default workflow today. The hype lane (full cinematic, autonomous brand-safe production at scale, persistent character consistency) is real R&D, not deployable production. Plan your stack around that boundary, build the measurement layer that turns AI volume into iteration signal, and you'll capture the gains that the case studies show are available.

Frequently asked questions

What's actually working in AI performance creative right now?

Image-to-video for short-form ads, AI voiceovers and dubbing, AI ideation for hook and angle variants, and AI localisation are the four workflows shipping consistent performance gains in 2026. Tools like Runway Gen-4.5, ElevenLabs, HeyGen, and Midjourney v6 are reliable for production. Platforms like Segwise add the creative intelligence layer that ties AI-generated output back to ad-network performance data, so teams know which variants to scale.

Where does AI in advertising still fail?

Full cinematic AI video, persistent character consistency across multiple clips, brand-safe AI creative at scale, and fully autonomous "AI creative as a service" still produce results that need human cleanup before they can run at scale. Character drift, hallucinated objects, identity inconsistency, and spatial issues continue to derail multi-shot AI video, and brand-safety review is the new bottleneck for high-volume generation.

How much can AI creative actually reduce my CPA or CPI?

Published case studies from Admiral Media show CPA reductions in the 32–66% range and CPI reductions up to 74%, depending on vertical and program duration. Meta-reported data shows 7–11% CTR and CVR lift from GenAI ad images and videos, and 9% lower CPA in AI-powered formats. The gains require 60–90 days of sustained production and a measurement loop, not a one-off campaign.

What's the right AI creative tool stack in 2026?

Most performance teams converge on ChatGPT or Claude for ideation, Midjourney or Adobe Firefly for image generation, Runway Gen-4.5 for image-to-video, ElevenLabs for AI voice, HeyGen for dubbing and avatars, CapCut for fast assembly, and a creative analytics layer like Segwise to tie it all back to ad-network performance. The first six are production tools. The seventh is what turns volume into iteration signal.

How do I avoid the AI creative budget waste trap?

Don't treat AI as a cost-cutting tool, treat it as a performance strategy. Run each batch of variants against a clear hypothesis, give programs at least 60–90 days to accumulate signal, maintain a tight feedback loop between ad-network performance and the next production cycle, and use a creative intelligence layer (Segwise, or equivalent in-house) so you can tell winning variants from losing ones at the element level rather than the campaign level.

Are AI-generated ads as good as human-made ads for performance?

In performance contexts, AI-generated ads consistently match or outperform traditionally produced ads on core metrics like CTR, CPA, and CPI. The advantage is not the AI itself, it's the testing surface area AI production enables. Testing 50 variants instead of 5 finds winners that would otherwise stay undiscovered, per Admiral Media. Quality matters, but volume + measurement matters more.

Will agentic AI replace my creative team?

Not in 2026. McKinsey estimates agentic AI could support up to two-thirds of current marketing activities, but the deployed reality is that humans still own strategy, judgment, and brand alignment. The teams capturing real gains use AI to handle production volume and speed while humans handle strategic direction. Tools like Segwise's Creative Strategy Agent give the human strategist faster, deeper signal to work from, but the strategic call still sits with a human.

How does Segwise compare to standalone AI creative tools?

Most AI creative tools are point solutions: one for ideation, one for video, one for analytics. Segwise is a fully agentic AI creative intelligence and generation platform that unifies creative data across 15+ ad networks (including Meta, Google, TikTok, Snapchat, YouTube, AppLovin (Axon), Unity Ads, Mintegral, and IronSource) and MMPs (AppsFlyer, Adjust, Branch, Singular). It tags every creative element with multimodal AI, surfaces winning patterns at the element level via the Creative Strategy Agent, and generates new Hit Creatives from those patterns, exportable in every aspect ratio. Teams report up to 20 hours saved per week and 50% ROAS improvement.
