Pre-Flight Testing Frameworks for Meta and TikTok Ads

Pre-flight testing is the practice of evaluating ad creative before spend, using structured in-platform experiments, AI-powered prediction, or brand-compliance checks to decide what actually goes live. For user acquisition and creative teams, that means fewer expensive "launch and pray" drops, faster learning cycles, and a clearer signal on which concepts deserve real budget. Segwise closes the loop after the pre-flight phase, so the winners you picked pre-launch get tagged, tracked, and iterated on at the element level once they go live.

Most creative decisions on Meta and TikTok still get made on gut feel, then validated with spend. That works until your CPMs rise, your benchmarks tighten, and a bad hero asset eats a week of budget. Pre-flight testing flips the order. You pressure-test the creative first, whether that's against a live audience in a controlled setup, an AI attention model, or a brand-compliance rubric, then put real dollars behind what survived.

The confusion in 2026 is that "pre-flight" means three different things depending on who you ask. Performance agencies use it to describe structured in-market testing before the scaling phase. Platforms like Smartly use it to describe AI-powered prediction before you even press publish. Enterprise brand teams use tools like CreativeX to check brand hygiene and regulatory compliance before spend. All three are valid. All three solve different problems. This guide walks through how each one works on Meta and TikTok, and when to use which.

If you run UA for a mobile game, a DTC brand, or a subscription app, the practical implication is simple: you need a pre-flight step in your workflow, and it cannot just be "the creative director liked it."

Key Takeaways

Pre-flight testing covers three distinct practices in 2026: structured in-market creative testing before scaling, AI-powered pre-launch prediction (attention, emotion, composition), and brand-compliance checks before publish. Each solves a different problem.

For Meta, the standard framework is a three-phase flow drawn from Ben & Vic Agency's published creative testing framework: test new creatives against each other in isolation (ASC+, CBO, ABO, or cost cap), then test the winner against your current best ad, then scale.

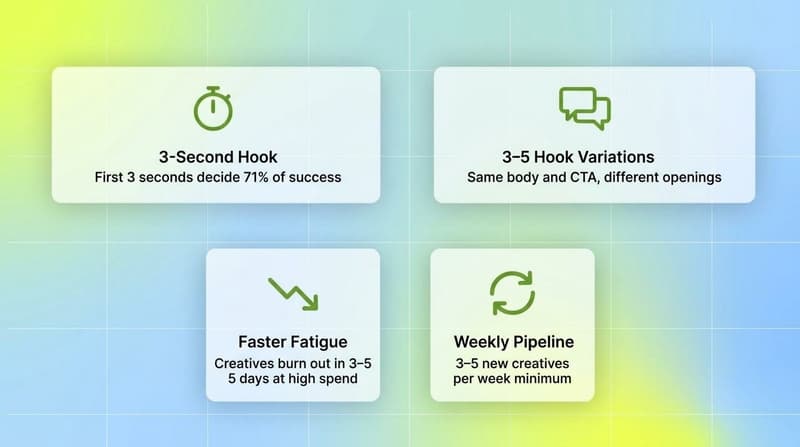

For TikTok, pre-flight is about hook volume (3–5 hook variations per concept), the 3-second pattern interrupt rule, and faster kill criteria. Top-performing TikTok videos hook viewers in the first three seconds, and fatigue can set in within 3–5 days at high spend.

AI pre-flight tools like Smartly's Creative Predictive Potential and Brainsight predict attention, emotional response, and composition quality before a dollar is spent. They do not predict sales outcomes, only creative potential.

The 2x CPA rule still holds for Meta pre-flight kill decisions: if a test ad spends 2x your target CPA without hitting goal, it's dead, according to AdStellar's 2026 budget allocation guidance.

Pre-flight testing is only the first half of the loop. The post-flight phase, tagging winners at the element level and feeding insights back into the next brief, is where compounding happens.

What Pre-Flight Testing Actually Means

"Pre-flight" originally came from aviation: the checklist a pilot runs before takeoff. In advertising, the term has been adopted across three adjacent categories that sometimes use the same words to mean different things.

Pre-flight as in-market structured testing. This is the performance-marketing definition. You run new creatives in controlled ad sets, against a live audience, with rules for how much you're willing to spend before calling a winner or a loser. The goal is to separate new-creative signal from the historical-data advantage that already-running ads have. Ben & Vic Agency formalized this into a three-phase structure (new vs. new, then new vs. BAU, then scale) that most Meta advertisers now run some version of.

Pre-flight as AI prediction before spend. This is Smartly's definition. Upload the creative, get back a predicted score on attention, emotion, and composition. You're not running the ad at all yet. Smartly's Creative Predictive Potential is explicit that it predicts creative potential, not sales outcomes, because performance depends on targeting, placement, timing, and budget. Similar tools in this category include Neurons and Brainsight, which claims up to 94% accuracy on instant attention vs. traditional eye-tracking.

Pre-flight as brand-compliance evaluation. CreativeX built their tool around this. Upload an image, video, or GIF and get a scorecard against platform best practices, brand rules, and regulatory requirements within 24 hours. If the creative hits 80% or higher on the Creative Hygiene Score, it's cleared to launch. If not, you fix and resubmit. Unilever reportedly scaled this across six continents in six weeks with 2,000+ employees using it.

All three live under the same "pre-flight" umbrella because they all happen before real spend. The practical question is which one you need, or whether you need a mix.

A Pre-Flight Framework for Meta Ads

The dominant in-market pre-flight framework for Meta in 2026 is the three-phase model: test new creatives against each other first, then test your top new creative against your current best ad, then scale.

The first mistake most advertisers make is running a new creative straight against an old one. The old ad has pixel optimization, historical data, and audience memory working for it. The new ad has none of that. You're not measuring creative quality, you're measuring seasoning.

Phase 1: New vs. New

Run your candidate creatives against each other in isolation. The Ben & Vic three-phase framework offers five common setups:

ASC+ campaign with all creatives inside. Best for smaller accounts that want budget efficiency over precision. Accuracy is lower because Advantage+ redistributes spend heavily toward its own picks.

CBO with one creative per ad set. Lets Meta's algorithm decide where spend goes. Efficient, but CBO can over-allocate to a single ad set fast, so use cost-cap rules to kill ad sets that run 2–3x your target CPA without conversions.

ABO with one concept per ad set (plus variants). Medium accuracy, medium cost. Forces equal budget allocation, so you get cleaner reads faster than CBO.

CBO with concept-level ad sets. Variants of the same concept live together, so you compare concept-level signal first, then variant-level.

Cost cap with one ad set per concept. The advanced option. Highest accuracy because Meta is forced to hit your cost ceiling or stop spending. Realistic for accounts spending over roughly $500K/month.

Phase 2: New vs. BAU

Once Phase 1 has a winner, test it against your current best-performing ad. This is the real bar: can your new creative match or beat an ad with historical data and pixel learning?

Common setups:

CBO with two ad sets, one creative each (old vs. new). Efficient for limited budgets.

ABO with two ad sets. Balanced, good for medium budgets.

One ad set with both creatives and cost cap. Highest precision, used by larger accounts.

The budget rule most teams settle on is the 2x CPA kill: if an ad spends 2x your target CPA without hitting goal, it's dead. AdStellar's 2026 Meta budget allocation guidance adds a tighter version: if an ad spends 1.5x target CPA with zero conversions, kill immediately. A common starting budget floor is 50x your target CPA weekly, so Meta has enough data to optimize against.
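The kill thresholds and budget floor above are simple enough to write down as code, which helps when you want the same rule applied to every test ad. The sketch below is one possible reading, not an official rule from Meta or AdStellar: the `AdStats` structure and function names are hypothetical, and it assumes "hitting goal" means the ad's actual CPA is at or below target.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Spend and conversions for one test ad (hypothetical structure)."""
    spend: float
    conversions: int


def weekly_budget_floor(target_cpa: float, multiple: float = 50.0) -> float:
    """Starting weekly budget floor: 50x target CPA, as described above."""
    return multiple * target_cpa


def kill_decision(ad: AdStats, target_cpa: float) -> str:
    """Return 'kill' or 'keep' using the two thresholds from the text.

    Assumption: 'hitting goal' means actual CPA <= target CPA.
    """
    if ad.conversions == 0:
        # Tighter rule: 1.5x target CPA spent with zero conversions.
        return "kill" if ad.spend >= 1.5 * target_cpa else "keep"

    # General rule: 2x target CPA spent without hitting goal.
    actual_cpa = ad.spend / ad.conversions
    if ad.spend >= 2 * target_cpa and actual_cpa > target_cpa:
        return "kill"
    return "keep"


if __name__ == "__main__":
    target = 20.0
    print(weekly_budget_floor(target))
    print(kill_decision(AdStats(spend=35.0, conversions=0), target))
    print(kill_decision(AdStats(spend=50.0, conversions=3), target))
```

With a $20 target CPA, the floor works out to $1,000 per week, an ad that has spent $35 with no conversions is cut under the 1.5x rule, and an ad that has spent $50 for three conversions stays live because its actual CPA is under target.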

Phase 3: Scale

When your new creative matches or beats BAU, it graduates. Practical rules from the in-market framework:

Begin scaling immediately once performance is validated.

Add the new winner into fatigued ad sets to refresh them rather than pausing old ones.

Be patient with CBO and ASC+ when adding new creatives, because the algorithm re-learns.

Keep old creatives running alongside new ones, since pausing a pixel-optimized ad can cost more than it saves.

A Pre-Flight Framework for TikTok Ads

TikTok pre-flight runs on different math. Creative life is shorter, hook volume matters more, and the 3-second window is non-negotiable.

Hook volume is the unit of work

On Meta, you often test one hook per concept and call it. On TikTok, that's a broken test. TikAdTools' 2026 TikTok ads playbook recommends 3–5 distinct hook variations per concept, with the same body and CTA, so you can isolate which opening actually stops the scroll.
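One way to keep a hook test clean is to generate the variant list programmatically, so the body and CTA cannot drift between versions. This is a minimal sketch; the `Variant` structure, the naming scheme, and the example copy are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One ad variant: a unique hook on a shared body and CTA."""
    name: str
    hook: str
    body: str
    cta: str


def build_hook_variants(concept: str, hooks: list[str], body: str, cta: str) -> list[Variant]:
    """Build one variant per hook, holding body and CTA constant.

    Enforces the 3-5 distinct hooks per concept recommended above.
    """
    if not 3 <= len(hooks) <= 5:
        raise ValueError("expected 3-5 hook variations per concept")
    if len(set(hooks)) != len(hooks):
        raise ValueError("hooks must be distinct")
    return [
        Variant(name=f"{concept}_hook{i}", hook=hook, body=body, cta=cta)
        for i, hook in enumerate(hooks, start=1)
    ]


if __name__ == "__main__":
    variants = build_hook_variants(
        concept="meal_kit",
        hooks=["Outcome first", "Conflict first", "Pattern interrupt"],
        body="Shared 15-second product demo",
        cta="Try it free",
    )
    for v in variants:
        print(v.name, "|", v.hook)
```

Because only the hook changes between variants, any performance gap in the test can be attributed to the opening rather than to the body or close.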

The 3-second rule

TikTok's algorithm weights watch time heavily. Research cited across the creative-strategy community suggests the first 3 seconds determine 71% of a TikTok ad's success, and 63% of top-performing TikTok videos hook viewers in that window. In practice, that means opening with the payoff, not building to it. Show the outcome, the conflict, or the pattern interrupt up front. Videos without a visual change or text pop in the first 0–3 seconds get penalized on CPM, which makes traffic more expensive before you've even started the real test.

Faster fatigue, faster kill criteria

Creative fatigue on TikTok runs roughly 4x faster than on Meta. According to CRO Benchmark's breakdown of Meta vs. TikTok fatigue, a creative that lasts 2 weeks on Meta can burn out in 3 days on TikTok at high spend. Typical TikTok refresh cadence sits in the 3–5 day range for high-spend accounts, with most ads losing meaningful performance within 7–10 days.

That compresses the pre-flight window. On Meta you might give a Phase 1 test a full week. On TikTok, a few days of clean data is often all you get before the concept starts to age. Plan for at least 3–5 new creatives per week on TikTok just to keep the pipeline fed.
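A rough way to sanity-check that weekly number is to divide the days in a week by how long a creative lasts, then multiply by how many creatives you want live at once. The model below is a simplifying assumption, not a TikTok formula: it treats every creative as having the same lifespan, and `live_slots` is a hypothetical parameter.

```python
import math


def new_creatives_per_week(live_slots: int, lifespan_days: float) -> int:
    """Estimate weekly creative demand under a constant-lifespan assumption.

    live_slots: how many creatives you want running at any time (hypothetical input).
    lifespan_days: how long a creative lasts before fatigue sets in.
    """
    if live_slots < 1 or lifespan_days <= 0:
        raise ValueError("live_slots must be >= 1 and lifespan_days must be > 0")
    return math.ceil(live_slots * 7 / lifespan_days)


if __name__ == "__main__":
    # Two live creatives at the 3-day and 5-day ends of the TikTok fatigue range.
    print(new_creatives_per_week(live_slots=2, lifespan_days=3))
    print(new_creatives_per_week(live_slots=2, lifespan_days=5))
    # The same two slots with a 2-week lifespan, as in the Meta example.
    print(new_creatives_per_week(live_slots=2, lifespan_days=14))
```

Under these assumptions, keeping two creatives live on a 3–5 day fatigue cycle takes 3 to 5 new creatives a week, in line with the planning number above, while the same two slots on a 2-week lifespan need only one.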

The TikTok pre-flight sequence

Write 10–20 hook candidates against a single offer and CTA. Hooks are cheap to produce, so over-produce here.

Shoot 3–5 hook variants per winning concept, same body, same close.

Run a new-vs-new round, ABO or CBO depending on budget, with a kill rule around 2x target CPA.

Promote winners into a new-vs-BAU round against your current top performer.

Scale the winner into concept-level expansion: take the same narrative angle and produce 5–10 executions with different creators, settings, or formats. This is what most TikTok operators mean by "scaling concepts, not ads."

AI-Powered Pre-Flight: Predicting Before You Spend

The newer category of pre-flight testing skips the in-market test entirely at the first stage and asks a model: how is this creative likely to perform?

Smartly's Creative Predictive Potential is a representative example. Upload your image or video ads (ideally 20 or more for richer benchmarks), and the system returns three signals: a sentiment scorecard across emotions like joy, surprise, and sadness; an attention heatmap showing where viewers focus; and AI recommendations on what to adjust. It explicitly does not predict revenue, because revenue depends on targeting, placement, audience behavior, timing, budget, and algorithm state. It predicts what the creative, in isolation, is likely to do to a viewer.

Other tools in this category operate similarly. Brainsight benchmarks ads against a dataset of over 10,000 tested creatives across formats and industries, and claims up to 94% accuracy on predicted instant attention vs. traditional eye-tracking. Neurons, Adverteyes with Clinch, and Teads have similar predictive offerings.

When AI pre-flight works best:

You produce high creative volume and need a cheap pre-screen before in-market testing burns budget.

You test concepts that differ mostly on emotional or compositional choices (where to place the logo, which first-frame reads strongest, how dense the text overlay is).

You have the option to iterate before publish, not after.

When it doesn't:

You're testing offer, pricing, or targeting, not creative.

Your category has weak overlap with the model's training data.

You treat the score as a verdict. The right read is "creative potential," not "creative destiny."

The honest version of this is: AI pre-flight tools shorten the path to a decent first draft. They don't replace in-market testing.

Pre-Flight for Brand Hygiene and Compliance

The third definition of pre-flight is the enterprise one. CreativeX's Pre-Flight Testing tool checks creative against brand guidelines (logo placement, talent usage, CTA visibility) and regulatory requirements before spend. A Creative Hygiene Score of 80% or above means the creative is cleared to launch; below that, you fix and resubmit.
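If you export scores for a batch of creatives, the 80% gate reduces to a simple triage step. The sketch below does not call any CreativeX API. It assumes you already have each creative's score as a percentage, and the function name and file names are hypothetical.

```python
def triage_by_hygiene(
    scores: dict[str, float], threshold: float = 80.0
) -> tuple[list[str], list[str]]:
    """Split creatives into (cleared, fix_and_resubmit) by hygiene score.

    Assumption: scores are percentages from 0 to 100, and a score equal to
    the threshold counts as cleared ("80% or above").
    """
    cleared = [name for name, score in scores.items() if score >= threshold]
    resubmit = [name for name, score in scores.items() if score < threshold]
    return cleared, resubmit


if __name__ == "__main__":
    batch = {"hero_video.mp4": 92.0, "banner.png": 80.0, "promo.gif": 61.5}
    cleared, resubmit = triage_by_hygiene(batch)
    print("cleared:", cleared)
    print("fix and resubmit:", resubmit)
```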

This is less about performance and more about risk management. For a global CPG or pharma brand running thousands of localized creatives across dozens of markets, the cost of one non-compliant ad going live can be bigger than a full quarter of performance optimization. This version of pre-flight is not a replacement for structured in-market testing; it's a sibling process.

Pre-Flight Testing Is Only Half the Loop

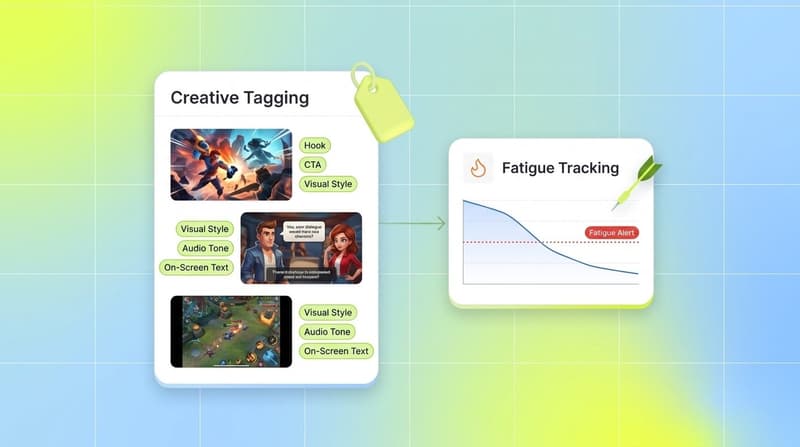

The honest limit of every pre-flight approach, whether structured in-market, AI-predictive, or compliance-based, is that it ends at launch. Pre-flight tells you what to put live with confidence. It does not tell you why the winner worked, which specific creative element carried it, or what to brief next.

That's the post-flight problem. Once an ad is running, you need element-level tagging so you can isolate the actual drivers: is it the hook, the opening visual, the on-screen text, the CTA, the music bed, the pacing? Most teams still do this by hand in spreadsheets, which is why, according to practitioner estimates, teams can spend 20+ hours per week on creative tagging and performance consolidation.

This is the gap Segwise's Creative Tagging Agent and Creative Strategy Agent sit in. The Tagging Agent uses multimodal AI to auto-tag every creative element (visual style, hook line, character, CTA, emotion, audio tone, on-screen text) across Meta, Google, TikTok, Snapchat, YouTube, AppLovin, Unity Ads, Mintegral, IronSource, and MMPs including AppsFlyer, Adjust, Branch, and Singular. The Creative Strategy Agent then lets you query the data in plain language and flags fatigue early through its fatigue tracking so you can refresh before performance crashes. Segwise is the only platform that also tags playable (interactive) ads, which matters for mobile gaming advertisers whose pre-flight pipeline includes playables.

Teams using Segwise report up to 20 hours saved per week per app or brand, and cases of up to 50% ROAS improvement by identifying winning creative patterns and catching fatigue early.

Conclusion: Making Pre-Flight Testing Work for Meta and TikTok

Pre-flight testing in 2026 is not one practice. It's three, stacked on top of each other. You evaluate creative quality with AI tools before production locks, you run structured in-market tests on Meta and TikTok before scaling, and on larger brands you add a compliance pre-flight on top. The teams that beat rising CPMs are the ones running at least two of the three, consistently, and feeding the post-flight data back into the next brief.

The core insight holds across all three definitions: the cost of a bad creative launching is always bigger than the cost of catching it before launch. Pre-flight testing is the cheapest insurance you can buy against burning a week of spend on a concept that was never going to work, and the highest-leverage moment to make your creative better is always before money moves.

Frequently Asked Questions

What is pre-flight testing for Meta and TikTok ads?

Pre-flight testing is the evaluation of ad creative before paid spend using one of three approaches. Structured in-market testing runs new creatives against each other in controlled ad sets (ASC+, CBO, ABO, or cost cap) with a 2x CPA kill rule, then pits the winner against your current best ad before scaling. AI-powered prediction tools like Smartly and Brainsight score attention, emotional response, and composition before publish to pre-screen creative volume cheaply. Brand-compliance pre-flight, via CreativeX, checks logos, talent, CTAs, and regulatory requirements against a Creative Hygiene Score. Segwise complements all three by handling the post-flight phase: multimodal tagging of every live creative element on Meta, TikTok, Google, AppLovin, Unity Ads, and more so you know which hooks, CTAs, and visuals drove the win.

How is pre-flight creative testing different from post-flight analysis?

Pre-flight testing happens before spend. It tells you what to launch with confidence. Post-flight analysis happens after spend and tells you why a winner won, which elements drove it, and what to iterate on next. Smartly frames this as a continuous loop (predict, launch, learn, refine). Segwise's multimodal tagging is the post-flight half of that loop, turning live ad data into element-level insight across Meta, TikTok, Google, AppLovin, and major MMPs.

What does pre-flight testing mean for a UA manager running Meta and TikTok campaigns?

For a working UA manager, it means a repeatable step between "creative is ready" and "creative is scaled." On Meta that's a three-phase in-market flow with a 2x CPA kill rule. On TikTok that's 3–5 hook variations per concept, a 3-second rule for the first frame, and faster refresh cadence. AI pre-flight tools like Smartly can pre-screen high creative volume. Post-flight, Segwise tags elements and catches fatigue so you know what to brief next.

How do I run pre-flight testing on Meta step by step?

1. Produce a set of candidate creatives (at least 3–5 per concept).

2. Run a new-vs-new round using ASC+, CBO, ABO, or cost cap depending on account size.

3. Apply a kill rule at 2x target CPA.

4. Take the winner and run it against your current best ad (new vs. BAU).

5. Scale only the creative that matches or beats BAU.

6. Once live, tag creative elements so you know what drove the win, then feed that into the next brief.

What's the difference between Meta pre-flight testing and TikTok pre-flight testing?

Meta pre-flight runs on longer test windows (roughly a week per phase), fewer hooks per concept, and a 2–4 week fatigue cycle. TikTok pre-flight runs on shorter windows (a few days), 3–5 hook variations per concept as the default, a 3-second opening rule, and a 3–5 day fatigue cycle at high spend. Same three-phase structure, very different pacing.

Can AI really predict ad performance before launch?

AI pre-flight tools predict creative potential, not sales. Smartly and Brainsight measure attention, emotional response, and composition quality, which correlate with engagement and recall. They do not account for targeting, placement, timing, or budget, which is why most practitioners use them as a pre-screen before in-market testing, not a replacement for it.

How many creatives should I pre-flight test at once?

Smartly recommends uploading 20 or more assets for meaningful AI benchmarks. For in-market testing on Meta, 3–5 variants per concept is standard. For TikTok, 3–5 hook variations per concept plus 10–20 raw hooks to screen first is closer to the working norm given faster fatigue.

Do I need separate pre-flight workflows for Meta and TikTok, or can I run one test?

Run them separately. Audience behavior, fatigue rate, and hook structure are different enough on Meta and TikTok that a single combined test produces noisy data. Cross-platform learnings do transfer (a strong hook usually works on both), but the kill rules, budget floors, and refresh cadences are different and should be treated that way.

Which pre-flight approach works best for mobile gaming, DTC, or subscription apps?

Mobile gaming teams should prioritize in-market testing on Meta and TikTok plus playable-ad pre-flight. Segwise is the only platform that auto-tags playable (interactive) ads, which matters when your pre-flight pipeline includes interactives. DTC brands get the most leverage from AI-powered pre-flight on hero assets (Smartly, Brainsight) combined with structured Meta and TikTok testing. Subscription apps usually run a lighter pre-flight on Meta and TikTok plus compliance checks for regulated verticals (fintech, health), with CreativeX covering the enterprise-grade brand-hygiene layer.
